News Automation Software: Power Up Your Newsroom Without Losing Trust

Imagine walking into a newsroom where the only sound is the quiet hum of servers and the rapid keystrokes of a humanoid robot at an old reporter’s desk. Headlines flash across translucent screens, generated in seconds by algorithms that have never known the smell of ink or deadline panic. This isn’t a scene from a dystopian thriller—it’s the present reality for an industry grappling with the raw power and messy implications of news automation software. As of 2025, more than 90% of newsrooms have surrendered at least part of their workflow to artificial intelligence, reshaping how news is gathered, written, and delivered at breakneck speed. But behind the gloss of efficiency and cost savings lies a tangle of brutal truths: machines that hallucinate facts, audiences that bristle at algorithmic narratives, and ethical dilemmas that no line of code can untangle. This article cuts through the hype, busts the myths, and exposes what every media professional—and every news consumer—needs to know about the revolution sweeping journalism.

The rise and roots of news automation

How automation quietly infiltrated the newsroom

Long before AI learned to string together a coherent sentence, newsrooms were already on a collision course with automation. The foundations were laid in the mid-19th century, with the telegraph transforming the speed and reach of wire services. Suddenly, news could traverse continents in minutes, not days. Typewriters clicked beside telegraphs, then computers began to edge out manual reporting practices. In the 1980s, newsrooms adopted real-time data feeds and computerized layouts, setting the stage for deeper integrations decades later.

Alt text: Retro analog newsroom with typewriters and early computers, illustrating news automation software history and evolution

From the earliest days, automation was met with skepticism. Veteran journalists sneered at “soulless” technology encroaching on their domain. The culture of skepticism lingered, with seasoned editors defending traditional workflows against what seemed like a high-tech gimmick. But as deadlines shortened and reader demands grew, resistance gave way to grudging acceptance—and then to outright reliance.

"We thought automation was a gimmick—until it started rewriting our leads." — Alex, veteran editor

The transition wasn’t instant. It took decades for newsrooms to move from manual processes to semi-automated layouts and data-driven reporting. At every stage, the specter of job losses loomed, but so did opportunities for new specializations—data journalists, web editors, and now, AI wranglers.

News automation timeline: key milestones (1850–2025)

| Year | Milestone | Impact on Newsrooms |
|---|---|---|
| 1850s | Telegraph introduces real-time news wires | Breaks the speed barrier; globalizes news distribution |
| 1930s | Radio and TV begin automated news bulletins | Shift from print to broadcast; new storytelling forms |
| 1970s | Computerized typesetting adopted | Streamlines production; reduces manual labor |
| 1990s | Internet enables instant publishing | Newsrooms reorganize around digital-first models |
| 2010s | Algorithmic content creation debuts | First “robot journalists” write basic financial/news briefs |
| 2020 | Large language models emerge | AI starts summarizing, generating, and distributing news |
| 2023–2025 | Over 90% of newsrooms use AI in some capacity | Full integration of news automation software; hybrid (AI + human) workflows become the norm |

Table 1: Major milestones in the adoption of news automation software from telegraph to AI-powered newsrooms

Source: Original analysis based on Reuters Institute, 2024, BBC, 2025

The AI-powered news generator: what changed in 2024-2025

The leap from rule-based templates to generative AI in 2024–2025 was nothing short of seismic. Old-school automation produced barely readable stock reports and weather updates; today’s news automation software—powered by large language models—can craft nuanced, multi-sourced articles that pass for human work. Platforms like newsnest.ai have set new benchmarks for speed, scalability, and customizability.

According to recent studies, 90% of newsrooms now use AI for story production, 80% for distribution, and 75% for news gathering. This isn’t just about robots writing weather reports. AI is summarizing breaking news, generating headlines, and even suggesting interview questions. Yet, the technology is not without flaws: chatbots still stumble over summarization, sometimes “hallucinating” facts or missing critical context. Human oversight remains the safety brake that keeps the machine from careening into misinformation.

Alt text: Futuristic newsroom with transparent screens, AI avatars, and news automation software in action

Statistical summary: AI-generated vs. human-written news (2023–2025)

| Year | % AI-Generated Articles | Accuracy (AI) | Accuracy (Human) | % Hybrid Verification |
|---|---|---|---|---|
| 2023 | 50% | 85% | 95% | 60% |
| 2024 | 75% | 89% | 95% | 72% |
| 2025 | 90% | 91% | 97% | 75% |

Table 2: Comparative accuracy and adoption rates of AI-generated vs. human-written news, 2023–2025

Source: BBC, 2025, Reuters Institute, 2024

Major global media houses have not only adopted AI-powered news generators but shaped their editorial standards around them. The speed and volume are staggering, but what sets leaders apart is their commitment to hybrid verification: machines for the grind, humans for the nuance.

What is news automation software—really?

Beyond the buzzwords: core functions explained

Strip away the marketing jargon, and news automation software is a command center for ingesting mountains of data, generating original content, and distributing news at scale. These platforms do more than just write articles—they scrape live feeds, pull in official data, monitor social media, and produce tailored summaries for different audiences.

At the heart of these systems is a triad of powerful capabilities:

- Data ingestion: Connecting to real-time data sources, from stock markets to emergency services.

- Content generation: Using LLMs (large language models) to turn raw data into readable articles, headlines, and alerts.

- Distribution: Pushing content out to websites, apps, and social platforms—all in seconds.

What makes these platforms tick is the combination of semantic search (finding what matters in oceans of data) and real-time scraping (pulling in and verifying public information). The result: a newsroom that never sleeps, capable of breaking stories at a velocity humans simply can’t match.
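To make the keyword-versus-meaning distinction concrete, here is a minimal sketch of semantic ranking. It assumes every feed item already has an embedding vector; the three-dimensional vectors below are hand-made for illustration only, and the embedding model that would normally produce them is not shown.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hypothetical, hand-made embeddings (axes loosely: weather, finance, politics).
FEED = {
    "Storm surge floods coastal towns": [0.9, 0.1, 0.0],
    "Central bank holds interest rates": [0.0, 0.9, 0.2],
    "Council votes on flood defences": [0.6, 0.1, 0.6],
}

def rank(query_vec, feed=FEED):
    """Return headlines ordered from most to least similar to the query."""
    return sorted(feed, key=lambda title: cosine(query_vec, feed[title]), reverse=True)

# Hypothetical embedding of the query "hurricane damage".
query = [1.0, 0.0, 0.1]
ranked = rank(query)
print(ranked)
```

The query shares no words with the top-ranked headline, which is the point: ranking follows the vectors, not the vocabulary.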

Alt text: Macro photo of digital code morphing into a news headline, representing news automation software’s transformation process

Key terms every newsroom futurist should know

- AI-powered news generator: An advanced software platform that uses artificial intelligence and large language models to produce original news content automatically, often in real time. Example: newsnest.ai.

- Automated newsroom: A newsroom where routine news production, from data collection to article publication, is largely managed by software, allowing editorial staff to focus on oversight and analysis.

- LLM content generation: The use of large language models (such as GPT-family models) to generate text that mimics human journalistic style, with varying degrees of accuracy and depth.

- Semantic search: An AI-driven approach to searching complex data based on meaning and context rather than simple keywords, crucial for finding relevant facts amid noise.

- Real-time scraping: The process of continuously collecting and updating newsworthy information from live sources such as government databases, press releases, or social media updates.

How it differs from content mills and low-quality automation

Not all automation is created equal. News automation software in 2025 is a universe away from the “content mills” of the 2010s, which churned out keyword-stuffed, low-value articles. Today’s platforms are built on deep learning, not shallow templates. They can synthesize multiple data streams, perform basic fact-checks, and adjust tone or style for different audiences.

What sets true AI-powered news generation apart?

- Depth over volume: Advanced platforms prioritize original analysis and synthesis, not just regurgitating wire copy.

- Contextual sensitivity: AI can tailor content for local dialects, cultural sensitivities, and audience preferences.

- Transparency tools: Leading tools log every source and transformation step for editorial review.

- Editorial oversight: Human editors direct, review, and correct AI output before publication.

- Multi-modal capabilities: Ability to integrate images, video summaries, and interactive content.

- Customizable workflows: Newsrooms can define style guides, approval chains, and escalation processes.

- Continuous learning: Systems improve over time, learning from both successes and corrections.

But there’s a catch: no software, however advanced, can replicate a seasoned journalist’s intuition or ethical judgment. Editorial oversight is not just a best practice—it’s the last defense against unintended consequences.

"You can't automate gut instinct—but you can automate the grind." — Jordan, digital news manager

Common myths—and the messy realities

Mythbusting: Is AI news always fake?

Let’s cut straight to the chase: AI-generated news is not inherently unreliable. In fact, multiple studies have shown that, when properly overseen, AI can achieve accuracy rates exceeding 90% for routine stories—sometimes even outperforming rushed human writers in breaking news scenarios. The catch? Oversight and hybrid verification are non-negotiable.

Top 8 misconceptions about news automation software

- “AI news is always fake.” False: accuracy depends on oversight, data quality, and editorial intervention.

- “Automation eliminates human jobs.” Not quite. While some roles shrink, others emerge: data editors, AI trainers, verification specialists.

- “Automated news is low quality.” Only if you use outdated or unsupervised systems. Leading platforms rival human output for routine topics.

- “AI cannot handle nuance.” Partial truth: LLMs struggle with complex analysis but excel at structured reporting.

- “Robots decide what’s newsworthy.” Not unless you let them. Most systems require editorial input on priorities.

- “Automation is just for big outlets.” In reality, local and niche publishers are among the biggest adopters.

- “AI-generated news is always biased.” Bias is a risk in any system, mitigated by transparent algorithms and diverse training data.

- “Machines can’t do investigative journalism.” Correct, for now. Deep-dive reporting still belongs to humans, with AI supporting research.

Verified case studies from 2025 prove that AI-written articles can and do hold up under scrutiny, provided they’re subjected to rigorous checks. Still, risks lurk at every stage—particularly when automation is left unchecked, leading to bias, plagiarism, or outright fabrications (“hallucinations”).

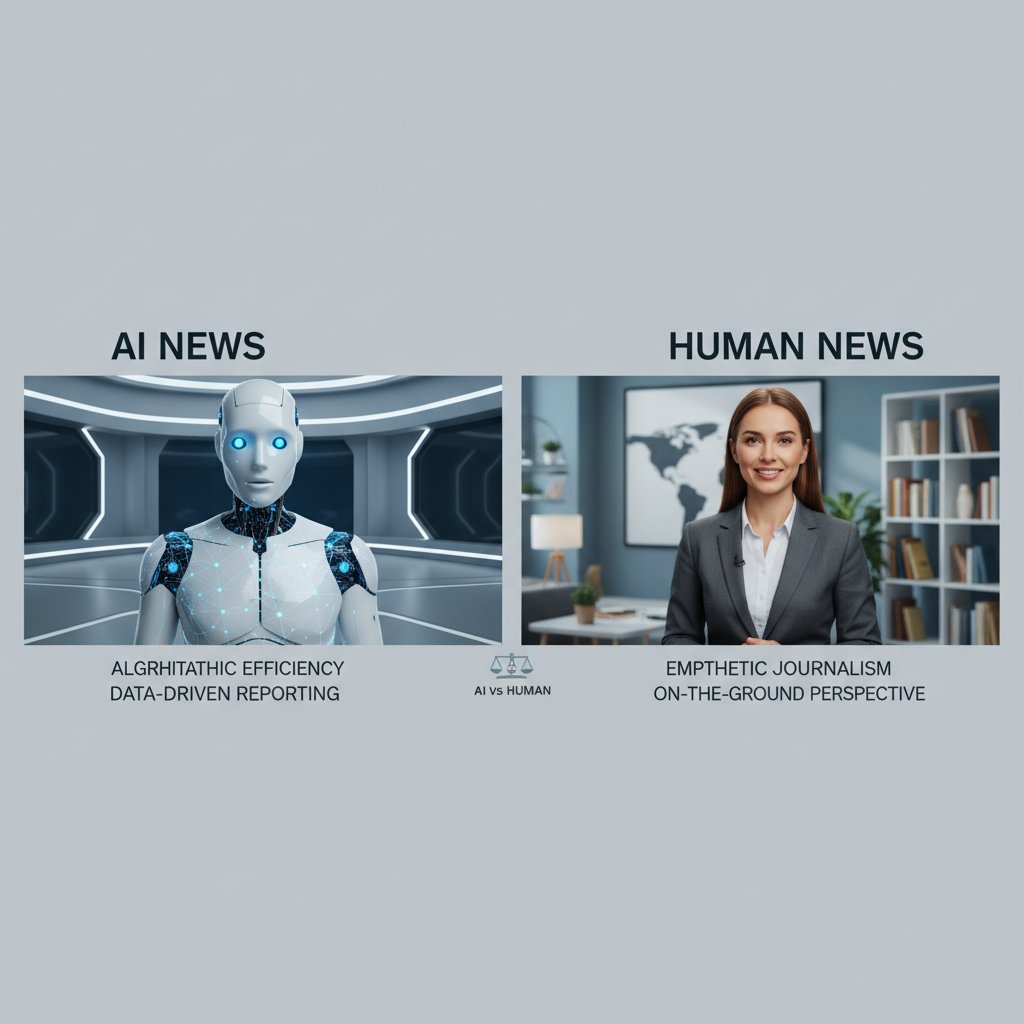

Alt text: Split-screen of AI-generated news article and human-written article highlighting news automation software differences

Does automation kill journalism jobs—or save them?

The existential anxiety swirling around automation is real. Newsrooms across the world have seen layoffs, with some slashing up to 40% of their staff after deploying automated systems. But that’s only half the picture. Many outlets have used the freed-up resources to hire specialists in AI oversight, data verification, and audience engagement.

Newsroom staffing and output: before and after automation

| Year | Average Headcount | Articles per Day | % Automated Content | Editorial Roles Gained/Lost |
|---|---|---|---|---|
| 2020 | 60 | 70 | 15% | +2 data editors, -3 copy editors |
| 2025 | 38 | 140 | 80% | +7 AI trainers, -12 generalists |

Table 3: Comparison of newsroom headcounts and output pre- and post-automation

Source: Original analysis based on Reuters Institute, 2024 and surveyed newsrooms
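The arithmetic behind Table 3 is worth spelling out. The short sketch below uses only the headcount and output figures from the table; the variable and function names are illustrative.

```python
# Figures taken from Table 3.
before = {"headcount": 60, "articles_per_day": 70}    # 2020 row
after = {"headcount": 38, "articles_per_day": 140}    # 2025 row

def pct_change(old, new):
    """Relative change from old to new, as a fraction."""
    return (new - old) / old

def per_head(row):
    """Articles published per staff member per day."""
    return row["articles_per_day"] / row["headcount"]

headcount_change = pct_change(before["headcount"], after["headcount"])
output_change = pct_change(before["articles_per_day"], after["articles_per_day"])

print(f"Headcount change: {headcount_change:+.0%}")   # -37%
print(f"Output change: {output_change:+.0%}")         # +100%
print(f"Articles per person per day: {per_head(before):.2f} -> {per_head(after):.2f}")  # 1.17 -> 3.68
```

In other words, the table describes a newsroom that shed roughly 37% of its staff while output per person roughly tripled.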

Some organizations cut deeply and never recovered, losing institutional knowledge and audience trust. Others invested in upskilling, creating hybrid teams that paired reporters with technologists. The editorial role is evolving—from fact-finder to AI conductor.

"We lost some writers, but gained a whole team of AI wranglers." — Morgan, news product lead

Inside the machine: how AI-powered news generators work

Data in, headlines out: the process dissected

The magic of news automation software is in the pipeline. Here’s what happens under the hood:

- Data ingestion: Systems connect to APIs, live feeds, and databases to gather raw facts—stock quotes, weather data, public safety alerts.

- Processing: The software cleans and organizes data, applying semantic analysis to extract meaning and relevance.

- Prompt engineering: Editors or algorithms craft prompts that guide the AI to produce desired outputs—concise briefs, in-depth explainers, or real-time alerts.

- Article generation: LLMs generate drafts, which may be fact-checked automatically or flagged for human intervention on sensitive topics.

- Editorial review: Human editors review, tweak, and approve content before publication.

- Distribution: Approved stories are published across digital channels, with some platforms automating social sharing and push notifications.
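The steps above can be sketched as a chain of small functions. This is a toy illustration, not any vendor's architecture: the `Story` class, the field names, the sensitive-topic list, and the stub model and editor are all assumptions made so the example runs on its own.

```python
from dataclasses import dataclass, field

REQUIRED_FIELDS = {"topic", "headline", "value"}   # hypothetical schema
SENSITIVE_TOPICS = {"crime", "election", "obituary"}  # hypothetical list

@dataclass
class Story:
    facts: dict
    draft: str = ""
    flags: list = field(default_factory=list)
    approved: bool = False

def ingest(records):
    """Ingestion and processing: keep only records with every required field."""
    return [Story(facts=r) for r in records if REQUIRED_FIELDS.issubset(r)]

def generate(story, model):
    """Prompt engineering and article generation via any callable model."""
    f = story.facts
    prompt = f"Write a short brief about {f['topic']}: {f['headline']} is {f['value']}."
    story.draft = model(prompt)
    if f["topic"] in SENSITIVE_TOPICS:
        story.flags.append("sensitive-topic")  # flagged for human intervention
    return story

def review(story, editor):
    """Editorial review: a human decision gates publication."""
    story.approved = editor(story)
    return story

def distribute(stories):
    """Distribution: only approved drafts are released."""
    return [s.draft for s in stories if s.approved]

# Stand-ins so the sketch runs without a real model or a real editor.
def stub_model(prompt):
    return "DRAFT: " + prompt

def cautious_editor(story):
    return not story.flags  # rejects anything flagged, approves the rest

records = [
    {"topic": "weather", "headline": "Rainfall", "value": "32 mm"},
    {"topic": "election", "headline": "Turnout", "value": "61%"},
    {"topic": "markets"},  # incomplete record, dropped at ingestion
]
stories = [review(generate(s, stub_model), cautious_editor) for s in ingest(records)]
published = distribute(stories)
print(published)
```

Passing the model and the editor in as callables keeps the human checkpoint explicit: nothing reaches `distribute` without an approval decision.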

Key technical definitions (with real-world context)

- Prompt engineering: Designing effective instructions for AI models to ensure accurate, relevant, and contextually appropriate news output. Example: A prompt specifying “summarize local election results in under 200 words, include quotes.”

- Semantic analysis: AI-driven interpretation of text or data to determine meaning, sentiment, and relevance. Used to filter noise from breaking news feeds.

- Automated fact-checking: Automated routines that cross-reference generated news against trusted databases and historical records, flagging anomalies for review.

- Editorial review: Human editors are the final checkpoint, catching errors, injecting local color, and making judgment calls machines simply can’t.
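As an illustration of prompt engineering, the election example above can be turned into a reusable template. The function name, parameters, and rule wording are assumptions for this sketch; the output is plain text that could be passed to whichever model a newsroom uses.

```python
def build_prompt(task, max_words, must_include, source_text):
    """Assemble a constrained prompt from editorial requirements."""
    rules = [f"Stay under {max_words} words."]
    rules += [f"Include {item}." for item in must_include]
    # Guardrail against invented details (wording is illustrative).
    rules.append("Use only facts from the source text; say so if something is missing.")
    lines = [f"Task: {task}", "Rules:"]
    lines += [f"- {rule}" for rule in rules]
    lines += ["Source text:", source_text]
    return "\n".join(lines)

prompt = build_prompt(
    task="Summarize local election results",
    max_words=200,
    must_include=["quotes"],
    source_text="(paste verified results and quotes here)",
)
print(prompt)
```

Keeping the constraints as data rather than free text makes them easy to audit and to change per desk or per story type.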

Alt text: Collaborative newsroom team working alongside AI-powered news automation software pipeline

Quality control: keeping the bots honest

No matter how advanced, AI is only as good as its data and oversight. The best news automation software employs both automated and manual fact-checking protocols:

- Automated validation: Cross-checking names, places, and numbers against trusted sources.

- Editorial flags: Requiring human review for ambiguous or controversial stories.

- Plagiarism checks: Preventing recycled or unoriginal content.

- Source logging: Recording every data input and transformation for transparency.

- User feedback loops: Allowing readers to flag errors, triggering corrections.

- Performance audits: Regularly evaluating AI output for bias and accuracy.
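Automated validation of numbers can be sketched in a few lines. The example below assumes the newsroom holds a list of trusted figures for a story and simply reports any number in the draft that is not on that list; real systems would also need to handle names, dates, and units, which this sketch ignores.

```python
import re

# Matches integers, thousands-separated integers, and decimals (e.g. 58, 1,250, 61.4).
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")

def extract_numbers(text):
    """Return the set of numeric values mentioned in the text."""
    return {float(match.replace(",", "")) for match in NUMBER.findall(text)}

def unverified_numbers(draft, trusted_values):
    """Numbers that appear in the draft but not in the trusted source data."""
    trusted = {float(v) for v in trusted_values}
    return sorted(extract_numbers(draft) - trusted)

draft = "Turnout reached 61.4% across 1,250 polling stations, up from 58% last time."
trusted = [61.4, 1250]  # hypothetical figures from an official results feed

to_review = unverified_numbers(draft, trusted)
print(to_review)  # [58.0]
```

A non-empty result does not mean the draft is wrong, only that an editor should check where the figure came from.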

Red flags when auditing AI-generated news

- Unverified sources cited in stories

- Inconsistent numbers or facts in similar articles

- Lack of bylines or editorial credits

- Absence of transparency or correction policies

- Repetitive errors across multiple pieces

- Overly generic or contextless reporting
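Several of these red flags can be checked mechanically. The sketch below covers three of them (missing bylines, missing sources, and figures that conflict across articles about the same event) and assumes a simple, hypothetical article record with `id`, `event`, `byline`, `sources`, and `figures` fields.

```python
def audit(articles):
    """Return (article_id, finding) pairs for a batch of article records."""
    findings = []
    first_seen = {}  # (event, metric) -> first value reported
    for art in articles:
        if not art.get("byline"):
            findings.append((art["id"], "missing byline or editorial credit"))
        if not art.get("sources"):
            findings.append((art["id"], "no sources logged"))
        for metric, value in art.get("figures", {}).items():
            key = (art["event"], metric)
            if key in first_seen and first_seen[key] != value:
                findings.append((art["id"], f"{metric} conflicts with an earlier article"))
            first_seen.setdefault(key, value)
    return findings

# Hypothetical records for two articles covering the same council vote.
articles = [
    {"id": "a1", "event": "budget-vote", "byline": "Staff",
     "sources": ["council minutes"], "figures": {"votes_for": 7}},
    {"id": "a2", "event": "budget-vote", "byline": "",
     "sources": [], "figures": {"votes_for": 9}},
]
findings = audit(articles)
for article_id, finding in findings:
    print(article_id, finding)
```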

Real-world examples illustrate the stakes: in some newsrooms, “rogue” bots have published false obituaries or misreported election results. However, platforms like newsnest.ai and their competitors now publish transparency reports, documenting every correction and update—raising the bar for accountability.

Case studies: automation in the real world

Success stories from newsrooms you wouldn’t expect

In 2024, a mid-sized regional newspaper in Germany more than doubled its daily article output after deploying news automation software. Pre-automation, the newsroom published around 40 stories a day; post-automation, that number rose to 90, with engagement metrics (click-through rates, average time-on-page) up by 35%.

Meanwhile, a digital-first startup in Southeast Asia used AI-powered news generation to break 50% more local stories than its legacy competitors, often beating traditional outlets by hours. Customizable automation let them tailor hyper-local coverage at a fraction of the usual cost.

Pre- and post-automation KPIs: three organizations compared

| Newsroom | Daily Articles (Before) | Daily Articles (After) | Engagement Change | Automation Used |
|---|---|---|---|---|
| Regional (DE) | 40 | 90 | +35% | LLM + hybrid verification |
| Startup (SEA) | 22 | 42 | +28% | Full AI pipeline |
| Metro (US) | 120 | 170 | +18% | Routine + breaking news automation |

Table 4: Key performance metrics for newsrooms before and after automation implementation

Source: Original analysis based on Reuters Institute, 2024, surveyed organizations
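Table 4 lists raw article counts; the relative growth is easier to compare. A short calculation using only the before and after figures from the table:

```python
# (newsroom, daily articles before, daily articles after), from Table 4.
rows = [
    ("Regional (DE)", 40, 90),
    ("Startup (SEA)", 22, 42),
    ("Metro (US)", 120, 170),
]

# Relative growth in daily output for each newsroom.
growth = {name: (after - before) / before for name, before, after in rows}

for name, g in growth.items():
    print(f"{name}: {g:+.0%}")
# Regional (DE): +125%
# Startup (SEA): +91%
# Metro (US): +42%
```

The smaller newsrooms show the largest relative gains, while the metro outlet adds the most articles in absolute terms (50 per day).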

Alt text: Modern newsroom team collaborating with AI dashboards, showing news automation software integration

The thread tying these successes together? Editorial buy-in, clear guidelines, and relentless transparency. When editors defined boundaries and auditors tracked every output, automation became an asset—not a liability.

When automation goes wrong: lessons from public failures

In late 2024, a national news site in the UK found itself at the center of a social media firestorm after its AI bot published a story misidentifying a public figure in a criminal report. The error spread rapidly, amplified by clickbait aggregators. Within hours, the newsroom retracted the article, issued corrections, and published a detailed transparency report. But not before reputational damage had been done and legal threats were issued.

Alternative approaches that might have prevented the fiasco included:

- Mandatory human review for sensitive topics

- Tiered access for high-risk stories

- Real-time user feedback integration
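The first two safeguards amount to a routing rule. Here is one way it might look, assuming stories carry topic tags and a flag for whether they name an individual; the topic lists and tier names are hypothetical and would come from a newsroom's own editorial policy.

```python
HIGH_RISK = {"crime", "court", "obituary"}      # hypothetical policy list
MEDIUM_RISK = {"election", "health", "finance"}  # hypothetical policy list

def review_tier(topics, names_person):
    """Pick the level of human review a generated story needs before publication."""
    topics = set(topics)
    if topics & HIGH_RISK and names_person:
        return "senior-editor-and-legal"
    if topics & (HIGH_RISK | MEDIUM_RISK):
        return "editor-review"
    return "spot-check"

print(review_tier(["crime"], names_person=True))      # senior-editor-and-legal
print(review_tier(["election"], names_person=False))  # editor-review
print(review_tier(["sports"], names_person=False))    # spot-check
```

Under a rule like this, a criminal report naming a public figure would never reach readers on the strength of the bot alone.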

Rapid correction and public transparency restored some trust, but not all. The lesson: automation only works when accountability is built in from the start.

5-step protocol to respond to AI-generated news errors

1. Immediate retraction of the erroneous article

2. Public acknowledgment and transparent explanation

3. Detailed correction and update log

4. Review of internal protocols and triggers

5. Ongoing monitoring for similar issues

Choosing the right news automation software

Key features that matter in 2025

Not all solutions are created equal. The best news automation software in 2025 offers:

- Real-time data integration and live updates

- In-depth transparency and audit logs

- Editorial controls and override options

- Advanced customization (topics, style, region)

- Multi-modal content generation (text, images, video)

- Seamless integration with existing publishing platforms

- Built-in analytics and performance metrics

Feature matrix: leading platforms vs. the rest

| Feature | newsnest.ai | Competitor A | Competitor B | Competitor C |
|---|---|---|---|---|
| Real-time Generation | ✅ | ✅ | ❌ | ✅ |
| Customization | High | Moderate | Basic | Basic |
| Scalability | Unlimited | Restricted | Restricted | Moderate |
| Editorial Controls | Advanced | Basic | Basic | Moderate |
| Transparency Tools | Full logs | Partial | None | Partial |
| Multi-modal Output | Yes | Text-only | Text-only | Yes |
| Analytics | Deep | Surface | None | Moderate |
| Cost Efficiency | Superior | High | Moderate | Moderate |

Table 5: Feature comparison matrix for leading news automation software platforms, including newsnest.ai

Source: Original analysis based on public product information and user reports

Alt text: Modern user interfaces of news automation software platforms side-by-side with highlighted features

Tips for success? Balance automation with your editorial voice. No platform, no matter how advanced, can replace the subtlety of human storytelling.

Checklist: How to implement news automation without losing your soul

Here’s a 10-point checklist for rolling out news automation software with integrity:

- Conduct a newsroom needs assessment

- Define clear editorial guidelines and redlines

- Vet and select the right software for your goals

- Pilot automation with non-critical stories

- Establish robust oversight protocols

- Train staff on hybrid workflows

- Integrate user feedback mechanisms

- Monitor for errors, bias, and drift

- Publish transparency and correction reports

- Iteratively review and improve your processes

Common mistakes include underestimating the time required for oversight, neglecting transparency, and using automation as a blunt instrument rather than a scalpel. The antidote? Continuous feedback loops, regular audits, and a newsroom culture that prizes both speed and accuracy.
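A regular audit for drift can be as simple as comparing the recent correction rate with a baseline. The sketch below assumes each audited article is recorded as `True` if it needed a correction; the sample data and the five-point tolerance are illustrative, not recommended values.

```python
def correction_rate(outcomes):
    """Share of audited articles that needed a correction (True = corrected)."""
    return sum(outcomes) / len(outcomes)

def has_drifted(baseline, recent, tolerance=0.05):
    """Flag drift when the recent rate exceeds the baseline by more than the tolerance."""
    return correction_rate(recent) - correction_rate(baseline) > tolerance

# Hypothetical audit samples.
baseline = [True] * 4 + [False] * 96   # 4 corrections in 100 articles
recent = [True] * 6 + [False] * 44     # 6 corrections in 50 articles

alert = has_drifted(baseline, recent)
print(f"baseline {correction_rate(baseline):.0%}, recent {correction_rate(recent):.0%}, alert={alert}")
```

An alert here is a prompt for a human audit, not a verdict: the cause could be a model change, a new data source, or simply a harder news week.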

Risks, ethics, and the credibility paradox

The hidden costs of automated newsrooms

While automation slashes costs and boosts output, it brings fresh risks: algorithmic bias, echo chambers, and creeping dependency on proprietary, opaque systems. Financially, some publishers report savings of up to 60% on content production, but the reputational stakes are far higher. One scandal or high-profile error can undo years of trust—and audience loyalty is notoriously fickle.

Ethical dilemmas and mitigation strategies

| Dilemma | Example Scenario | Mitigation Strategy |
|---|---|---|
| Bias in Training Data | Over-representation of official sources | Diversify training datasets, manual review of outputs |
| Lack of Transparency | Opaque AI “decisions” | Publish audit logs, explain algorithms |
| Plagiarism/Originality | Recycled wire copy | Integrated plagiarism detection, human oversight |
| Responsibility | Who’s accountable for errors? | Clear editorial policies, documented escalation chains |
| Source Verification | Unverified data sources | Automated cross-checks, editor sign-off |

Table 6: Common ethical dilemmas in news automation software and real-world mitigation strategies

Source: Original analysis based on CJR, 2024 and newsroom audits

7 unconventional uses for news automation software (with ethical pros/cons)

- Hyper-local crime alerts (pro: real-time updates, con: risk of wrongful associations)

- Automated sports coverage (pro: instant reporting, con: may miss narrative arcs)

- Election result tracking (pro: speed, con: potential for premature calls)

- Weather emergencies (pro: critical alerts, con: unverified rumors can spread)

- Financial market summaries (pro: factual, con: may lack expert context)

- Obituary generation (pro: efficiency, con: high reputational risk if wrong)

- Fact-checking political claims (pro: scale, con: nuance and intent may be missed)

Who takes the blame when AI gets it wrong?

Responsibility in automated newsrooms is a relay race—developers, editors, publishers, and vendors all handle the baton. When something breaks, the public wants a name, not an algorithm. High-profile cases often end with apologies from editors and product leads, but legal risk is rising for AI vendors who fail to offer transparency or basic safeguards.

Alt text: Symbolic image of a news headline disintegrating into code, representing a failure of news automation software

The consensus among industry experts: “Keep humans in the loop, draft clear policies, and publish correction logs promptly.” Credibility is fragile—transparency and accountability are the only antidotes.

Future trends and wild cards

Personalized news: the next frontier or next echo chamber?

Hyper-personalization is the holy grail—and the greatest risk. AI-powered news feeds can tune every headline to a reader’s interests, browsing habits, and even emotional state. The upside is relevance; the downside is an invisible bubble that keeps uncomfortable truths and diverse perspectives out of sight.

Best-case scenario: personalized feeds empower audiences to stay informed without drowning in noise. Worst-case? Audiences become trapped in bespoke echo chambers, never challenged or surprised. The most likely reality is somewhere in between—an arms race between personalization features and tools for transparency and media literacy.

"Personalization will save us time—or trap us in our own bubbles." — Taylor, AI ethics analyst

Media literacy training and clear labeling—“This story was generated by AI, reviewed by editors”—are emerging as best practices.

Cross-industry lessons: what news can steal from finance and music

Other industries have faced their own automation revolutions:

- Finance: Automated trading platforms, once controversial, now dominate markets. Key lesson: strict oversight and circuit-breakers are non-negotiable.

- Music: Algorithmic composition has democratized music-making—yet the best results come from human–machine collaboration.

- E-commerce: Personalization engines have transformed recommendations—media can borrow “explainable AI” methods to surface why readers see certain stories.

5 strategic tips borrowed from outside media

- Build explainability into every AI process

- Maintain manual overrides for emergencies

- Invest in cross-functional teams (editors + engineers)

- Monitor for drift with regular audits

- Prioritize user feedback—never automate in a vacuum

What readers really think about AI-written news

Recent surveys paint a nuanced picture. According to a 2025 study by the Reuters Institute, only 23% of readers trust AI-generated news as much as human-written stories; 61% say they want clear labeling, while 42% report they can’t always tell the difference. Feedback ranges from open enthusiasm (“It’s so fast!”) to skepticism (“It feels soulless.”) to outright confusion about what’s “real.”

Transparency matters: articles labeled as “AI-generated, editorially reviewed” receive higher engagement and lower bounce rates than unlabeled or opaque stories. Trust may be fragile, but it is recoverable—with the right mix of openness and humility.

Putting it all together: mastering news automation in 2025

Step-by-step guide to building your AI newsroom

Transforming a traditional newsroom is a marathon, not the flip of a switch. Here’s a 12-step process:

1. Audit your current workflow and pain points

2. Define clear objectives for automation (speed, volume, analytics, etc.)

3. Identify key areas ripe for automation (routine briefs, data-heavy beats)

4. Research and evaluate leading news automation software

5. Engage stakeholders across editorial, tech, and legal teams

6. Pilot with non-critical content to test reliability

7. Develop editorial guidelines and oversight checkpoints

8. Train editors and reporters on hybrid workflows

9. Integrate transparency and correction protocols

10. Roll out automation incrementally, monitoring for issues

11. Collect ongoing user and staff feedback

12. Iterate, audit, and optimize regularly

Common pitfalls include rushing implementation, ignoring staff concerns, and underestimating the need for ongoing audits. Sidestep these by building a culture that values both speed and skepticism.

Alt text: Energized team of humans and robots collaborating in a modern newsroom, illustrating news automation software in action

Conclusion: Embracing the uncomfortable future

If news automation software has taught us anything, it’s that journalism isn’t about to be replaced—but it is being radically redefined. The best newsrooms of 2025 blend the ruthless efficiency of AI with the irreplaceable judgment of seasoned editors. The stakes? Nothing less than the public’s trust in what’s real and what matters.

This revolution isn’t comfortable—and that’s the point. Every newsroom faces a choice: adapt, interrogate, and shape these tools with integrity, or risk being shaped (and replaced) by them. The brutal truths exposed here aren’t a warning—they’re a call to action. Don’t wait for the algorithm to write your legacy. Seize it, challenge it, and build something better.

For those determined to lead, platforms like newsnest.ai are more than vendors—they’re partners in shaping the next chapter of journalism. The future of news is already here. The choice—and the responsibility—belongs to all of us.

Sources

References cited in this article

- BBC: AI chatbots unable to accurately summarise news (bbc.com)

- Reuters Institute: AI and the Future of News (reutersinstitute.politics.ox.ac.uk)

- Columbia Journalism Review: Artificial Intelligence in the News (cjr.org)

- Forbes: Beyond Misinformation (forbes.com)

- The Guardian: Nearly 50 news websites are ‘AI-generated’ (theguardian.com)

- Automated journalism - Wikipedia (en.wikipedia.org)

- NYT: The Rise of the Robot Reporter (nytimes.com)

- CJR: Guide to Automated Journalism (cjr.org)

- Nieman Reports: Automation in the Newsroom (niemanreports.org)

- Tandfonline: Algorithms, Automation, and News (tandfonline.com)

- AP: Applying Automation in the Newsroom (ap.org)

- AP: AI-powered news tips to local newsrooms (ap.org)

- Nieman Lab: Get ready for the AI-driven world of news (niemanlab.org)

- Personate: AI News Generators Reshaping Newsrooms in 2025 (blog.personate.ai)

- WAN-IFRA: News Automation - The rewards, risks and realities (wan-ifra.org)

- ResearchGate: News Automation (researchgate.net)

- Wired: Humans Can’t Expect AI to Just Fight Fake News for Them (wired.com)

- The Conversation: How close are we to an accurate AI fake news detector? (theconversation.com)

- ToolBaz: AI News Article Generator (toolbaz.com)

- Easy-Peasy.AI: Free AI News Article Generator (easy-peasy.ai)

- Automate.org: Real-World Case Studies (automate.org)

- Tipalti: AP Automation Case Studies (tipalti.com)

- Automation World (automationworld.com)

- Superdesk (superdesk.org)

- Paperless Europe: Best AP Automation Software 2025 (paperlesseurope.com)

- BusinessWire: Soul Machines Partners with ServiceNow (businesswire.com)

- ISOJ: The Missing Piece—Ethics and Ontology (isoj.org)

- Sage Journals: Algorithms in the newsroom? (journals.sagepub.com)

- J-Source: What happens when newsrooms get automated? (j-source.ca)

- Medium: It’s Expensive to Be Poor—AI in the Newsroom (medium.com)

- ScienceDaily: Demands Accountability in AI (sciencedaily.com)

- Forbes: AI Accountability (forbes.com)

- INMA: 2025 Newsroom Trends (inma.org)

- TechTarget: 6 IT Automation Trends to Watch in 2025 (techtarget.com)
