AI Content Generator or Editor-In-Chief? Who Writes 2026 News

Step into any newsroom today and you won’t just hear the clatter of keystrokes; you’ll sense the whirring of algorithms shaping headlines before the coffee brews. The AI content generator isn’t a niche tool or tomorrow’s fantasy: it’s the backbone of a seismic shift in how news is written, delivered, and, crucially, trusted. With an estimated 60% to 90% of digital news content now at least partially generated by AI (Reuters Institute, 2025), the lines between human insight and machine logic have blurred more than most audiences realize. The stakes? Journalistic integrity, public trust, and the very fabric of truth in the digital age. If you’re still picturing an intern cobbling together wire copy as the face of news automation, you’re about five years behind. Welcome to the underbelly of the news revolution, where speed, bias, and raw machine power upend everything you thought you knew about information.

The AI content generator revolution: How we got here

A brief history of automated news writing

AI-generated journalism didn’t appear from thin air. The journey began with clunky attempts at sports recaps and financial reports in the early 2010s, stories that sometimes read like a programmer’s inside joke. Editors warily eyed these robo-writers, finding both spectacular failures (think weather articles confusing Celsius with Fahrenheit, causing a minor panic in local communities) and unexpected breakthroughs. One early case: the 2014 Associated Press experiment with automated earnings reports, which quietly, and accurately, published thousands of business updates without missing a beat. But what really changed the game was the rise of Large Language Models (LLMs), such as BERT (2018) and the GPT series (GPT-2 in 2019 and its successors). These models didn’t just regurgitate data; they synthesized it, infusing context and nuance on a scale that stunned traditional journalists.

When ChatGPT, launched in late 2022, exploded into the public sphere in 2023, it became clear that AI content generators were no longer just newsroom curiosities. The arrival of multimodal models (systems that handle text, images, and data simultaneously) pushed even the most skeptical editors to reconsider what machines could do. By 2025, these tools had evolved from glorified templates to full-blown collaborators. According to the Reuters Institute (2025), between 60% and 90% of digital news content is now at least partially generated by AI.

| Year | Milestone | Impact/Setback |
|---|---|---|
| 2010 | Early experiments in sports/finance automation | Low quality, limited scope |
| 2014 | AP launches automated earnings reports | Boosts productivity, accuracy for routine stories |
| 2018 | BERT revolutionizes natural language understanding | Foundation for contextual AI writing |
| 2019 | GPT-2 (OpenAI) demonstrates fluency, coherence | Raises quality expectations |
| 2023 | ChatGPT popularizes conversational AI | Mainstream adoption, sparks ethical debates |
| 2024 | Multimodal AI models emerge | Enables text, image, and data synthesis |
| 2025 | 60–90% of online news at least partially AI-generated | Journalistic job losses, trust issues surge |

Table 1: Timeline of key AI content generation breakthroughs, 2010–2025

Source: Original analysis based on Reuters Institute, Personate.ai, and Quidgest reports

What separates hype from reality

Let’s cut through the marketing fog. AI content generator platforms are pitched as magical solutions promising perfect grammar, instant fact-checks, and zero human effort. The reality? While AI can churn out articles at breakneck speed, it still stumbles over nuance, culture-specific references, and breaking news that’s too fresh for its datasets. In 2023, a widely shared AI-generated story about a celebrity scandal went viral—only for readers to discover that the “scandal” was a recycled rumor from 2015, resurfaced because the AI misread trending data.

So what aren’t AI content generator companies telling you?

- AI-generated content can propagate outdated or incorrect facts if not meticulously checked.

- Most platforms require significant prompt engineering and editorial oversight to avoid embarrassing outputs.

- AI often struggles with names, dates, and context in fast-moving news cycles.

- “Originality” claims are often exaggerated; many generators recombine existing content.

- Editorial bias is encoded in the training data—often invisibly.

- Misinformation can spread faster, and at greater scale, than ever before.

- Human editors are still essential for high-stakes or controversial stories.

The rise of the AI-powered news generator

Enter the era of the AI-powered news generator—a category dominated by platforms like newsnest.ai that promise not just speed, but real-time accuracy and editorial adaptability. These systems don’t just automate writing; they enable businesses, publishers, and even solo creators to cover breaking news and niche beats that would have been economically unsustainable just a few years ago. The adoption curve is staggering: between 2024 and 2025, the number of AI-driven news platforms tripled, according to Pearl Lemon, 2025. Outlets such as the Washington Post and BBC now rely on AI for routine reporting and, increasingly, for virtual presenters.

This isn’t just an efficiency play. As traditional ad revenue tanks—projected to drop by $2.4 billion by 2026—AI content generators are seen as a lifeline for both legacy and upstart newsrooms. But with this explosion comes a new set of risks, from brand safety nightmares to the deepening of echo chambers via personalized, algorithm-driven feeds.

How AI content generators actually work (and why it matters)

The tech under the hood: LLMs, prompts, and pipelines

At their core, AI content generators are powered by Large Language Models (LLMs). These are neural networks trained on massive swaths of internet text, news articles, books, and even social media chatter. But it’s not just about the data; it’s about how you steer it. This is where prompt engineering comes into play: crafting the right cues to guide the AI toward coherent, informative, and accurate articles. Behind the scenes, data pipelines continuously feed fresh information to these models, keeping their working context current without sacrificing consistency.

Key terms defined:

Prompt engineering: The art and science of designing input text (prompts) to elicit desired outputs from an AI model. Mastery here means the difference between bland summaries and newsworthy scoops.

Hallucination: When an AI confidently invents facts, statistics, or quotes that sound plausible but have no basis in reality. This is one of the biggest dangers in automated news writing.

Bias: Systemic preferences or prejudices inherited from training data, which can skew AI-generated content in subtle (or sometimes glaring) ways.
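To make prompt engineering less abstract, here is a minimal sketch of a reusable prompt template for an earnings brief. The template wording, the field names, and the `build_prompt` helper are all illustrative assumptions, not the prompt any particular platform uses.

```python
from string import Template

# Hypothetical template for an earnings brief; the wording and field names
# are illustrative assumptions, not any vendor's actual prompt.
EARNINGS_PROMPT = Template(
    "You are a wire-service business reporter.\n"
    "Write a $word_limit-word news brief about $company's $quarter results.\n"
    "Use ONLY the figures listed below. If a figure is missing, say so "
    "instead of estimating.\n"
    "Figures:\n$figures"
)


def build_prompt(company: str, quarter: str, figures: dict, word_limit: int = 150) -> str:
    """Fill the template with vetted data so the model has less room to invent facts."""
    # Sort the figures so the same input always produces the same prompt.
    figure_lines = "\n".join(f"- {name}: {value}" for name, value in sorted(figures.items()))
    return EARNINGS_PROMPT.substitute(
        company=company, quarter=quarter, figures=figure_lines, word_limit=word_limit
    )
```

Passing the figures explicitly and telling the model not to estimate is one common way to reduce hallucination, though it does not eliminate it.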

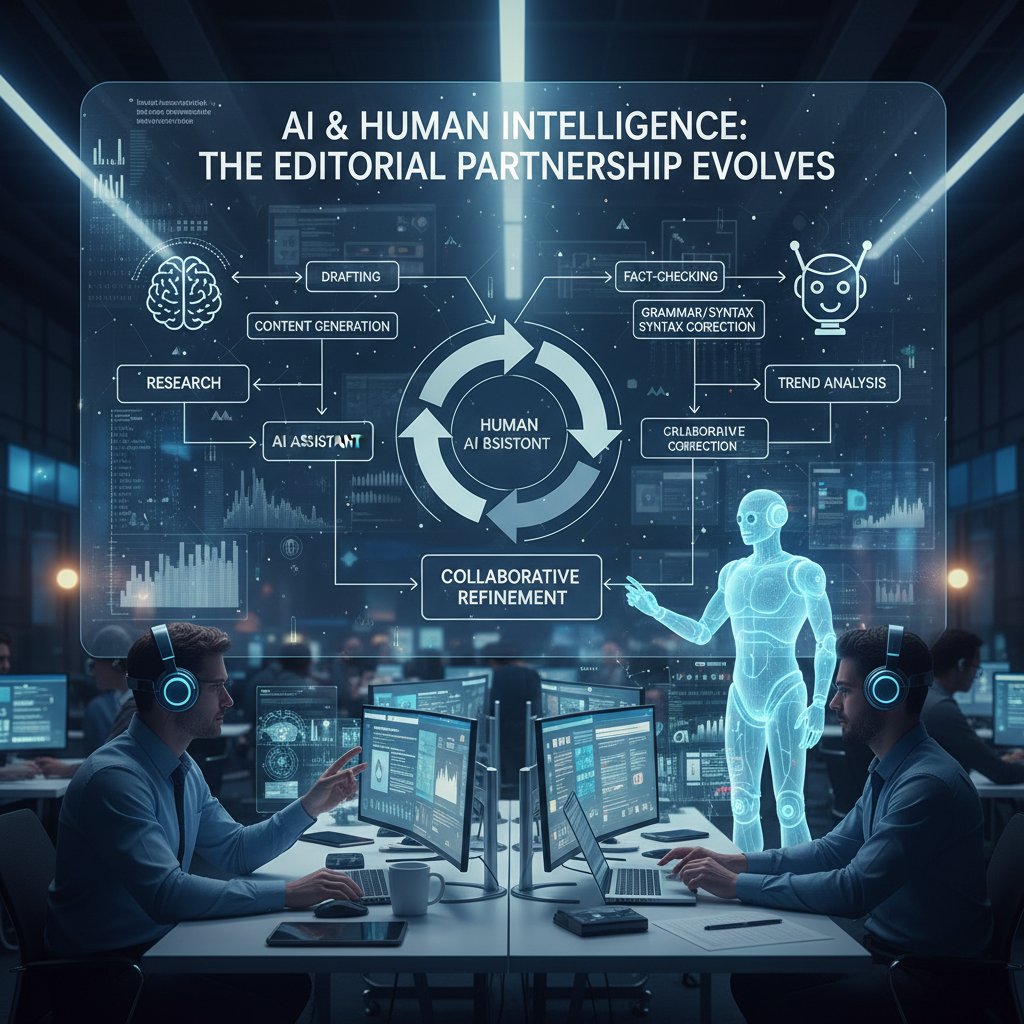

From input to headline: The step-by-step journey

How does raw data become a polished article? Here’s the journey, step by step:

- Data ingestion: Scrape structured and unstructured data from trusted feeds.

- Prompt creation: Editorial team or automated tools craft contextual prompts.

- Initial AI draft: LLM generates a first-pass article from the input.

- Fact-checking: Automated checks against databases flag inconsistencies.

- Editing loop: Human editors review, re-prompt, and refine as needed.

- Bias audit: Algorithms and/or humans scan for bias and tone issues.

- Headline optimization: AI suggests SEO-friendly headlines.

- Publishing: Final article is pushed to web, app, or syndication partners.
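A few of the stages above (prompt creation, drafting, fact-checking, the human editing loop, and publishing) can be sketched as a chain of small functions. This is a simplified illustration under stated assumptions: `Story`, `run_pipeline`, and the other names are hypothetical, `generate` stands in for whatever model call a newsroom actually uses, and the fact check is deliberately naive.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Story:
    source_data: dict                 # output of the data-ingestion stage
    prompt: str = ""
    draft: str = ""
    flags: list = field(default_factory=list)
    approved: bool = False


def create_prompt(story: Story) -> Story:
    facts = "; ".join(f"{k}={v}" for k, v in story.source_data.items())
    story.prompt = f"Write a short news brief using only these facts: {facts}"
    return story


def draft(story: Story, generate: Callable[[str], str]) -> Story:
    # `generate` is a placeholder for the real LLM call.
    story.draft = generate(story.prompt)
    return story


def fact_check(story: Story) -> Story:
    # Naive check: every numeric source value must appear verbatim in the draft.
    for key, value in story.source_data.items():
        if isinstance(value, (int, float)) and str(value) not in story.draft:
            story.flags.append(f"missing or altered figure: {key}={value}")
    return story


def run_pipeline(source_data: dict, generate, approve) -> Story:
    story = Story(source_data=source_data)
    story = fact_check(draft(create_prompt(story), generate))
    story.approved = approve(story)   # editing loop: an editor signs off or rejects
    return story


def publish(story: Story) -> str:
    if not story.approved:
        raise PermissionError("human sign-off required before publication")
    return story.draft
```

The design point is the last function: publication is gated on explicit approval, so a flagged draft cannot go out just because the model produced it quickly.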

Optimizing each stage can shave hours off manual workflows. Newsnest.ai, for example, automates prompt refinement and bias detection, claiming a 60% reduction in editorial turnaround times (source: Personate.ai, 2025).

| Workflow Stage | Manual Process Time | AI-Driven Process Time | Cost (Manual) | Cost (AI) |
|---|---|---|---|---|
| Data collection | 2 hours | 5 minutes | $75 | $5 |
| Drafting | 3 hours | 10 minutes | $100 | $8 |
| Fact-checking | 1 hour | 2 minutes | $35 | $2 |
| Editing & headline | 2 hours | 10 minutes | $75 | $6 |
| Publishing | 30 minutes | 1 minute | $15 | $1 |

Table 2: Manual vs. AI-driven news production workflows and cost metrics

Source: Original analysis based on Pearl Lemon, Personate.ai, and newsroom survey data
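For readers who want the totals, the short script below simply adds up the rows of Table 2. The numbers are copied from the table; the `summarize` helper is an illustrative name, and the percentages are plain arithmetic on those rows rather than an independent measurement.

```python
# Rows copied from Table 2:
# (stage, manual minutes, AI minutes, manual cost in USD, AI cost in USD)
STAGES = [
    ("Data collection", 120, 5, 75, 5),
    ("Drafting", 180, 10, 100, 8),
    ("Fact-checking", 60, 2, 35, 2),
    ("Editing & headline", 120, 10, 75, 6),
    ("Publishing", 30, 1, 15, 1),
]


def summarize(stages):
    """Total each column and compute the implied percentage reductions."""
    manual_min = sum(row[1] for row in stages)
    ai_min = sum(row[2] for row in stages)
    manual_cost = sum(row[3] for row in stages)
    ai_cost = sum(row[4] for row in stages)
    return {
        "manual_minutes": manual_min,
        "ai_minutes": ai_min,
        "manual_cost": manual_cost,
        "ai_cost": ai_cost,
        "time_reduction_pct": round(100 * (1 - ai_min / manual_min), 1),
        "cost_reduction_pct": round(100 * (1 - ai_cost / manual_cost), 1),
    }


print(summarize(STAGES))
```

Per article, the table works out to 8.5 hours and $300 for the manual process versus 28 minutes and $22 for the AI-driven one, a reduction of roughly 94.5% in time and 92.7% in cost. That is noticeably higher than the 60% turnaround reduction quoted above, so the two figures may be measuring different things.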

Beyond the algorithm: The limits of ‘automation’

The seductive myth: AI content generators are “set and forget.” The harsh reality: every platform, no matter how advanced, still needs human oversight. Automation can streamline, but it can also amplify errors at warp speed. As AI skeptic Alex put it:

“Handing over the newsroom to an algorithm is like letting a self-driving car navigate a war zone. You might get there faster, but you’re gambling with every mile.” — Alex, media analyst, 2025

Practical editorial control means building hybrid workflows where humans set guardrails, review outputs, and intervene when algorithms hit their limits. The smartest organizations treat AI as a force multiplier—not an excuse to downsize critical thinking.

Debunking the myths: What AI content generators can and can’t do

Myth #1: All AI-generated content is low quality

Let’s kill this myth for good. In data-heavy domains (earnings reports, sports recaps, weather bulletins), AI-generated news doesn’t just outpace human writers; it often matches or beats them on accuracy. According to Quidgest (2025), many financial publishers now prefer AI-drafted copy for breaking market updates because it reduces headline errors and typos by 75%.

But the pendulum swings both ways. In 2024, an AI system tasked with summarizing a political debate invented quotes for two candidates, sparking a wave of corrections and an apology from the newsroom. The lesson? Quality varies based on training data, oversight, and editorial principles.

Myth #2: AI can’t handle breaking news

A decade ago, this was true. Today, it’s dead wrong—at least for structured news. AI-powered platforms like newsnest.ai deliver real-time updates faster than any human, parsing live feeds and turning them into accessible stories in minutes. But limitations persist:

- Limited context for highly local or nuanced events.

- Struggles with unexpected slang or cultural references.

- Can misinterpret rapidly evolving facts (e.g., during disasters).

- Dependent on quality and speed of data inputs.

- Lacks on-the-ground reporting instincts.

- Vulnerable to manipulation by bad actors seeding false data.

Industry insider Julia, reflecting on a recent crisis, said:

“AI is lethal for speed, but still needs human eyes for sense-making—especially when facts change by the hour.” — Julia, newsroom innovation lead, 2025

Myth #3: AI content generators are all the same

Just as every newsroom has its quirks, not all AI platforms are created equal. Differences in model architecture, data sources, and editing tools can mean the difference between readable copy and disaster.

| Platform | Customization | Data Sources | Editorial Tools | Reliability |
|---|---|---|---|---|
| Newsnest.ai | High | Diverse | Advanced | Very High |
| Competitor A | Limited | Narrow | Basic | Moderate |
| Competitor B | Medium | Moderate | Standard | High |
| Competitor C | Basic | Public only | None | Variable |

Table 3: Feature matrix for major AI content generators (2025)

Source: Original analysis based on product documentation and newsroom interviews

When choosing a solution, scrutinize not just the marketing slick, but the quality of outputs, editorial controls, and transparency of data sourcing.

Inside the AI-powered newsroom: Real-world case studies

How legacy media is adapting (or not)

The story of the legacy newsroom is written in trial, error, and—sometimes—redemption. In 2022, a regional newspaper tried automating its local election coverage. The result? Misattributed quotes, a flood of reader complaints, and a hasty public apology. Months later, with tighter editorial guardrails, the same outlet used AI to break a story on city budget fraud, scooping competitors by hours.

Statistics from the Reuters Institute (2025) show that over 35,000 media jobs were lost between 2023 and 2024 as automation spiked. Yet hybrid AI-human teams are on the rise, blending speed with context that algorithms can’t replicate.

Startups and the underground: Disrupting the news cycle

While large outlets wrangle policy and PR, scrappy startups are eating their lunch. One such startup launched an AI-driven alert system that outpaced mainstream news by minutes on every major market movement—attracting a cult following and VC cash. But the same technology is fueling underground networks that churn out fake news for clicks and chaos. As Maya, a startup founder, admits:

“For every dreamer using AI to inform, there’s a hustler using it to deceive. The tech is neutral—the intent isn’t.” — Maya, AI news entrepreneur, 2025

Unexpected wins and spectacular failures

Not all stories are cautionary. Last year, an AI-powered tool broke news of a corporate merger hours before it hit the wires, using public filings overlooked by humans. But failures are just as instructive: a notorious AI-generated obituary in 2024 misgendered the deceased and invented surviving relatives, provoking outrage and a retraction. The lessons from both:

- Human review is non-negotiable.

- Transparent sourcing beats speed, every time.

- Niche beats (e.g., local government) still need human insight.

- Misinformation spreads fastest when unchecked by editors.

- Success depends on continuous training and prompt refinement.

Ethical minefields and the future of trust

Who’s to blame when AI gets it wrong?

Accountability in automated journalism is a legal and ethical minefield. If an AI system libels a public figure, is it the publisher, the engineer, or the algorithm at fault? Newsnest.ai and its competitors address this by baking in multi-step editorial approval processes, ensuring a human signs off before publication. But the philosophical dilemma lingers, as Sam, a newsroom editor, points out:

“When a machine makes a mistake, everyone points fingers. But if we trust AI with the facts, we own every error it makes.” — Sam, newsroom editor, 2025

AI, bias, and the invisible hand

No AI is neutral. Generators inherit not only the facts, but the blind spots and prejudices of their training data. Recent examples flagged for bias range from underreporting minority stories to amplifying political slant, as documented by Toxigon, 2025.

| Topic | Bias Incident | Source/Date |
|---|---|---|
| Immigration | Skewed language | Reuters Institute, 2024 |
| Gender equality | Stereotyping | Toxigon, 2025 |
| Political coverage | Partisan slant | Pearl Lemon, 2025 |

Table 4: Examples of AI-generated news flagged for bias

Source: Original analysis based on Reuters Institute, Toxigon, Pearl Lemon

Best practices for mitigating bias include diversifying training data, running regular audits, and maintaining transparent editorial standards—strategies increasingly adopted by leading platforms.
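As a rough idea of what a "regular audit" could look like at its very simplest, the sketch below counts loaded terms per 1,000 words and flags articles above a threshold. Everything here is an assumption for illustration: the watch list, the threshold, and the function names are hypothetical, and a real audit would rely on a reviewed lexicon plus human judgment rather than keyword counts alone.

```python
import re
from collections import Counter

# Hypothetical watch list; a real audit would use a reviewed, topic-specific lexicon.
LOADED_TERMS = frozenset({"flood", "swarm", "invasion", "radical", "so-called"})


def loaded_term_rate(text: str, lexicon=LOADED_TERMS):
    """Return (hits per 1,000 words, Counter of matched terms) for one article."""
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    if not words:
        return 0.0, Counter()
    hits = Counter(word for word in words if word in lexicon)
    return 1000 * sum(hits.values()) / len(words), hits


def audit(articles: dict, threshold: float = 5.0) -> list:
    """Return the titles whose loaded-term rate exceeds the threshold."""
    return [
        title
        for title, text in articles.items()
        if loaded_term_rate(text)[0] > threshold
    ]
```

A flagged title is only a prompt for an editor to take a closer look; keyword matching cannot tell a quoted slur from an endorsed one, which is why the human review step still matters.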

The battle for public trust in 2025

According to Reuters Institute data from 2025, public trust in AI-generated news is sharply polarized. While some audiences value speed and breadth, others express deep skepticism about invisible algorithms shaping their worldview. For newsrooms, rebuilding credibility means doubling down on transparency: labeling AI-generated stories, disclosing editorial processes, and inviting reader scrutiny.

Definitions:

Deepfake: AI-generated audio or video designed to mimic real people, often used to mislead or deceive. In news, deepfakes can create synthetic interviews or “eyewitness” accounts.

Synthetic media: Content (text, audio, images) produced with generative AI, sometimes indistinguishable from authentic reporting. The challenge is separating storytelling from simulation.

The economics of automated journalism

Cost, scale, and the end of the newsroom as we knew it

AI content generators aren’t just tech novelties—they’re economic disruptors. According to Personate.ai, 2025, companies adopting automated workflows report up to 70% cost savings on routine coverage. Revenue models are shifting: fewer salaried reporters, more “editor-curators,” and a surge in custom, subscription-driven news products.

| Industry Sector | AI Adoption Rate (2025) | Key Insights |
|---|---|---|
| Financial Services | 92% | Real-time market updates |
| Technology | 88% | Product news, trend analysis |
| Healthcare | 79% | Medical updates (non-clinical) |
| Media/Publishing | 95% | Breaking coverage, analytics |

Table 5: AI content generator adoption by sector

Source: Original analysis based on Personate.ai and Quidgest, 2025

Who wins and who loses in the AI news economy?

Automation isn’t a blanket job killer, but it is a reshaper. Routine roles—copy editors, basic reporters—are most threatened. Yet, new opportunities are emerging in data analysis, AI prompt design, verification, and trend forecasting.

- AI workflow designers

- Editorial auditors

- Fact-check automation engineers

- News data scientists

- AI ethics consultants

- Audience trend analysts

- Real-time news curators

- AI integration specialists

At the same time, hybrid teams—where humans oversee, correct, and contextualize machine output—have become the new standard in forward-thinking newsrooms.

Subscription, syndication, and the platform wars

AI content generators are fueling a new era of news syndication, letting organizations launch their own branded platforms with unprecedented speed. Here’s how news organizations are jumping in:

- Audit current workflows for automation opportunities.

- Choose a customizable AI content generator (e.g., newsnest.ai).

- Integrate AI into existing CMS and publishing pipelines.

- Train editorial teams on prompt engineering.

- Roll out pilot projects and gather feedback.

- Scale successful models across wider coverage areas.

For independent journalists and small publishers, these tools are democratizing access—but also raising the bar for originality and credibility.

Mastering the AI content generator: Actionable strategies

Choosing the right AI for your newsroom

Selecting an AI content generator is a high-stakes choice. Use this checklist:

- Evaluate output quality with real-world demos.

- Scrutinize bias detection and editorial control features.

- Check integration with your publishing stack.

- Audit security and data privacy protocols.

- Demand transparent reporting of data sources.

- Assess scalability and cost structure.

- Review technical support and community documentation.

Red flags to watch for:

- Inability to audit training data.

- Overly broad marketing claims of “perfect accuracy.”

- Lack of customization options.

- No human-in-the-loop workflows.

- Weak support for bias detection.

- Opaque pricing models.

- Poor customer reviews or support.

Pilot testing, combined with iterative editorial feedback, is the only surefire way to vet a platform’s real-world suitability.

How to train, prompt, and edit for results

A 10-step workflow for training and optimizing your AI for journalism:

- Define editorial guidelines and core values.

- Curate and vet training data relevant to your beat.

- Develop a library of prompt templates for common story types.

- Test outputs for coherence, accuracy, and tone.

- Continuously update dataset with new, high-quality inputs.

- Establish a multi-layered editorial review system.

- Audit for bias and flag problematic outputs.

- Solicit audience feedback and refine prompts accordingly.

- Benchmark performance against human-written baselines.

- Document all changes for transparency and accountability.

Common mistakes? Relying on default settings, skipping bias audits, and treating AI as a black box. Avoid these and you’ll harness—not fear—the power of automated news.

Future-proofing your content strategy

Staying ahead means embracing adaptability, transparency, and relentless learning. Newsrooms that failed to update their workflows have seen audience trust—and ad dollars—evaporate. In contrast, those investing in continuous training and community engagement are thriving.

Unconventional uses for AI content generators:

- Hyperlocal weather or traffic alerts.

- Automated event calendars.

- Instant translation of news into multiple languages.

- Data-driven investigative reporting.

- Personalized, interactive news quizzes.

- Real-time sports highlight summaries.

Adjacent realities: Misinformation, deepfakes, and the underground

Underground AI: The fake news factories

Beneath the surface of mainstream platforms, shadowy networks are weaponizing AI to flood the web with fake news. These operators run “content farms” that generate thousands of plausible-sounding stories daily, exploiting viral algorithms for profit or influence. The global impact: from swaying elections to inciting violence.

Deepfakes and synthetic news: The new battleground

Deepfake news—AI-generated audio, video, or text that mimics real sources—has become the latest front. Synthetic anchors now deliver news bulletins indistinguishable from human presenters. The implications? A world where seeing is no longer believing.

Definitions:

Deepfake news: News content (text, video, audio) that uses AI to create or alter reality, often intended to mislead.

Synthetic anchors: AI-generated newsreaders, that is, virtual presenters whose every word and gesture is scripted by algorithms.

A recent viral hoax involving a deepfake video of a world leader announcing fake policy changes caused panic before it was debunked by multiple outlets, highlighting just how convincing—and dangerous—these tools have become.

Fighting back: Tools and strategies for verification

To verify AI-generated news:

- Check for explicit AI-generated labels.

- Cross-reference story details against multiple sources.

- Use reverse image and audio search tools.

- Inspect for inconsistencies in style or metadata.

- Review source domains for credibility and history.

- Employ AI-detection software for text and media.

- Report and flag suspect content promptly.
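The cross-referencing step in the checklist above can be partly automated. The sketch below counts how many distinct domains report a claim and requires a minimum before treating it as corroborated. The function names and the default of two sources are assumptions for illustration, and distinct domains are only a proxy: syndicated copies of one wire story still trace back to a single source.

```python
from urllib.parse import urlparse


def independent_domains(urls) -> set:
    """Collect the distinct host names behind a list of source URLs."""
    domains = set()
    for url in urls:
        host = urlparse(url).hostname or ""   # None for strings that are not URLs
        domains.add(host.removeprefix("www."))
    domains.discard("")
    return domains


def is_corroborated(urls, min_sources: int = 2) -> bool:
    """True if the claim appears on at least `min_sources` distinct domains."""
    return len(independent_domains(urls)) >= min_sources
```

For example, two links to the same site count once, so a story that only one outlet is carrying would not pass the check however many of its pages mention it.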

Emerging technologies, such as blockchain-based verification and AI-powered forgery detectors, are arming journalists and readers alike. But in 2025, vigilance—both human and digital—is the ultimate safeguard.

The future of news: Human and AI in uneasy alliance

Collaboration, not competition: The hybrid newsroom

The most successful organizations don’t pit human versus machine—they blend their strengths. Editorial teams now function in three layers: AI drafts content, editors supervise and contextualize, and fact-checkers audit outputs before publication. Lee, an AI editor, says it best:

“Our AI writes the news fast. We make sure it’s worth reading.” — Lee, AI editor, 2025

What’s next? Predictions for AI content in 2025 and beyond

The next generation of AI-powered news generators will get smarter, faster, and—hopefully—more transparent. Expect:

- Seamless, multimodal content (text, video, audio—instantly).

- Real-time bias correction and transparency reports.

- Ultra-personalized, interest-based news feeds.

- Proactive misinformation detection.

- New legal standards for AI editorial accountability.

Should you trust your next headline?

The brutal truth: the AI content generator is here to stay, and your next headline could easily be written by code. But trust isn’t a binary; it’s earned through transparency, rigorous editorial standards, and relentless skepticism. As both creators and consumers, we must learn to read between the lines—machine-written or not. If you value truth, stay curious, demand accountability, and make peace with the uneasy alliance of human and AI in the newsrooms of today.

Sources

References cited in this article

- Reuters Institute (reutersinstitute.politics.ox.ac.uk)

- Personate.ai (blog.personate.ai)

- Pearl Lemon (pearllemon.com)

- Quidgest (quidgest.com)

- Toxigon (toxigon.com)

- Grammarly AI History (grammarly.com)

- Electropages (electropages.com)

- AI Toolr Timeline (ai-toolr.com)

- IDC/Microsoft (blogs.microsoft.com)

- AI Magazine (aimagazine.com)

- Exadel (exadel.com)

- MDPI (mdpi.com)

- IBM (ibm.com)

- Columbia Journalism Review (cjr.org)

- Partnership on AI (partnershiponai.org)

- Brightspot (brightspot.com)

- Wise Digital Partners (wisedigitalpartners.com)

- Makebot.ai (makebot.ai)

- Forbes (forbes.com)

- LinkedIn (linkedin.com)

- TechPilot.ai (techpilot.ai)

- Venngage (venngage.com)

- Pageon.ai (pageon.ai)

- ONA Resources (journalists.org)

- AP (ap.org)

- Pugpig (pugpig.com)

- Built In (builtin.com)

- Newstex (newstex.com)

- Red Line Project (redlineproject.news)

- Lockton (global.lockton.com)

- Taylor Amarel (tayloramarel.com)

- Scientific Reports (nature.com)

- arXiv (arxiv.org)

- UCL News (ucl.ac.uk)

- MIT Sloan (mitsloanedtech.mit.edu)

- TechXplore (techxplore.com)

- KPMG (kpmg.com)

- Trusting News (trustingnews.org)

- DataIntelo (dataintelo.com)

- Wiley AI Magazine (onlinelibrary.wiley.com)
