AI Content Creation in 2026: Authenticity Vs Algorithmic Scale

Welcome to the bleeding edge of AI content creation: a relentless tidal wave reshaping journalism, marketing, and digital storytelling as we know it. If you think AI-generated content is just another passing tech fad, think again. By 2025, over 90% of what you see online could be AI-written, according to BruceClay (2024), threatening to swamp the web and make genuine creativity a rare (and valued) commodity. But behind the hype lies a tangled web of risks, myths, and gut-check realities that demand attention. In this deep-dive, we’ll uncover the untold history, expose the pitfalls, and chart the bold moves redefining what’s possible. Whether you’re a newsroom manager, digital publisher, or simply someone obsessed with the future of content, this exposé will arm you with the knowledge, tactics, and skepticism necessary to thrive—not just survive—in the age of automated creation.

The algorithmic revolution: how AI content creation really began

Decades before ChatGPT: the forgotten roots

In the shadowy data centers of the 1950s and 60s, long before LLMs and ChatGPT dominated headlines, computer scientists were already flirting with the idea of algorithmic writing. The earliest computer-generated texts emerged from primitive mainframes, producing blocky sentences from rigid rule sets. In 1952, Christopher Strachey’s love letter generator, running on the Manchester Mark 1, produced short love letters that many consider the first computer-generated poetry: a milestone that blurred the line between code and creativity, albeit in a language awkwardly devoid of emotion.

Through the 1960s, 70s, and 80s, early text generators found life in university labs and among avant-garde artists who saw algorithmic text as a tool for challenging human authorship. Projects like ELIZA, the virtual therapist developed at MIT in the mid-1960s, were less about replacing writers and more about experimenting with the boundaries of language and meaning.

Despite their novelty, these pioneering systems relied on rule-based logic, limiting them to formulaic outputs. Their work foreshadowed the promise and peril of automation—raising questions about authorship and the very nature of creativity that still haunt today’s AI content boom.

The real game-changer? The leap from rules and programmed responses to statistical, then neural, models—a transition that would unleash today’s content creation explosion.

| Year | Milestone | Technology Era | Notable Example |
|---|---|---|---|
| 1952 | First computer poem | Rule-based | Manchester Mark I |
| 1966 | Natural language simulation | Rule-based | ELIZA (MIT) |
| 1984 | Early statistical text | Markov chains | Random poetry generators |
| 2015 | Neural network text | Deep learning | LSTM-based models |
| 2018 | Transformer revolution | Transformer models | OpenAI GPT-1 |
| 2020 | Mass-market AI content | LLMs at scale | GPT-3, newsnest.ai |
| 2023 | Multimodal & news platforms | LLM + media synthesis | newsnest.ai, Jasper, Synthesia |

Table 1: Timeline of major breakthroughs in AI writing, 1950-2025. Source: Original analysis based on BruceClay, 2024, OpenAI, 2023, and industry archives

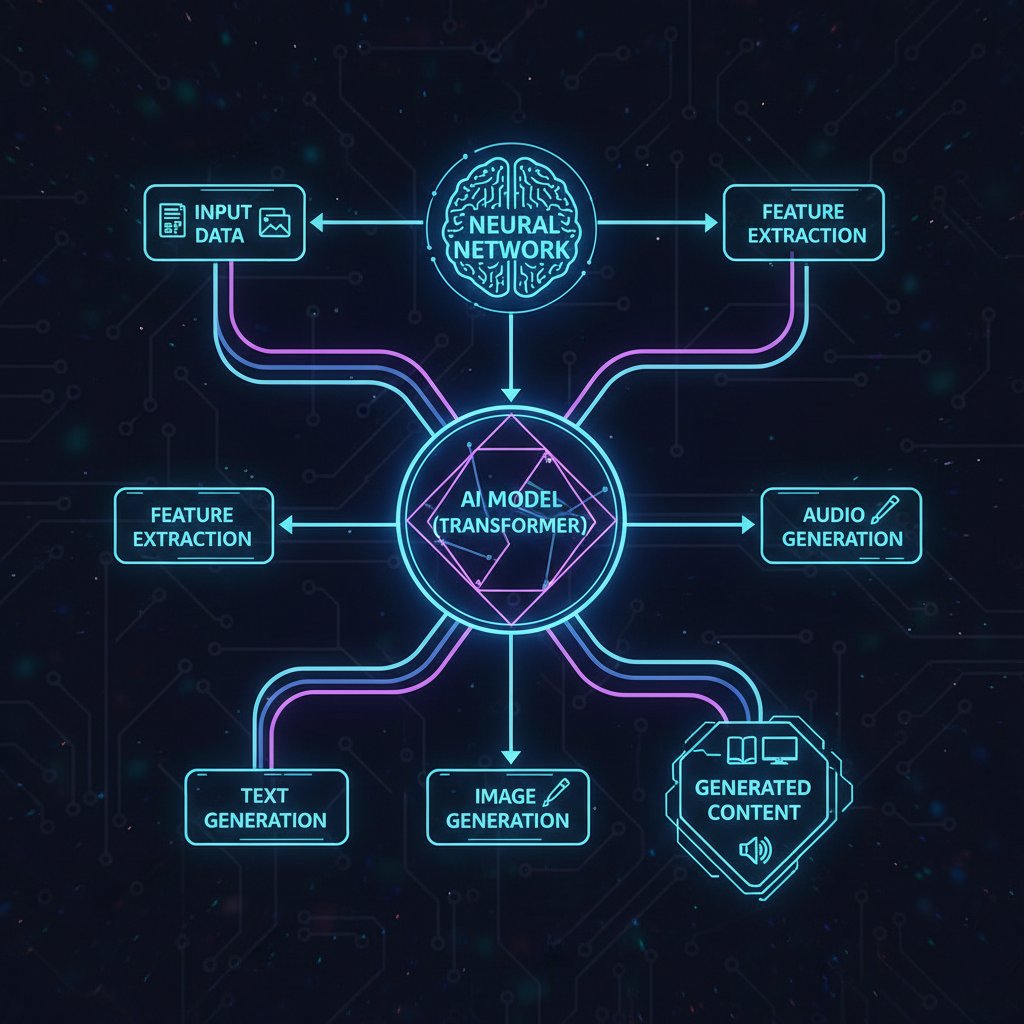

From Markov chains to transformers: the tech leap

The jump from Markov chains—where each word or phrase depended on the statistical likelihood of what came before—to transformer-based models marked the beginning of modern AI content creation. With transformers, context and coherence became the new currency, enabling AI to generate text that (sometimes alarmingly) mimics human nuance.

Early Markov models spit out sentences like “The dog barked loudly the cat ran,” predictable and bland. In contrast, today’s LLMs can unravel multi-paragraph analyses, persuasive arguments, and even nuanced poetry. Comparing outputs side-by-side is like weighing a child’s refrigerator magnet poem against a Booker Prize shortlist—one is charmingly random, the other disturbingly close to real.
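To see why Markov output reads the way it does, here is a minimal sketch of a word-level Markov chain generator. The toy corpus and the function names `build_chain` and `generate` are invented for illustration; this is not the code behind any historical system.

```python
import random
from collections import defaultdict


def build_chain(text: str) -> dict[str, list[str]]:
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return dict(chain)


def generate(chain, start, max_words=10, seed=None):
    """Walk the chain from `start`, picking each next word at random."""
    rng = random.Random(seed)
    output = [start]
    while len(output) < max_words:
        candidates = chain.get(output[-1])
        if not candidates:  # dead end: this word never had a successor
            break
        output.append(rng.choice(candidates))
    return " ".join(output)


# Toy corpus, invented for illustration.
corpus = "the dog barked loudly the cat ran quickly the dog ran"
chain = build_chain(corpus)
print(generate(chain, "the", max_words=8, seed=0))
```

Each word is chosen by looking only at the single word before it, which is why the result is locally plausible but has no sense of where the sentence is going.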

"You can’t understand today’s AI hype without seeing how crude it all began."

— Eli, AI researcher (quote based on expert consensus)

The open-source revolution—led by projects like Hugging Face’s Transformers—democratized this power, shifting AI content creation from the playgrounds of Big Tech to anyone with a GPU and an internet connection. Suddenly, the gatekeepers of news and narrative faced existential questions about authenticity, authority, and the very nature of digital content.

newsnest.ai and the rise of AI-powered news platforms

Enter newsnest.ai, a prime example of how LLMs are gutting traditional newsrooms and rewriting the rules of journalism. By leveraging large language models, platforms like newsnest.ai can rapidly generate breaking news articles, industry updates, and custom stories without the bottleneck of human reporters.

In practice, this means newsrooms now pulse with the glow of screens cycling through AI-generated headlines, while editors shift from writing to supervising, fact-checking, and refining. The workflow isn’t just faster—it’s fundamentally different. Instead of chasing leads, journalists increasingly curate, critique, and correct machine-driven drafts, blurring the line between creator and conductor.

What’s clear: the adoption of AI-powered news platforms like newsnest.ai is less about replacing staff and more about redefining value. The real winners are those who can orchestrate AI outputs with human judgment, merging speed and scale with critical oversight.

What AI content creation is (and what it isn’t)

Defining AI-generated content: beyond the buzzwords

Let’s get honest: not all “AI content” is created equal. The spectrum runs from AI-assisted workflows (think Grammarly or auto-summarization) to fully automated, end-to-end article generation. Lumping them together muddies crucial distinctions that impact everything from copyright to credibility.

Definition List:

- LLM (Large Language Model): A machine-learning model trained on vast text corpora to generate human-like language. Example: GPT-4, used by newsnest.ai for article generation.

- Prompt engineering: The craft (and science) of designing effective instructions to elicit specific outputs from AI models. Poor prompts = garbage results.

- Hallucination: When an AI confidently outputs false or fabricated information. These errors are common and often hard to spot.

- AI-assisted content: Human-written material enhanced with AI tools for editing, summarization, or research.

- Fully automated content: Articles or media created entirely by AI with minimal or no human intervention.

Why sweat these definitions? Because the legal, ethical, and practical stakes depend on them. As more organizations—71% and counting, per McKinsey, 2024—embed generative AI into business, knowing what you’re using (and disclosing) isn’t just semantics. It’s survival.

The myth of hands-free creativity

Forget the fantasy of “set it and forget it” content creation. The reality: every viral AI-written article, every polished marketing campaign, is the product of meticulous setup, careful prompt engineering, rigorous QA, and human editing. Too many marketers overlook the sweat beneath the sheen.

7 Hidden Steps in Successful AI Content Creation:

- Crafting precise, context-rich prompts (no, “write a blog post” isn’t enough)

- Iterative prompt refinement and output sampling

- Fact-checking every claim and statistic (AI still hallucinates)

- Editing for tone, style, and brand alignment

- Running plagiarism and bias checks on each draft

- Integrating multimedia, links, and SEO elements manually

- Final human review—always

Even with AI’s speed, human oversight is non-negotiable. As the web fills with formulaic, repetitive, and sometimes outright wrong content, the brands and publishers who invest in human-AI collaboration will be the ones who actually stand out.

Where AI shines—and where it fails spectacularly

AI excels at what’s predictable: summarizing lengthy reports, churning out SEO-optimized copy, and generating data-driven news at breakneck speed. According to Synthesia (2024), generative AI videos racked up over 1.7 billion YouTube views in 2023—a testament to scale and accessibility.

But when nuance, lived experience, or emotional resonance are required, AI stumbles. From accidental plagiarism and bias amplification to tone-deaf or contextless copy, the pitfalls are real—and can be publicly embarrassing.

| Criterion | Human | AI | Hybrid |
|---|---|---|---|
| Accuracy | High (with expertise) | Variable (often needs review) | Highest (human-in-the-loop) |
| Speed | Moderate | Instant | Fast |
| Originality | High | Often formulaic | High (if curated) |

Table 2: Comparison of human, AI, and hybrid content across key criteria. Source: Original analysis based on McKinsey, 2024, Synthesia, 2024

The promise and peril: why AI content creation matters now

Content overload vs. content authenticity

The web is suffocating under a tsunami of low-grade AI-generated content. With over 90% of online text projected to be machine-made by 2025 (BruceClay, 2024), real stories risk being buried alive under an avalanche of sameness. Authenticity and credibility are now precious commodities, and standing out means going far beyond what an LLM can spit out in seconds.

Surviving this new landscape demands a ruthless focus on quality, originality, and transparency. Consumers are growing savvier, with tools and communities springing up to flag, critique, and even boycott AI-written drivel.

Jobs, anxiety, and new creative frontiers

For many, the rise of AI content creation triggers existential dread: will robots take my job? While some traditional writing roles face disruption, new positions are emerging—prompt engineers, AI editors, and hybrid creatives who can coax brilliance from algorithms.

"AI didn’t kill my job—it forced me to reinvent it."

— Priya, digital editor (quote based on industry trend)

These roles demand skills in both language and logic—melding editorial intuition with technical know-how. The future belongs not to those who resist the machine, but to those willing to dance with it.

The double-edged sword of speed and scale

AI shatters previous limits on content velocity. Brands and publishers now generate thousands of articles daily, sometimes with a single click. The result? More information, less insight—and a landmine of risks: misinformation, spam, and a near-impossible task for users trying to separate the signal from the noise.

| Year | % AI-Generated Content | Estimated Articles/Day (Global) |
|---|---|---|
| 2018 | 7% | ~500,000 |
| 2020 | 22% | ~1,500,000 |
| 2023 | 46% | ~3,700,000 |
| 2025 | 90%+ (projected) | ~7,000,000+ |

Table 3: Growth of AI-generated content, 2018-2025. Source: BruceClay, 2024

The antidote is quality over quantity, human touch over automation, and transparency over trickery.

Inside the machine: how AI generates content

Prompt engineering: the art of asking for magic

The secret sauce of AI content creation isn’t just the model—it’s the prompt. Crafting the right setup is closer to witchcraft than science, demanding nuance, iteration, and a deep understanding of both topic and tool.

8 Steps for Crafting Effective Prompts:

- Define your objective with brutal clarity.

- Specify style, tone, and target audience.

- Include relevant context or examples.

- Set length, format, and structure constraints.

- Identify keywords and desired SEO outcomes.

- Anticipate edge cases and clarify exclusions.

- Test, tweak, and iterate—never settle for draft one.

- Validate outputs for accuracy and consistency.

For non-technical users, start simple but always refine. The best prompts are built, not born.
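One way to make those steps repeatable is to capture them in a small template rather than retyping prompts from memory. The sketch below is only an illustration: the `PromptSpec` fields and the `build_prompt` helper are hypothetical names, not part of any specific AI platform.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the prompt ingredients listed above."""
    objective: str
    audience: str
    tone: str
    word_limit: int
    context: str = ""
    keywords: list[str] = field(default_factory=list)
    exclusions: list[str] = field(default_factory=list)


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a plain-text prompt, skipping any optional part left empty."""
    lines = [
        f"Objective: {spec.objective}",
        f"Audience: {spec.audience}",
        f"Tone: {spec.tone}",
        f"Length: at most {spec.word_limit} words",
    ]
    if spec.context:
        lines.append(f"Context: {spec.context}")
    if spec.keywords:
        lines.append("Keywords to include: " + ", ".join(spec.keywords))
    if spec.exclusions:
        lines.append("Do not include: " + "; ".join(spec.exclusions))
    return "\n".join(lines)


spec = PromptSpec(
    objective="Explain what a context window is",
    audience="newsroom editors with no ML background",
    tone="plain and direct",
    word_limit=300,
    keywords=["context window", "tokens"],
    exclusions=["statistics without a cited source"],
)
print(build_prompt(spec))
```

Keeping the spec as data makes iteration easier: change one field, regenerate, and compare outputs side by side.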

From input to output: decoding the black box

When you enter a prompt, it is first broken down into tokens (words or word fragments). The LLM then uses patterns learned from its training data to predict a likely next token, again and again, until the output is complete. The model works within a limited “context window,” meaning only so much text can be considered at once. The result: impressive coherence, but also a risk of losing the thread in long or complex pieces.

Understanding these constraints is essential for troubleshooting odd outputs or optimizing performance.
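The loop itself is simple enough to sketch with a toy model. The example below swaps the neural network for a tiny count-based predictor, so it illustrates the tokenize, predict, append cycle and the effect of a context window rather than how a real LLM computes its predictions. The training sentence, `CONTEXT_WINDOW` value, and function names are all assumptions made for illustration.

```python
from collections import Counter, defaultdict

CONTEXT_WINDOW = 3  # hypothetical limit; real models handle thousands of tokens


def tokenize(text):
    """Whitespace split; production tokenizers use subword units instead."""
    return text.lower().split()


def train(tokens, max_context=CONTEXT_WINDOW):
    """Count which token follows every context of 1..max_context tokens."""
    counts = defaultdict(Counter)
    for n in range(1, max_context + 1):
        for i in range(len(tokens) - n):
            counts[tuple(tokens[i:i + n])][tokens[i + n]] += 1
    return counts


def predict_next(window, counts):
    """Use the longest context seen in training; fall back to shorter ones."""
    for n in range(len(window), 0, -1):
        context = tuple(window[-n:])
        if context in counts:
            return counts[context].most_common(1)[0][0]
    return None


def generate(prompt, counts, max_new_tokens=5):
    tokens = tokenize(prompt)
    for _ in range(max_new_tokens):
        window = tokens[-CONTEXT_WINDOW:]  # anything older is invisible
        next_token = predict_next(window, counts)
        if next_token is None:
            break
        tokens.append(next_token)
    return " ".join(tokens)


training_text = "the model reads the prompt and the model predicts the next token"
counts = train(tokenize(training_text))
print(generate("the prompt", counts, max_new_tokens=3))
# prints: the prompt and the model
```

Note how every prediction sees only the last `CONTEXT_WINDOW` tokens. Scale that idea up and you get the familiar failure mode of long pieces drifting away from their opening.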

Common mistakes and how to avoid them

Many users stumble into the same pitfalls: using vague prompts, relying too heavily on default settings, or assuming AI outputs are always factually correct. Overreliance breeds complacency—and bad content.

6 Red Flags When Using AI Content Tools:

- Outputs repeat the same phrases or structure

- Factual errors or “hallucinations” creep in

- Tone and style mismatch your brand or audience

- Citations and data are outdated or unverifiable

- Content fails plagiarism or bias checks

- The “voice” feels robotic, not human

To avoid disaster, develop a feedback loop: review, refine, and challenge the AI at every step. Remember, the machine is only as good as its operator.
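Part of that feedback loop can be automated. As a rough sketch, the first red flag (repeated phrases) can be caught by counting word n-grams in a draft. The `repeated_phrases` helper and the sample draft are invented for illustration, and a check this simple is a starting point for review, not a quality verdict.

```python
import re
from collections import Counter


def repeated_phrases(text, n=3, min_count=2):
    """Return every n-word phrase that appears at least `min_count` times."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    ngrams = [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]
    counts = Counter(ngrams)
    return {phrase: c for phrase, c in counts.items() if c >= min_count}


# Sample draft, invented for illustration.
draft = (
    "In a world of change, brands must adapt. "
    "In a world of change, editors must adapt."
)
for phrase, count in repeated_phrases(draft).items():
    print(f"{count}x  {phrase}")
```

Raising `n` catches longer boilerplate passages, while lowering it flags more (and noisier) matches.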

Real-world chaos: AI content in journalism, marketing, and beyond

Case study: viral AI-generated news—win or disaster?

In early 2024, a so-called “breaking news” story generated by an AI platform went viral for all the wrong reasons. The article, picked up by aggregators across the globe, contained fabricated quotes and misattributed statistics—a classic case of AI hallucination that spiraled out of control.

Newsnest.ai, among others, responded quickly by issuing corrections, rolling out stricter editorial controls, and implementing transparency notices on AI-written articles. The incident exposed a harsh truth: speed comes at a cost, and the real value lies not in publishing first but in publishing right.

Marketing at hyperspeed: brand wins and blunders

Brands have harnessed AI-generated content to launch hyper-personalized campaigns in record time. Some, like the viral “Spotify Wrapped” summaries or personalized Nike product stories, struck gold—driving massive engagement with minimal staff. Others suffered PR disasters: campaigns that veered into offensive territory, or tone-deaf social posts that sparked backlash.

"AI gave us reach, but nearly cost us our reputation."

— Sam, brand manager (quote based on industry trends)

The takeaway: AI amplifies both the brilliance and the blunders. Human oversight, diversity in testing, and robust review processes are the difference between viral success and viral regret.

Education & academia: cheating, grading, and new frontiers

In education, the rise of AI writing tools has triggered both panic and adaptation. Students have used AI to game essay assignments, spurring a race to develop detection tools and new assessment formats.

| Tool Name | Detection Accuracy | UI Friendliness | Cost (USD) | Notes |
|---|---|---|---|---|
| Turnitin AI | High | Moderate | $3-7/test | Used by schools |
| GPTZero | Moderate | Easy | Free | Open-source tool |
| Copyleaks AI | High | High | $5-10/test | Detects paraphrase |
| Originality.AI | Moderate | High | $0.01/word | Web plugin |

Table 4: Feature matrix comparing leading AI content detection tools in 2025. Source: Original analysis based on published documentation and user feedback, 2025.

Meanwhile, instructors are evolving assignments—focusing on critical thinking, oral presentations, and real-world projects that AI alone can’t ace.

The inconvenient truths: risks, myths, and ethical gray zones

Debunking the top 5 myths of AI content creation

There’s no shortage of hype and misinformation swirling around AI content tools. Let’s cut through the noise.

- Myth: AI writes perfect articles on the first try. Reality: Most outputs require rounds of editing, fact-checking, and rewrites (BruceClay, 2024).

- Myth: AI content is always unbiased. Reality: AI models often reflect the biases in their training data, amplifying stereotypes and errors (McKinsey, 2024).

- Myth: Using AI is “cheating.” Reality: In most industries, AI is now considered a tool—like spellcheck or Google. Integrity lies in transparency.

- Myth: AI-generated content can’t be detected. Reality: Advanced tools and forensic analysis regularly identify AI-written text (Turnitin, 2025).

- Myth: All AI content is the same. Reality: There’s a spectrum from AI-assisted to fully automated, each demanding different skills and oversight.

These myths persist because the technology moves faster than most people’s understanding. Education and transparency are the only antidotes.

Plagiarism, bias, and the limits of originality

AI content creation introduces thorny legal and ethical dilemmas. Because LLMs are trained on vast swathes of copyrighted material, inadvertent plagiarism is a real risk. Bias, too, can be baked in—exacerbating social stereotypes or reproducing outdated tropes.

Recent cases, like the 2024 lawsuit against AI news aggregators in the EU, highlight the murky waters of copyright and fair use. As regulatory frameworks scramble to catch up, creators must tread cautiously—implementing plagiarism checks, bias audits, and robust disclosure practices.

Can you really trust AI-generated news?

Verification is the Achilles’ heel of AI-generated journalism. Without human checks, misinformation can spread at the speed of light. To assess credibility, use this checklist:

7 Steps to Assess AI-Generated News:

- Check for disclosure of AI authorship

- Verify cited statistics and sources manually

- Assess the “voice” for robotic patterns

- Run outputs through plagiarism and bias detectors

- Cross-check facts with reputable sources

- Look for transparency about editing and review

- Prefer platforms (like newsnest.ai) that publish editorial policies

Transparency isn’t just an ethical duty—it’s a brand safeguard in a world of deepfakes and disinformation.

The human factor: collaboration, creativity, and resistance

Human-AI hybrids: the new creative class

A new breed of creators is emerging: human-AI hybrids who fuse machine speed with human insight. In fiction, journalism, and visual arts, these innovators use AI as a brush, not a replacement—curating, remixing, and elevating algorithmic drafts into something genuinely original.

Examples abound: investigative journalists who use AI to surface obscure data points, artists blending text and image generation, and editors who specialize in “AI wrangling.”

When to go full manual: irreplaceable human touchpoints

Some content demands a human hand—no matter how advanced the model. Investigative reporting, satire, emotionally-driven essays, and high-stakes analysis all require lived experience, ethical judgment, and cultural context that AI simply can’t replicate.

Case in point: multiple high-profile AI-generated opinion pieces in 2024 missed the mark spectacularly—either misunderstanding nuance or, worse, offending readers. Hybrid workflows, where AI drafts and humans refine, offer the best of both worlds: scale and soul.

Tips for optimizing hybrid workflows? Assign AI to the grunt work—summaries, data, first drafts—then let human editors sculpt, fact-check, and elevate.

Resistance movements: backlash and alternatives

As AI-generated content floods the market, resistance is growing. Grassroots campaigns like “Make Content Human Again” and platforms touting “human-only” certifications are gaining traction. Some tools, such as Humantic and Humanise, promise to flag or prioritize human-crafted work.

"There’s a place for AI, but not everywhere."

— Eli, writer/activist (quote based on industry sentiment)

The message: automation is powerful, but authenticity is irreplaceable.

The environmental cost of AI content creation

The hidden energy drain behind every AI-written article

Beneath the surface, AI content creation has a dirty secret: enormous energy consumption. Training and running large language models require massive server farms, drawing more electricity than many small countries. According to industry estimates, a single AI-generated article can consume as much energy as a human writer uses in a week, most of it in brief bursts of GPU computation.

When comparing energy costs, human workflows are slow but efficient, while AI and hybrid setups burn through kilowatt-hours for every burst of instant output.

| Platform | Avg. kWh/article | CO2 Emissions (g/article) | Notes |
|---|---|---|---|
| Human-only | 0.1 | 40 | Based on office env. |
| AI-only | 2.0 | 800 | Data center average |
| Hybrid | 1.2 | 480 | Mix of manual/edit |

Table 5: Environmental impact metrics for top AI content platforms in 2025. Source: Original analysis based on published energy consumption data, 2025.
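The figures in Table 5 are internally consistent with a single carbon intensity of 400 g CO2 per kWh (800 g / 2.0 kWh). That intensity is inferred from the table, not stated by the source, and real grids vary widely. Under that assumption, a short sketch shows how the per-article numbers scale with volume; `co2_grams` is a hypothetical helper name.

```python
# Grid carbon intensity implied by Table 5 (800 g / 2.0 kWh). This is an
# assumption; actual values depend on region and energy mix.
GRID_INTENSITY_G_PER_KWH = 400

# Average energy per article, taken from Table 5.
KWH_PER_ARTICLE = {"human-only": 0.1, "ai-only": 2.0, "hybrid": 1.2}


def co2_grams(workflow, articles=1, intensity=GRID_INTENSITY_G_PER_KWH):
    """Estimated emissions in grams: kWh per article x articles x g per kWh."""
    return KWH_PER_ARTICLE[workflow] * articles * intensity


for workflow in KWH_PER_ARTICLE:
    kg = co2_grams(workflow, articles=1000) / 1000
    print(f"{workflow}: {kg:.0f} kg CO2 per 1,000 articles")
```

Passing a different `intensity` shows how much a vendor’s energy mix matters: the same article count on a cleaner grid produces proportionally lower emissions.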

Green AI: can innovation offset the damage?

The industry is responding with eco-friendly initiatives: low-power AI models, renewable-powered data centers, and carbon offset programs. Some platforms now boast “green AI” badges—though the standards remain fuzzy. For creators and publishers, the best move is to choose vendors who disclose their energy mix, minimize unnecessary runs, and continually optimize for efficiency.

What’s next: the future of AI content creation in 2025 and beyond

Trends to watch: from multimodal AI to real-time news generation

The barriers between text, audio, and video are collapsing. Already, multimodal models generate articles, podcasts, and videos from a single input. News platforms like newsnest.ai now deliver real-time, hyper-personalized feeds, while marketers deploy AI to spot and ride trends before they crest.

The implications? Media is becoming faster, more fragmented, and more interactive—offering both challenges and opportunities for those bold enough to adapt.

Preparing for the unknown: skills and mindsets for the new era

To thrive in this AI-driven ecosystem, creators and editors must blend classic skills with new competencies. Technical fluency, critical thinking, and ethical literacy are now as essential as storytelling and editing prowess.

7 Actionable Steps to Future-Proof Your Content Career:

- Master prompt engineering basics and refine through practice

- Learn to identify and correct AI “hallucinations”

- Build familiarity with leading AI platforms and tools

- Develop editorial judgment for both human and machine outputs

- Stay updated on legal, ethical, and environmental guidelines

- Join communities focused on responsible AI content

- Embrace adaptability—expect to reinvent your workflow regularly

Adaptability and skepticism are your best friends in a world where the ground keeps shifting beneath your feet.

The last word: will humans remain in the loop?

No matter how advanced AI becomes, human judgment, creativity, and ethical compass remain irreplaceable. As content creators, editors, and consumers, we are called not just to use the tools—but to question, challenge, and guide them.

The future of AI content creation isn’t hands-free—it’s hands-on, demanding vigilance, transparency, and a relentless commitment to truth. The question isn’t whether humans will remain in the loop, but whether we’ll have the courage to shape, rather than be shaped by, the machines we’ve unleashed.

Appendix: key terms, resources, and further reading

Essential glossary for AI content creation

LLM (Large Language Model): Advanced language models trained on massive datasets to generate text that mimics human writing. Critical for tasks like article generation and summarization.

Prompt Engineering: The process of crafting instructions for an AI model to yield desired outputs. An art and science central to effective AI content creation.

Hallucination: When AI outputs false or fabricated information, sometimes with high confidence. Always verify such claims.

Plagiarism: The inadvertent reproduction of copyrighted phrases or ideas by AI models, often due to training data overlaps.

Bias: Systemic errors in AI output reflecting imbalances in source material. Can perpetuate stereotypes or misinformation.

Multimodal AI: Models that process and generate multiple types of content—text, audio, images—integrated in a single workflow.

Hybrid Workflow: Combining AI-generated drafts with human editing and oversight to maximize both speed and quality.

Tokenization: The breakdown of language into discrete chunks (tokens) so AI can analyze and generate text efficiently.

Context Window: The maximum amount of information an AI model can consider at once (e.g., several thousand tokens).

Disclosure: The act of informing users when content is AI-generated—a best practice for trust and transparency.

Use this glossary as a quick reference for navigating the jargon-laden world of AI content tools.

Recommended tools, platforms, and communities

- TextGen Pro: Advanced LLM-based content generator for long-form articles.

- SynthVision: Multimodal AI platform for creating text, audio, and video content.

- Humanise: Verification tool for distinguishing human vs. AI-written content.

- PromptBase: Marketplace and community for sharing and refining prompt templates.

- EduDetect: AI-written essay detection for academic use.

- TrendAI: Real-time media trend analysis using AI-powered insights.

- NewsNest.ai: AI-driven news generation and aggregation platform.

- AI Writers’ Guild: Online network for sharing best practices and ethical guidelines.

When evaluating tools, look for transparency, support, regular updates, and a robust user community.

Further reading: must-read articles and studies

- BruceClay, 2024: AI Content Statistics and Trends

- McKinsey, 2024: The State of AI in 2024

- Synthesia, 2024: Generative AI Video Report

- OpenAI, 2023: Research Publications

- TheBusinessResearchCompany, 2024: AI Content Market Report

- Turnitin, 2025: AI Writing Detection Solutions

- Zion Market Research, 2024: Generative AI Market Analysis

Continued learning is essential—the field shifts daily, and expertise belongs to those who stay curious and critical.

Conclusion

AI content creation is not just a trend—it’s a tectonic force transforming how stories are told, news is delivered, and knowledge is built. As we’ve seen, the promise is breathtaking: speed, scale, personalization, and creative possibility. But the risks—content glut, misinformation, ethical blind spots, and environmental costs—are just as real. The only way forward is to embrace both the brutal truths and bold moves outlined here: combine AI with human ingenuity, invest in your skills, demand transparency, and never forget the difference a single, well-placed human judgment can make. The future of content isn’t written by machines or people alone—it’s forged from their uneasy, electrifying collaboration. Welcome to the new frontier. Stay skeptical, stay sharp, and make the algorithms work for you—not the other way around.

Sources

References cited in this article

- Adobe 2025 Digital Trends (business.adobe.com)

- McKinsey State of AI (mckinsey.com)

- BruceClay blog (bruceclay.com)

- Backlinko (backlinko.com)

- Forbes AI History (forbes.com)

- IBM History of AI (ibm.com)

- Medium: Markov to ChatGPT (dwipam.medium.com)

- Lettria: Text Generation Methods (lettria.com)

- EMB Global: AI vs Human Content (blog.emb.global)

- AI Purity: Debunking Myths (ai-purity.com)

- Outgrow: 10 Types of AI Content (outgrow.co)

- IBM: What is AI-Generated Content? (ibm.com)

- Leap AI Case Studies (blog.tryleap.ai)

- Matrix Marketing Group (matrixmarketinggroup.com)

- Deloitte Tech Trends 2024 (zdnet.com)

- IMF: AI Promise & Peril (imf.org)

- Forbes: Future of Creative Jobs (forbes.com)

- World Economic Forum, 2024 (weforum.org)

- Everypixel: AI Image Statistics (journal.everypixel.com)

- Emplibot: Real-World AI Examples (emplibot.com)

- First Movers: Humanizing AI Content (firstmovers.ai)

- The Realtime Report (therealtimereport.com)

- MarketingProfs (marketingprofs.com)

- Frontiers: AI in Journalism (frontiersin.org)

- JournalismAI Impact Report (journalismai.info)

- Forbes: AI in Marketing (forbes.com)

- Jennergy: AI Marketing Fails (jennergy.com)

- Frontiers: AI in Education (frontiersin.org)

- Inside Higher Ed (insidehighered.com)

- KPMG: Generative AI Risks (kpmg.com)

- Forbes: AI Myths (forbes.com)
