Secure News Generation Software and the New Battle for Trust

It’s 2025, and the way news is created, secured, and distributed isn’t just evolving—it’s combusting. The digital pressroom is now a battleground for truth, and at the heart of this transformation lies secure news generation software powered by artificial intelligence. As more organizations swap clunky legacy systems for AI news generators like newsnest.ai, the promise is intoxicating: instant, high-quality articles at a fraction of the traditional cost, with personalization and scale that analog newsrooms can only dream of. But peel back the neon-lit surface, and darker questions emerge—about manipulation, data breaches, and who really controls the narrative. In a world where nearly 80% of organizations deploy AI for core functions (McKinsey, 2025), and over 1,200 unreliable AI news sites pollute the information stream (NewsGuard, 2025), “security” isn’t a checkbox—it’s the lifeline of public trust.

This is your unfiltered dossier on secure news generation software. Forget the glossy marketing and canned vendor pitches. Here you’ll find raw facts, real incidents, and expert insight into the security risks, hidden trade-offs, and culture war raging just beneath the AI news revolution. Whether you’re a newsroom manager, publisher, or simply a digital citizen concerned about the integrity of your daily headlines, buckle up. The truth about secure AI-powered news generation is far edgier—and more essential—than most realize.

The new frontier: Why secure news generation software matters in 2025

The explosive rise of AI-powered news

AI-generated news didn’t just sneak into the mainstream—it detonated. According to McKinsey’s 2025 global survey, a staggering 78% of organizations now use AI in at least one business process, with newsrooms at the bleeding edge. Over the past two years, publishers worldwide have flocked to solutions that promise instant content generation, multilingual publishing, and real-time analytics. Tools like newsnest.ai have become go-to platforms for organizations desperate to keep pace with relentless news cycles, cut production costs, and deliver hyper-personalized updates to their audiences.

Why the stampede? Traditional newsrooms face mounting pressure: shrinking budgets, audience fragmentation, and a tidal wave of misinformation. Integrating AI-powered news generators is less about chasing the latest tech and more about survival. These tools promise to automate the drudgery—transcriptions, summaries, even first drafts—so human journalists can chase deeper stories and investigative leads. As Maya, an AI ethics expert, notes:

"Security is more than encryption—it's about shaping trust." — Maya, AI ethics expert

The implication is clear: in 2025, security is not just about protecting data. It’s about protecting the very foundation of credible journalism.

The invisible threats behind the headlines

Of course, this digital transformation brings its own hazards. The most common vulnerabilities in news generation software aren’t just abstract technical risks—they’re active minefields. Data breaches, prompt injection attacks, algorithmic manipulation, and insider leaks have all become disturbingly routine. Security Navigator 2025 highlights a doubling in cyberattacks targeting AI-driven content platforms since 2023. Attackers exploit lax vendor standards, overconfident automation, and the sheer scale of AI operations to manipulate output or exfiltrate sensitive information.

| Year | Incident | Impact | Recovery Actions |

|---|---|---|---|

| 2023 | Prompt injection attack at a global news platform | Fake articles, loss of audience trust | Model retraining, new input validation |

| 2024 | Data breach exposing source identities | Threats to journalists, legal action | Incident disclosure, improved access control |

| 2024 | AI-generated misinformation campaign during elections | Public confusion, regulatory investigation | Content audit, partnership with fact-checkers |

| 2025 | Ransomware attack on automated news backend | Service outage, reputational harm | System overhaul, external audits |

Table 1: Summary of recent security incidents involving automated news tools

Source: Original analysis based on Security Navigator 2025, NewsGuard AI Tracking Center

Even so-called “secure” systems have been compromised. In 2023, a leading European publisher suffered a data leak that exposed confidential sources—ironically, because its trusted news generation software hadn’t been patched against a known vulnerability. The lesson: security is a moving target, and complacency is an open invitation for disaster.

What users actually want—and fear

So what’s keeping users up at night? Top concerns include not just the integrity of their news, but the privacy of their data, the potential for bias, and the specter of job displacement. According to a 2025 TechTarget survey, the main user demands for secure AI news tools are robust encryption, transparent audit logs, and the ability to verify the origin of every fact or quote.

But there are hidden benefits, too—perks that rarely make it into vendor decks:

- Lightning-fast incident response: Automated breach detection and response outpace human teams, catching threats before they escalate.

- Source protection: Secure tools anonymize contributors, reducing the risk of doxxing or reprisal.

- Tamper-proof archives: Immutable logs ensure stories can’t be quietly edited after publication.

- Real-time bias detection: Some platforms alert editors to skewed narratives or suspicious patterns as content is generated.

- Integrated compliance checks: Automated compliance with GDPR, digital news codes, and data sovereignty laws.

- Dynamic watermarking: Track and trace every version of an article to verify authenticity.

- Decentralized publishing: Distribute news across multiple nodes to resist censorship and DDoS attacks.

Yet, for all the technological firepower, the emotional toll of misinformation is profound. Readers and journalists alike report rising digital distrust, a fatigue born from the relentless churn of half-truths and algorithmic manipulation. Secure news generation software has the power to rebuild trust—but only if security is baked into the DNA, not bolted on as an afterthought.

Beyond encryption: Deconstructing what ‘secure’ really means for news generators

Encryption basics—and where they fail

Ask most vendors about security and you’ll hear the usual buzzwords: “end-to-end encryption,” “TLS 1.3,” “256-bit keys.” Encryption is foundational, but it’s not a panacea. News generation software typically employs encrypted data in transit (SSL/TLS) and at rest (AES-256 or similar), with user authentication layered on top. But here’s the rub: too often, attackers don’t bother brute-forcing the math—they target the implementation, the human error, or the interface.

Key security terms (and why they matter):

The process of encoding data so only authorized parties can access it. In news software, this protects stories and sources from snooping, but can’t prevent insider threats if credentials are compromised.

A security model that assumes no user or device is trustworthy by default—even inside the perimeter. Forces constant verification and tight access controls.

Running software in an isolated environment to prevent malicious code from escaping and contaminating the system. Useful for AI tools that process untrusted input.

Real-world breaches drive the point home. In early 2024, an encrypted news publishing platform was breached—not because the encryption failed, but because an admin account was compromised through social engineering. Encryption, while necessary, can’t fix weak passwords or unpatched software.

Data privacy by design: Not just a buzzword

“Privacy by design” means building privacy protections into the very architecture of software, not treating it as a compliance afterthought. In AI-powered news generation, this manifests as careful data minimization (collect only what’s needed), regular privacy impact assessments, and user consent baked into every workflow.

Unlike reactive privacy (patching leaks after they occur), proactive privacy anticipates vulnerabilities and neutralizes them at the blueprint stage. Automated tools now monitor every data access, flagging suspicious activity and anonymizing sensitive fields before content leaves the platform. The difference is night and day: systems built for privacy from the ground up are measurably harder to exploit, and inspire more user confidence.

Red flags: How to spot insecure news generation software

Wondering if your news automation platform is more sieve than safe? Here’s a 9-step guide to sniffing out insecurity:

- No third-party security audits: If you can’t see the latest security assessment, move on.

- Opaque data handling: Beware platforms that can’t explain how your data is stored and processed.

- Lack of audit logs: No logs, no accountability.

- Weak access controls: Generic admin accounts or password-only authentication are red flags.

- No user role separation: Editors and contributors should have distinct permissions.

- Absence of encryption at rest and in transit: Both are non-negotiable.

- Infrequent updates or patch notes: Stale software is an attacker’s playground.

- Overly broad vendor claims (“unhackable,” “perfect security”): Overconfidence masks gaps.

- No incident response plan: If they can’t tell you exactly what happens in a breach, run.

Common warning signs? Vague language around security, resistance to independent testing, or an inability to answer tough questions on compliance. As cybersecurity analyst Alex puts it:

"If you can’t audit it, you can’t trust it." — Alex, cybersecurity analyst

Auditability is not a luxury. It’s the difference between real security and security theater.

Debunking myths: The inconvenient truths about AI-powered news security

Myth #1: AI news generators can’t be hacked

Spoiler: they can, and they have. High-profile breaches include prompt injection attacks (where malicious input tricks the AI into generating fake or harmful content), supply chain exploits (tampering with third-party models or data), and credential theft. The aftermath? Corrupted news feeds, reputational damage, and even legal action.

| Platform | Vulnerability | Exploit Example | Mitigation |

|---|---|---|---|

| Cloud-based AI tool | Prompt injection | Model tricked into publishing fake stories | Input sanitization |

| Open-source CMS | Unpatched plugin | Unauthorized access to editorial backend | Regular updates, code reviews |

| Proprietary system | Credential reuse | Attacker logs in with leaked admin password | Two-factor authentication |

| Hybrid AI platform | Model manipulation | Adversarial input alters the tone of news output | Model retraining, robust testing |

Table 2: Attack vectors in news generation software, with platform-specific examples

Source: Original analysis based on Security Navigator 2025, TechTarget RSAC 2025

Myth #2: More security always means better news

It’s tempting to think that adding more security layers guarantees safer, higher-quality news. But there’s a dark side: excessive security can create silos, slow down publication, and stifle journalistic freedom. Over-securitization risks locking the very news it’s meant to protect behind walls so thick that transparency and accountability suffocate.

Striking the right balance means recognizing that real journalism thrives on both protection and openness. Locking journalists out of their own tools—or making it too cumbersome to verify and correct information—undermines the very purpose of news.

Myth #3: All secure news generation tools are equal

Not all news generators play by the same rules. Security standards and certifications vary widely, and the mere presence of an ISO badge on a website doesn’t guarantee airtight protection.

A widely used standard for internal controls over data security, but doesn’t guarantee end-to-end protection for AI-generated content.

The gold standard for information security management, focusing on comprehensive risk assessment and mitigation—but only as strong as its implementation.

Essential for platforms operating in or serving the EU, but doesn’t address technical loopholes or emerging AI-specific threats.

Many vendors leverage these certifications in marketing, glossing over the fact that compliance alone doesn’t equal security. Buyers need to look past the logos and demand proof: documented controls, transparent processes, and independent validation.

Inside the machine: How secure news generation software actually works

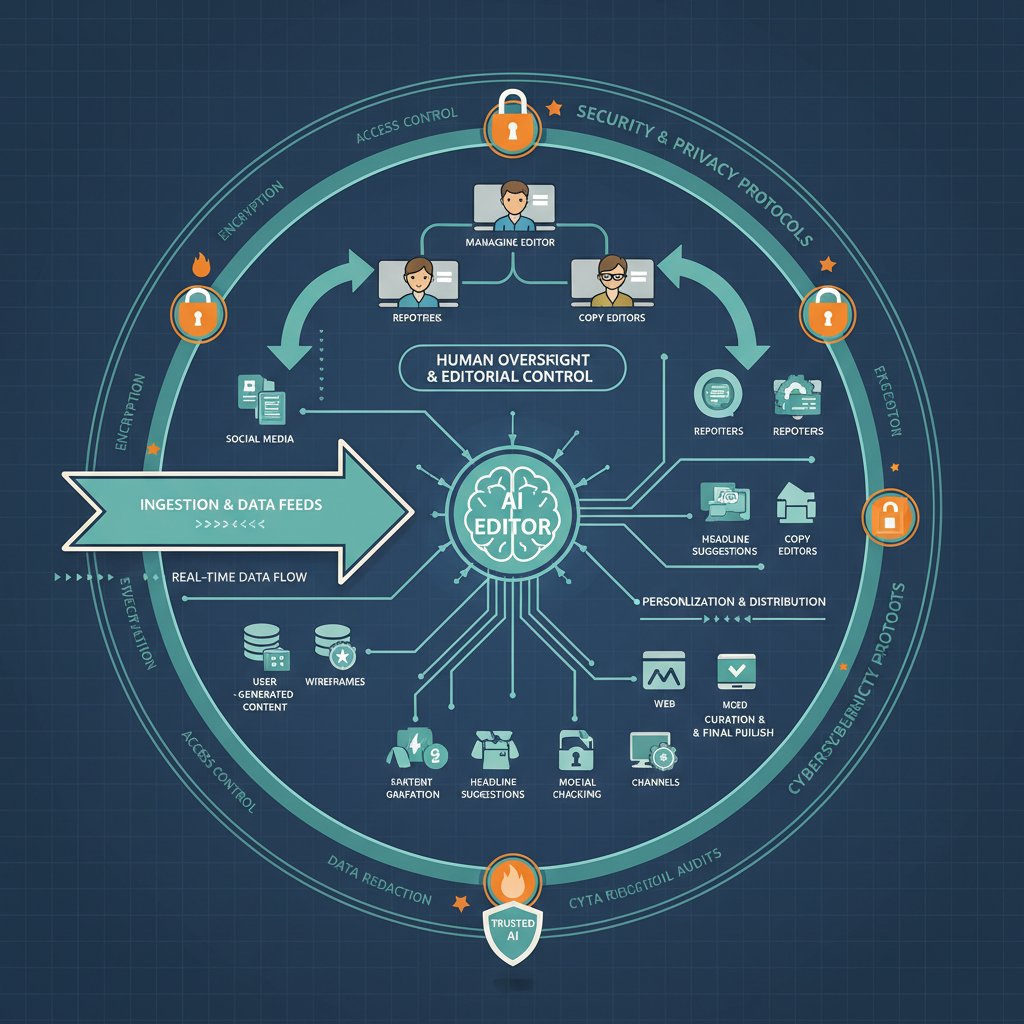

Under the hood: AI models, data pipelines, and human oversight

Modern AI-powered news generators rely on a complex architecture: secure data ingestion, advanced language models (often fine-tuned for specific industries), continuous content validation, and—crucially—human editorial oversight. Newsnest.ai and similar platforms blend automation with the judgment of experienced editors, who review flagged stories, train models against bias, and audit the content pipeline.

While automation handles the heavy lifting—transcription, summarization, translation—humans step in whenever nuance, sensitivity, or fact-checking is required. The result? A workflow where speed and scale don’t come at the expense of integrity.

Bias, manipulation, and algorithmic defense

AI models are only as good as the data they’re trained on. Bias can creep in through skewed inputs, adversarial attacks, or even subtle manipulation of prompts. Some of the most insidious risks involve echo chambers, where algorithms amplify certain narratives while burying dissenting voices.

Red flags for bias in AI-generated news:

- Overrepresentation of a single viewpoint

- Lack of diverse sources cited

- Consistent underreporting of minority issues

- Unexplained changes in editorial tone

- Suspiciously consistent sentiment across stories

- Absence of correction or retraction mechanisms

To fight these risks, state-of-the-art bias mitigation includes diverse data sampling, adversarial model testing, transparent prompt engineering, and, critically, real-time human monitoring. Platforms now flag suspect output for immediate review, and some even offer explainable AI logs so editors can trace exactly how each decision was made.

Who watches the watchers? Auditability and explainability

Audit trails are the unsung heroes of secure news automation. Every content change, model update, or access event is logged—giving publishers and audiences a verifiable record of what was done, when, and by whom. Explainable AI takes this further: breaking down the “black box” so editors and regulators can understand how outputs are generated, spot anomalies, and challenge mistakes.

"Transparency is the new security frontier." — Priya, investigative journalist

These features aren’t just for compliance—they’re shields against manipulation, accidental bias, and creeping editorial drift. When you can trace a headline’s lineage step by step, you neutralize one of modern journalism’s biggest threats: plausible deniability.

Case files: Real-world wins and epic fails in secure AI news deployment

Success stories: Secure news generation at scale

When a major international publisher rolled out secure AI-powered news generation in late 2024, the results were staggering: content delivery times dropped by 60%, fact-checking errors plummeted, and audience trust metrics soared. Automated compliance monitoring flagged three potential privacy violations before publication, averting costly legal battles.

Unconventional uses for secure news generation software:

- Crisis reporting in disaster zones

- Real-time market analysis for financial institutions

- Rapid translation and localization of breaking news

- Internal corporate communications with sensitive data

- Anonymous whistleblower submissions and vetting

- Hyperlocal crime and weather alerts

- Integration with social listening tools for trend analysis

Beyond media, sectors from healthcare to finance are now deploying secure news platforms for everything from urgent public health updates to investor communications—proving the technology’s adaptability across high-stakes industries.

When things go sideways: Breaches, leaks, and public fallout

But when security fails, the fallout is brutal. In early 2024, a well-known news platform suffered a breach that resulted in leaked source identities and fabricated stories. Public trust cratered; advertisers fled.

| Date | Event | Response | Outcome |

|---|---|---|---|

| Feb 2024 | Data leak exposes confidential sources | Public disclosure, emergency patching | Loss of trust, legal investigation |

| Mar 2024 | Fake news generated during political crisis | Internal audit, AI retraining | Regulatory scrutiny, staff overhaul |

| Apr 2024 | Ransomware attack disables news backend | System shutdown, external forensics | Temporary outage, new protocols |

Table 3: Timeline of major breaches and responses in AI-driven news deployment

Source: Original analysis based on Security Navigator 2025, NewsGuard AI Tracking Center

What could have been done differently? Faster patch cycles, mandatory two-factor authentication, real-time anomaly detection, and—most importantly—transparent communication with the public. The best crisis response isn’t spin. It’s accountability.

Lessons from the edge: News generation in high-risk environments

In war zones and authoritarian states, secure news generation software is both shield and sword. Reporters rely on AI-driven anonymization, encrypted communications, and decentralized publishing to get stories out without risking their lives. But these environments demand constant vigilance—attackers innovate as quickly as defenders.

Unique challenges include unreliable infrastructure, hostile actors deploying deepfake “counter-narratives,” and legal regimes that criminalize even the appearance of dissent. Adaptive security features—like instant data shredding, redundant cloud backups, and global failover nodes—are now non-negotiable for newsrooms on the front lines.

How to choose: A buyer’s guide to secure news generation software

Must-have features and dealbreakers

Every buyer wants the “most secure” platform, but what does that actually mean? Non-negotiable features for any reputable news generator:

- End-to-end encryption (in transit and at rest)

- Granular user permissions and role separation

- Immutable audit logs

- Regular third-party security audits

- Automated compliance checks (GDPR, CCPA, etc.)

- Real-time threat detection and alerts

- Integrated bias and fact-checking tools

- Transparent incident response protocols

- Seamless integration with existing workflows

- Responsive, knowledgeable support staff

Priority checklist for secure news software implementation:

- Identify your compliance obligations (GDPR, digital codes)

- Audit existing data flows and integrations

- Document user roles and required permissions

- Demand proof of independent security audits

- Test encryption protocols with internal red-teaming

- Evaluate audit log accessibility

- Verify incident response and breach notification policies

- Assess content bias and correction mechanisms

- Trial software in a sandboxed environment

- Solicit peer reviews and user testimonials

When weighing features against real-world needs, look for platforms with a proven track record—newsnest.ai is frequently cited as a thought leader not just for innovation, but for transparency and real-world accountability across the news security ecosystem.

Cost, complexity, and the hidden price of security

The best security isn’t cheap, but the cost of a breach is far greater. Advanced secure news platforms may require higher upfront investment—think licensing, integration, and staff training—but deliver savings in risk reduction, compliance, and credibility. Unseen costs often lurk in maintenance, user onboarding, and ever-evolving regulatory demands.

| Software | Security Level | Cost | Scalability | Support |

|---|---|---|---|---|

| NewsNest.ai | Advanced | $$$ | Unlimited | 24/7 expert staff |

| Competitor X | Basic | $$ | Restricted | Standard office hours |

| Competitor Y | High | $$$$ | Limited | Dedicated account manager |

Table 4: Cost vs. feature matrix for leading secure news tools

Source: Original analysis based on Personate Blog, 2025

Remember: the sticker price is just the start. Budget for ongoing training, regular security reviews, and compliance audits. If a platform seems “too good to be true,” scrutinize what’s left out of the contract.

Putting it to the test: How to vet your vendor

Don’t take vendors at their word. Demand third-party security audits, penetration testing, and full transparency around incident history. Ask for references from other buyers in your sector. Review software evolution timelines:

- 2018: Early AI-assisted journalism tools, basic automation

- 2020: First generation of encrypted news platforms

- 2022: Integrated compliance modules (GDPR, CCPA)

- 2023: Real-time threat detection debuts

- 2024: Bias mitigation and explainable AI logs

- 2024: Decentralized publishing architectures

- 2025: Automated incident response and source anonymization

- 2025: Cross-industry adoption (finance, healthcare, public sector)

User testimonials are telling; peer reviews and case studies offer unvarnished feedback. Don’t just read the quotes—ask for specifics about real incidents handled, support quality, and the speed of updates.

The culture war: Secure news generation and the battle for public trust

Societal impact: Shaping discourse in a polarized world

Secure AI news isn’t just a technical fix—it’s a weapon in the battle for hearts and minds. When done right, it can rebuild public trust, empower marginalized voices, and bolster democracy. When done wrong, it becomes an accelerant for misinformation, polarization, and cynicism.

Secure news platforms are stepping up, using automated fact-checking, source verification, and real-time audience feedback to fight back against the rising tide of falsehoods. The result? A more resilient, informed, and engaged public—one headline at a time.

Regulatory minefields: Navigating legal and ethical challenges

The legal landscape for AI-generated news is a patchwork of evolving laws and gray areas. Staying compliant means not just ticking boxes, but understanding the intent behind regulations.

The EU’s sweeping legislation on trustworthy AI, mandating transparency, bias mitigation, and human oversight.

Statutes that require platforms to label AI-generated content, flag corrections, and document provenance.

The principle that data must remain under the jurisdiction of the country where it’s collected—impacting storage, access, and global collaboration.

Non-compliance isn’t just risky—it’s a fast track to fines, public backlash, and operational shutdowns. The challenge? Many regulations are still catching up to the technology, leaving gaps and ambiguities that vendors and buyers must navigate with caution.

The arms race: Bad actors, deepfakes, and the next security threats

No security strategy is static. The latest threats include deepfake videos, social engineering campaigns targeting newsroom staff, and adversarial attacks designed to poison AI models from the inside.

Hidden dangers in the future of AI-powered news:

- Deepfake-generated interviews and quotes

- Synthetic news sources seeded for propaganda

- Supply chain attacks on third-party data feeds

- Social engineering of AI model trainers

- Watermark spoofing to bypass authenticity checks

- Automated phishing campaigns targeting editors

- Zero-day exploits in cloud publishing platforms

- Coordinated botnets amplifying misinformation

To stay ahead, leading vendors invest in continuous R&D, participate in cross-industry threat intelligence exchanges, and invite external “white hat” hackers to probe for weaknesses before attackers do.

The road ahead: Innovations, predictions, and the future of secure news software

Emerging tech: What’s changing in the next 3 years

The latest advances in AI-powered news generation focus on deeper explainability, decentralized trust models, and ever tighter human-AI partnerships. Holographic AI assistants, dynamic content validation, and quantum-resistant encryption are no longer sci-fi—they’re being prototyped now in forward-thinking newsrooms.

User expectations are shifting as well: audiences demand transparency, accountability, and the ability to verify every fact at the click of a button. The platforms that thrive will be those that make security and trust as intuitive as the news they deliver.

From automation to augmentation: Human-AI partnerships

The smartest newsrooms aren’t betting on full automation—they’re embracing augmentation, blending AI’s speed with human judgment and context.

Ways newsrooms can leverage secure AI for better reporting:

- Rapid multilingual translation and localization

- Automated trend and anomaly detection

- Instant fact-checking and source verification

- Bias flagging and real-time editorial alerts

- Secure reporting from hostile environments

- Cross-platform distribution with compliance checks

Pitfalls? Over-reliance on automation, underinvesting in training, and ignoring the “human in the loop.” The best results come when tech amplifies journalistic instincts, not replaces them.

Are we ever truly secure? A philosophical (and practical) conclusion

No system is invulnerable. The limits of security are defined as much by human vigilance as by code and cryptography. What matters most is critical engagement: questioning what you read, demanding transparency, and staying alert to new threats.

"Security is a journey, not a destination." — Jamie, tech philosopher

The world of AI-powered news will continue to evolve, bringing both opportunity and danger. But with the right mix of technology, transparency, and tenacity, readers and publishers alike can stay a step ahead in the fight for trustworthy, secure information.

Supplementary deep dives: Adjacent themes and real-world implications

Cross-industry lessons: What news can learn from fintech and cybersecurity

Fintech and news may seem like strange bedfellows, but both industries face relentless attacks, stringent regulation, and the need for absolute trust.

| Feature | Fintech | News | Overlap |

|---|---|---|---|

| Transaction logging | Required by law | Increasingly common | Traceable records |

| Encryption | End-to-end, mandatory | Often optional | Secures sensitive information |

| Real-time alerts | Fraud detection | Threat/bias detection | Automated risk identification |

| Regulatory audits | Annual, strict | Emerging, inconsistent | External validation |

Table 5: Feature comparison—fintech vs. news security needs

Source: Original analysis based on Security Navigator 2025, TechTarget RSAC 2025

Best practices from fintech—such as immutable audit trails, real-time anomaly detection, and layered access controls—are now being adapted to news platforms, raising the security bar across the board.

Common misconceptions: What the marketing never tells you

Vendors love to stretch the truth. Here are seven marketing claims about secure news generation software that don’t hold up under scrutiny:

- “Unhackable” platforms—No such thing; attack surfaces always exist.

- “AI guarantees fact-checking”—AI can accelerate, but not guarantee, accuracy.

- “Fully automated newsrooms”—Human oversight remains essential for credibility.

- “One-click compliance”—True compliance is an ongoing process, not a feature.

- “Universal bias protection”—Bias mitigation is complex and context-dependent.

- “Seamless integration in any environment”—Legacy systems can pose major challenges.

- “Zero maintenance required”—Updates, training, and monitoring are continuous necessities.

To see through the hype, critically evaluate claims, demand documentation, and insist on access to independent test results.

Practical tools: Self-assessment and next steps

Ready to dive into secure news automation? Start by assessing your organization’s real needs and readiness.

- Have you identified your compliance requirements?

- Is your current infrastructure compatible with secure AI platforms?

- Do you have a clear data governance policy?

- Are roles and permissions sharply defined?

- Is staff trained to recognize and report cyber threats?

- Have you budgeted for ongoing security reviews?

- Are you prepared to communicate transparently in a breach scenario?

To continue learning, tap into resources from recognized industry leaders—newsnest.ai offers up-to-date guides, case studies, and thought leadership on building secure, AI-powered newsrooms for organizations of every scale.

Conclusion

The promise and peril of secure news generation software are now in sharp relief. AI-powered platforms like newsnest.ai are rewriting the rules of digital journalism, offering unmatched speed, scale, and personalization. Yet with these gains come profound new risks: cyber threats, algorithmic bias, and the ever-present danger of eroding public trust. The stories you read—and the truths you believe—are only as strong as the software they’re built on.

Don’t settle for assurances. Demand proof. Prioritize transparency, relentless auditing, and a culture of critical questioning. In a world drowning in AI-generated content, secure news generation software is both shield and test of our collective vigilance. The battle for trustworthy headlines is just beginning—and the only certainty is that complacency is the greatest risk of all.

Sources

References cited in this article

- Security Navigator 2025(thehackernews.com)

- NewsGuard AI Tracking Center(newsguardtech.com)

- TechTarget RSAC 2025(techtarget.com)

- Personate Blog(blog.personate.ai)

- KPMG Cybersecurity 2025(kpmg.com)

- Forbes: Future of Software 2025(forbes.com)

- App Developer Magazine(appdevelopermagazine.com)

- CSIS: Navigating Risks of AI in News(csis.org)

- Columbia Journalism Review(cjr.org)

- AP News: Davos Report(apnews.com)

- ThinkSys: Secure Software Development(thinksys.com)

- Forrester: 2025 Security Predictions(forrester.com)

- SentinelOne: AI Security Risks(sentinelone.com)

- Medium: AI Cryptography(medium.com)

- OneAI on AI News Agents(oneai.com)

- BigID: Navigating AI Data Privacy(bigid.com)

- DataGrail: Generative AI Privacy(datagrail.io)

- Silo AI: GDPR & AI(silo.ai)

- Two Rivers Public Schools: Spot Fake News(libguides.trschools.k12.wi.us)

- Poynter: Misinformation Red Flags(poynter.org)

- Alltek Holdings: Spot Fake Software(alltekholdings.com)

- CircadianRisk: AI Myths in Security(circadianrisk.com)

- Q3Tech: AI Cybersecurity Myths(q3tech.com)

- Forbes Tech Council(forbes.com)

- Johns Hopkins: AI Image Generator Hacks(sos-vo.org)

- ApproachableAI: AI Vulnerabilities(approachableai.com)

- Forbes: AI Data Leaks(forbes.com)

- CISA: Secure by Design(cisa.gov)

- Developer Tech: AI and Software Development(developer-tech.com)

- CSO Online: Securiti Gencore AI(csoonline.com)

- SentinelOne: Generative AI Security Risks(sentinelone.com)

- CEBRI Journal: AI Bias & Cybersecurity(cebri.org)

- IBM: Algorithmic Bias(ibm.com)

- PwC: Trust in AI(pwc.com)

- LinkedIn: Securing AI Systems(linkedin.com)

- Microsoft Blog: Real-World AI Stories(blogs.microsoft.com)

- Medium: AI Startup Dynamics(medium.com)

- ClarifyCyber: DeepSeek AI Data Leak(clarifycyber.com)

- Spiceworks: ChatGPT Leaks(spiceworks.com)

- Bitdefender: Cutout.Pro Breach(bitdefender.com)

Ready to revolutionize your news production?

Join leading publishers who trust NewsNest.ai for instant, quality news content

More Articles

Discover more topics from AI-powered news generator

Scale News Coverage Easily Without Killing Trust or Your Newsroom

Scale news coverage easily with 7 radical strategies. Discover how to automate, outpace rivals, and rethink the newsroom for 2026. Don’t let your stories fall behind.

Scalable News Generation Platforms: Power, Risks, and ROI

Discover how AI-powered news generators are reshaping journalism, exposing hidden risks and opportunities. Read before you automate.

Scalable News Content, Real-Time Ai, and the Fight for Truth

Scalable news content is rewriting how journalism works. Discover the edgy truth, real risks, and how to harness AI-powered news generators now.

Scalable News Automation in 2026: Profits, Risks, and Reality

Scalable news automation is redefining journalism in 2026. Discover hidden risks, rare wins, and real-world strategies to master AI-powered news generation now.

Save Costs on News Writing Without Triggering a Race to the Bottom

Save costs on news writing with actionable strategies, AI-powered tools, and real-world case studies. Uncover bold truths, avoid pitfalls, and future-proof your newsroom.

Robot Journalism and Who Really Controls the Future of News

Robot journalism is rewriting the news industry. Discover the secrets, risks, and opportunities behind AI-powered reporting. Don’t get left in the dark—read on.

Replacement for Manual Fact-Checking Isn’t What You Think

Replacement for manual fact-checking is reshaping how we verify truth. Discover bold insights, real risks, and what the future holds—don’t get left behind.

Replacement for Freelance Journalists: What Newsrooms Choose Now

Replacement for freelance journalists is here—explore 7 transformative solutions, from AI-powered news generators to hybrid models. Rethink how news gets made.

Reliable News Generation Software and the Battle for Trust

In 2025, reality is a negotiation. News is no longer just reported—it’s generated, curated, and sometimes entirely conjured by algorithms spinning the world’s

Reduce News Writing Expenses and Outsmart Bigger Newsrooms

Reduce news writing expenses with bold, AI-driven strategies. Discover how to cut newsroom costs, boost quality, and outpace the competition in 2026.

Real-World News Generation Examples That Changed Journalism

Real-world news generation examples reveal how AI transforms breaking news, sports, and local stories. Discover the truth, controversies, and must-see cases now.

Real-Time Technology News Articles and the New Trust War

Real-time technology news articles are changing how we think, invest, and react. Discover the hidden costs, power players, and how to survive the info deluge. Read before your next refresh.