News Feed Personalization Is Quietly Rewriting Your Reality

Step into your daily scroll. The headlines aren’t just news; they’re a mirror and a maze, built just for you by invisible hands. News feed personalization has become the unseen architect of our digital lives, shaping what we know, what we question, and, more alarmingly, what we never even see. With over 70% of news app users expecting tailored content and AI-powered algorithms analyzing every click, the line between curation and manipulation is easier to cross than ever. Are you truly in control of your news, or is your feed controlling you? This deep dive exposes the hidden truths, razor-edged benefits, and very real dangers of algorithmic news curation. Get ready for an unflinching look at the forces shaping your reality, and learn how to take back the keys before your digital appetite is engineered beyond recognition.

The evolution of news: from editors to algorithms

A brief history of news curation

For generations, newsrooms were temples of editorial judgment. Editors decided which stories ran, which voices mattered, and which scandals would roar or whimper. The process was messy, sometimes flawed, but largely human. Editorial boards relied on decades of experience, professional ethics, and often, a sense of civic duty. These gatekeepers weren’t infallible, but their decisions were—at least in theory—anchored by shared standards and public interest.

When the digital revolution stormed in, it promised endless choice and instant access. But the shift wasn’t just about speed or reach. The real revolution was in who (or what) got to decide what you would see. Human editors were gradually nudged aside as algorithms—opaque, mathematically precise, and shockingly efficient—arrived to curate our attention at scale.

Key Definitions:

- Curation: The act of selecting, organizing, and presenting information or content. Traditionally handled by human editors in newsrooms, who aimed to balance significance and diversity.

- Editorial judgment: Human decision-making about newsworthiness and public interest, rooted in professional standards and societal values.

- Algorithmic feed: A stream of content assembled by automated systems that analyze user data, typically prioritizing engagement and personalization over editorial values.

The cost of this transition? While efficiency and scale skyrocketed, something intangible was lost: the human hand that balanced context, diversity, and responsibility. According to the Reuters Institute, 2024, today’s news curation is less about what the world needs to know and more about what keeps each user swiping.

How algorithms took over your headlines

The dawn of the algorithmic era wasn’t a light switch; it was a slow burn. In the early 2000s, news platforms began experimenting with basic recommendation engines. By the 2010s, social media feeds were driven almost entirely by engagement metrics, shifting power from editors to code. By 2024, AI and machine learning had come to dominate news curation on the major platforms. Algorithms now dissect your reading habits, search history, and even your pauses while scrolling to serve up headlines tailored to your profile.

| Year | Platform / Tech | Milestone | Impact |
|---|---|---|---|
| 2004 | Google News | Early “personalize by topic” | First taste of tailored news selection |
| 2009 | Social media platforms | News Feed algorithms launched | Engagement metrics over editorial priorities |
| 2016 | Apple News, Flipboard | Mobile app curation | Personalized feeds by device usage |
| 2020 | AI-powered models (NLP, ML) | Deep learning in news | Content tailored to micro-interests |
| 2024 | AI news generators (e.g., newsnest.ai) | Real-time, automated news | Hyper-personalized, instant delivery |

Table 1: Timeline of key news feed personalization milestones. Source: Original analysis based on Reuters Institute, 2024; Contentful, 2024.

The shift is profound: it’s not just what you’re shown, but how much say you have in the process. User agency—the power to choose or even understand what shapes your news—has been quietly redefined. As journalist Maya provocatively notes:

"Algorithms aren’t just tools—they’re gatekeepers now." — Maya, 2024

The role of data: why your clicks matter more than ever

Every swipe, tap, and share is a data point, fodder for the algorithm’s relentless optimization. Large AI-driven news platforms ingest enormous volumes of behavioral data every day, mapping your interests, your location, and even how long you hover over a headline. According to Quintype, 2024, over 70% of users actively engage with personalization settings, often without realizing that every adjustment trains their feeds to deliver more of what they already like.

But here’s the catch: this isn’t just about convenience. The commodification of your behavior means your attention is the product being sold to advertisers, political campaigns, and content creators clawing for relevance. The privacy trade-offs are often buried in fine print, and the more data you provide, the deeper the algorithm’s grip on your worldview.

Hidden ways news platforms track and use your data:

- Click tracking: Every opened article signals interest, boosting similar content in your feed.

- Scroll depth: How far you scroll down a page informs content ranking.

- Dwell time: Pausing on a headline or image increases its perceived relevance.

- Like/share patterns: Social engagement weighs heavily in algorithmic promotion.

- Device and location metadata: Where and how you access news influences content selection.
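To make the list above concrete, here is a minimal Python sketch of how implicit signals like clicks, dwell time, scroll depth, and shares might be folded into a per-topic interest profile. The event fields, action names, and every weight are hypothetical assumptions for illustration (device and location metadata are left out for brevity); real platforms do not publish their signal weights and typically learn them from data rather than hard-coding them.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical weights for illustration only; real platforms do not publish theirs.
ACTION_WEIGHTS = {"view": 0.0, "click": 1.0, "like": 2.0, "share": 3.0}
DWELL_WEIGHT_PER_SECOND = 0.05  # assumption: longer pauses read as stronger interest
SCROLL_DEPTH_WEIGHT = 1.5       # assumption: reading to the end counts extra


@dataclass
class Event:
    topic: str                  # topic label of the article involved
    action: str                 # "view", "click", "like", or "share"
    dwell_seconds: float = 0.0  # time spent on the headline or article
    scroll_depth: float = 0.0   # fraction of the article scrolled, 0.0 to 1.0


def interest_profile(events: list[Event]) -> dict[str, float]:
    """Fold implicit signals into per-topic interest scores that sum to 1."""
    raw: dict[str, float] = defaultdict(float)
    for e in events:
        score = ACTION_WEIGHTS.get(e.action, 0.0)
        score += DWELL_WEIGHT_PER_SECOND * e.dwell_seconds
        score += SCROLL_DEPTH_WEIGHT * e.scroll_depth
        raw[e.topic] += score
    total = sum(raw.values())
    return {topic: s / total for topic, s in raw.items()} if total else {}


events = [
    Event("politics", "click", dwell_seconds=40, scroll_depth=0.9),
    Event("sports", "view", dwell_seconds=2),
    Event("politics", "share"),
]
print(interest_profile(events))
```

Even in this toy version, one long read and one share are enough to make politics account for nearly the entire profile, which is exactly the kind of signal a ranking system would then feed back into your headlines.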

The bottom line: in the world of news feed personalization, you’re both the subject and the source material. Your digital fingerprints shape the headlines you see—and the ones that vanish from view.

Inside the personalization engine: how your feed gets built

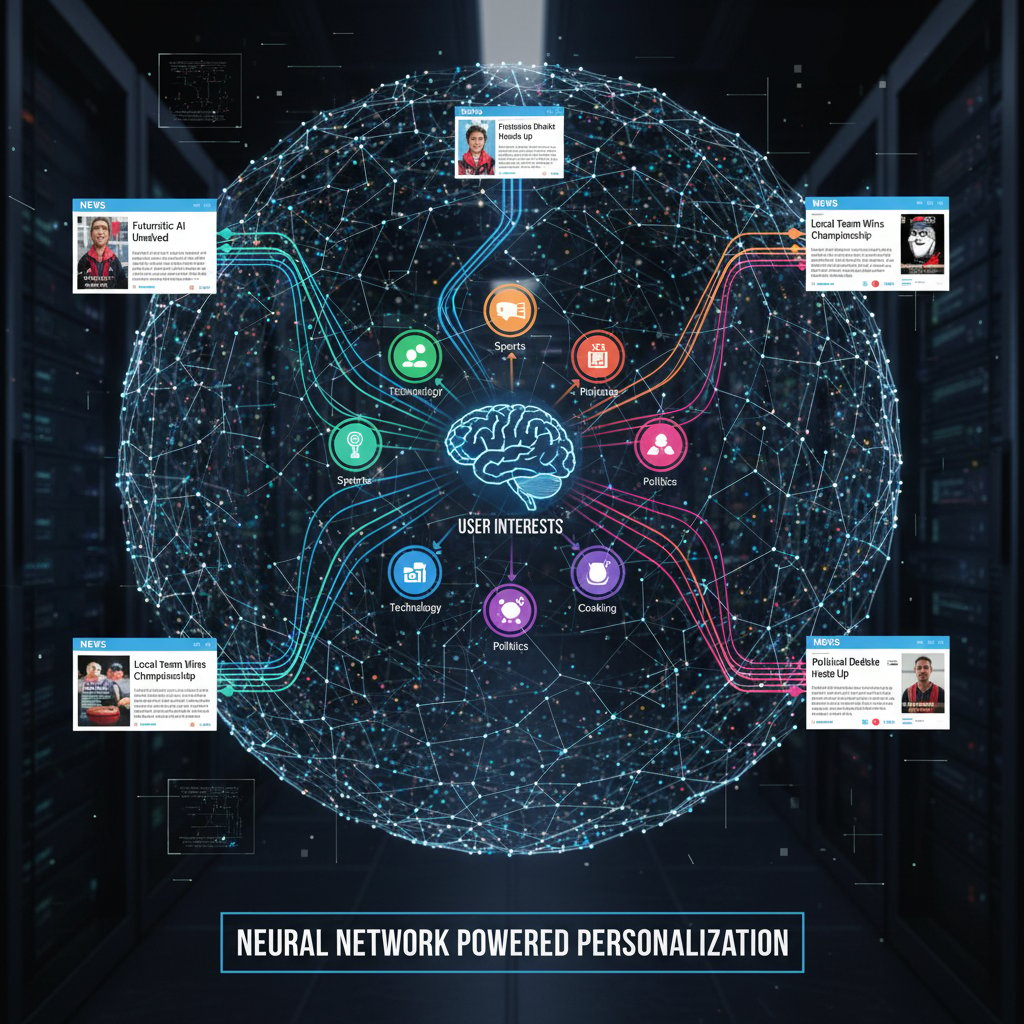

The mechanics: how AI tailors your news

At the heart of every personalized news feed is a blend of technical wizardry and psychological insight. Collaborative filtering analyzes the behavior of users similar to you, suggesting stories based on what “your tribe” reads. Natural Language Processing (NLP) dissects article content and matches it to your demonstrated interests—sometimes down to the subtlest keyword. Content-based models serve up stories eerily aligned with your past reading, creating feedback loops that are hard to break.
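Collaborative filtering is easier to grasp with a toy example. The sketch below is a minimal user-based variant, assuming a made-up read history and cosine similarity as the similarity measure; production recommenders usually work with far larger, sparser data and often use matrix factorization or learned embeddings instead.

```python
import math

# Toy interaction data: 1 means the user read the story. All data is made up.
READS = {
    "ana": {"s1": 1, "s2": 1, "s3": 1},
    "ben": {"s1": 1, "s2": 1, "s4": 1},
    "chloe": {"s5": 1, "s6": 1},
}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse read vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend(user: str, reads: dict[str, dict[str, float]], k: int = 3) -> list[str]:
    """Score unseen stories by the similarity-weighted reads of other users."""
    mine = reads[user]
    scores: dict[str, float] = {}
    for other, theirs in reads.items():
        if other == user:
            continue
        sim = cosine(mine, theirs)
        if sim <= 0:
            continue  # users with nothing in common contribute nothing
        for story, value in theirs.items():
            if story not in mine:
                scores[story] = scores.get(story, 0.0) + sim * value
    return sorted(scores, key=scores.get, reverse=True)[:k]


print(recommend("ana", READS))
print(recommend("chloe", READS))
```

In this toy data, Ana is steered toward the story Ben read, while Chloe, who shares no reads with anyone, gets nothing from a “tribe” at all: a small-scale version of how similarity-based recommendations can keep separate groups of readers apart.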

An AI-powered news generator, like the one behind platforms such as newsnest.ai, takes this a step further. It ingests breaking news, runs it through language models trained on large volumes of news text, and generates original articles tailored to your preferences. The system cross-references your behavior, current trends, and even sentiment analysis to predict what will keep you engaged right now.

This is personalization at the bleeding edge—dynamic, responsive, and almost unsettlingly accurate.

Personalization vs. manipulation: where’s the line?

Not all customization is benevolent. There’s a thin, slippery line between helping users discover relevant content and nudging them toward specific beliefs or behaviors. When does “tailored news” cross into manipulation? The answer is rarely clear-cut. Algorithms optimize for engagement, not ethics. If outrage or sensationalism keeps you scrolling, expect more of it. The feedback loop intensifies, and user agency can become an illusion.

Ethical considerations are mounting. Consent mechanisms—those endless cookie banners and privacy settings—are often perfunctory, rarely empowering users to understand or control how algorithms shape their reality. As digital ethicist Jordan observes:

"What you see isn’t always what you wanted. Sometimes it’s just what the algorithm wants." — Jordan, 2024

Transparency, true user control, and accountability are still evolving ideals, not standard practice.

The rise of AI-powered news generators

Platforms like newsnest.ai have redefined what’s possible in content creation and delivery. By automating news production, these services offer instant, scalable, and deeply customized reporting. Whether you’re monitoring financial markets, tech breakthroughs, or local events, AI-powered news generators can outpace traditional newsrooms on speed: no human bottleneck, no editorial fatigue, just rapid-fire coverage tuned to your every preference.

| Feature | Human-edited News | AI-generated Feeds (e.g., newsnest.ai) |
|---|---|---|
| Speed | Hours to days | Seconds to minutes |
| Bias | Editorial, human | Algorithmic, data-driven |
| Diversity | Curated, variable | Hyper-custom, risk of echo chamber |
| Depth | Investigative, slow | Surface-level, broad, can be shallow |

Table 2: Human-edited vs. AI-generated news feed comparison. Source: Original analysis based on Contentful, 2024, Cyber Journalist, 2024.

AI news generators democratize news creation and break the monopoly of legacy outlets—but they also multiply the risk of algorithmic bias and shallow reporting if not carefully managed.

The promise and peril: what news feed personalization gets right—and wrong

The benefits: relevance, engagement, and discovery

Let’s give the algorithm its due: news feed personalization can be a lifesaver in the digital noise. With millions of headlines published daily, a finely tuned feed can elevate stories that actually matter to you, while filtering irrelevant clutter. According to Microsoft Edge, 2024, mobile news consumption with personalized feeds jumped over 30% year-over-year, as users gravitate toward platforms that “get them.”

Hidden benefits of news feed personalization that experts won’t tell you:

- Time efficiency: Personalized feeds can reduce doomscrolling by surfacing high-priority content first, potentially saving hours each week.

- Discovery of niche topics: Algorithms can reveal voices and stories you’d never find on mainstream news sites.

- Adaptive learning: The more you interact with your feed, the better it adapts, improving accuracy and satisfaction.

- Distraction filtering: When tuned to deprioritize clickbait, personalization can help maintain focus and attention.

- Self-education: Targeted news makes it easier to deepen expertise in areas you care about, from finance to tech.

But these strengths hide a darker, more complex story.

The dark side: filter bubbles, bias, and missed stories

Personalization’s shadow side is the infamous filter bubble. Algorithms learn your preferences—and then feed them back to you ad nauseam, reinforcing your worldview and muting opposing voices. The impact isn’t theoretical. According to Arcreactions, 2024, many users report feeling “trapped” in a cycle of repetitive stories, with little exposure to new perspectives.

"Sometimes I feel like I’m reading the same story, over and over." — Alex, 2024

This echo chamber effect can deepen bias, polarize public discourse, and even erode trust in journalism. Missed stories aren’t just missed opportunities—they’re blind spots that shape collective understanding.

Common myths about news feed personalization

Personalization is not a panacea. In fact, many common beliefs about algorithmic feeds are flat-out wrong.

Myths vs. reality of news feed algorithms:

- Myth: Personalization always improves your news experience.

- Reality: It can just as easily trap you in echo chambers.

- Myth: Algorithms are neutral.

- Reality: Every algorithm is built on specific values and priorities—usually maximizing engagement, not diversity.

- Myth: You’re fully in control of your feed.

- Reality: Most users unknowingly cede agency to opaque machine learning processes.

- Myth: Personalization only uses your explicit preferences.

- Reality: Platforms track far more—behavior, device, time, and network effects.

Don’t buy the myth of neutral code: personalization reflects the incentives and blind spots of its designers.

Case studies: who’s winning and losing the personalization game

Success story: how one publisher supercharged engagement

Consider a mid-size digital publisher struggling with stagnant traffic. In early 2023, they overhauled their platform with AI-driven feed personalization. Before the update, average session time hovered at 2.5 minutes, bounce rates were 58%, and repeat visits lagged industry benchmarks. Within six months of rolling out dynamic, user-adaptive feeds, engagement metrics soared: session times topped 4 minutes, bounce rates dropped to 38%, and repeat visits climbed 42%.

| Metric | Before Personalization | After Personalization | Relative Change |
|---|---|---|---|
| Avg. Session Time | 2.5 min | 4.1 min | +64% |
| Bounce Rate | 58% | 38% | -34% |
| Repeat Visits | 1.2x/month | 1.7x/month | +42% |

Table 3: Engagement metrics before and after personalization. Source: Original analysis based on industry reporting and Quintype, 2024.
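The percentage changes in Table 3 are relative changes, which is worth checking because the bounce rate fell by 20 percentage points but by about 34% in relative terms. A short Python check, using only the figures from the table:

```python
def relative_change(before: float, after: float) -> float:
    """Relative change in percent: (after - before) / before * 100."""
    return (after - before) / before * 100


# Before/after figures taken from Table 3.
table_3 = {
    "Avg. session time (min)": (2.5, 4.1),
    "Bounce rate (%)": (58, 38),
    "Repeat visits (per month)": (1.2, 1.7),
}

for name, (before, after) in table_3.items():
    print(f"{name}: {relative_change(before, after):+.0f}%")
# Avg. session time (min): +64%
# Bounce rate (%): -34%
# Repeat visits (per month): +42%

# The bounce rate drop expressed in percentage points instead:
print(f"Bounce rate: {58 - 38} percentage points lower")
```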

Key to success: transparent personalization tools, user education, and regular audits to avoid monotony.

Personalization gone wrong: when algorithms backfire

But the risks are real. In 2023, a high-profile news app faced a backlash after its personalization algorithm began amplifying misinformation during a breaking political event. Users reported seeing eerily similar, sensational headlines regardless of their stated preferences. The fallout included public apologies, a dip in app store ratings, and a compliance investigation.

The lesson? Algorithmic opacity and lack of checks can make platforms complicit in spreading falsehoods. The company responded by instituting manual review layers and boosting transparency—but only after losing user trust.

User perspective: can you really trust your feed?

Take Jamie, a regular news consumer who found themself stuck in a political echo chamber. Alarmed by how narrow their perspective had become, Jamie took active steps to audit and diversify their feed.

Step-by-step guide to auditing and adjusting your news feed:

- Review your app’s content and privacy settings.

- Unfollow or mute repetitive sources.

- Actively search for and subscribe to diverse viewpoints.

- Clear browsing history and retrain the algorithm with new interests.

- Use browser extensions or third-party tools for a more balanced feed.

- Schedule periodic self-audits to check for creeping bias.
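The self-audit step can be partly quantified. The sketch below is a minimal example, assuming you can export or jot down the outlet behind each article you read; it measures how concentrated your reading is using the share of your top source and normalized Shannon entropy (1.0 means an even mix, 0.0 means a single source). The outlet names are placeholders, and these metrics are one reasonable choice among many, not a standard audit method.

```python
import math
from collections import Counter


def source_diversity(history: list[str]) -> dict[str, float]:
    """Summarize how concentrated a reading history is across news sources."""
    if not history:
        raise ValueError("history must contain at least one article")
    counts = Counter(history)
    shares = [c / len(history) for c in counts.values()]
    entropy = -sum(p * math.log2(p) for p in shares)
    # Normalize by the maximum possible entropy for this many sources.
    max_entropy = math.log2(len(counts)) if len(counts) > 1 else 1.0
    return {
        "distinct_sources": float(len(counts)),
        "top_source_share": max(shares),
        "normalized_entropy": entropy / max_entropy,  # 1.0 = even mix
    }


# Placeholder outlet names: one entry per article in your reading log.
last_week = ["Outlet A"] * 8 + ["Outlet B"] * 2
print(source_diversity(last_week))
```

Running the same check month over month is one way to spot creeping concentration before it hardens into a bubble.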

These steps won’t eliminate algorithmic bias, but they can help you wrest back some control.

The filter bubble dilemma: are you seeing the whole story?

What is a filter bubble—and why does it matter?

A filter bubble is a digital echo chamber—an environment where algorithms shield you from information that challenges your assumptions. Personalized news feeds reinforce existing beliefs, narrowing the scope of your world. The psychological impact is insidious: you start to confuse familiarity with truth, and diversity with noise.

The social cost is even greater. Filter bubbles fragment public discourse, making dialogue across ideological lines more difficult. According to Cyber Journalist, 2024, content isolation can contribute to rising polarization and even influence election outcomes.

Key Concepts:

- Echo chamber: A setting where only like-minded views are amplified, reinforcing existing biases.

- Information cocoon: A personalized content environment that insulates users from diverse viewpoints.

- Context collapse: The flattening of social boundaries in digital spaces, making nuanced discussion harder.

How filter bubbles form (and how to break them)

Filter bubbles are built through constant, invisible reinforcement. Every click, like, and linger is a vote for more of the same. Over time, your feed becomes an echo chamber tailored perfectly to your taste—and your blind spots.
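The reinforcement loop described above can be illustrated with a deliberately simplified, deterministic simulation. It assumes, hypothetically, that a feed starts perfectly balanced across four topics, that the reader’s click rates differ only slightly, and that each topic’s ranking weight grows in proportion to the clicks it earns (exposure times click rate). None of these numbers come from a real platform.

```python
def simulate_feedback_loop(
    click_rates: dict[str, float], rounds: int, learning_rate: float = 1.0
) -> list[dict[str, float]]:
    """Return the feed's topic shares at the start and after each round."""
    weights = {topic: 1.0 for topic in click_rates}  # perfectly balanced start
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        history.append({t: w / total for t, w in weights.items()})
        # Clicks earned are proportional to exposure (the weight) times the click rate,
        # so each weight grows multiplicatively by (1 + learning_rate * click_rate).
        weights = {t: w * (1 + learning_rate * click_rates[t]) for t, w in weights.items()}
    total = sum(weights.values())
    history.append({t: w / total for t, w in weights.items()})
    return history


# Hypothetical click rates: the reader only slightly prefers politics.
click_rates = {"politics": 0.30, "science": 0.25, "sports": 0.20, "arts": 0.20}
history = simulate_feedback_loop(click_rates, rounds=30)
print(f"politics share: start {history[0]['politics']:.0%}, "
      f"after 30 rounds {history[-1]['politics']:.0%}")
```

With a click-rate gap of just five points, the favored topic’s share grows every round and ends up at roughly two-thirds of the feed after 30 rounds, even though the reader never asked for that.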

Timeline of news feed personalization and filter bubble concerns:

- Early 2000s: Personalized recommendations debut on news sites.

- 2010: Social media platforms optimize for engagement, not diversity.

- 2016: “Fake news” crises spotlight algorithmic amplification of bias.

- 2020: Political polarization surges amid filter bubble awareness.

- 2024: Growing calls for transparency, user control, and algorithmic audits.

To burst your bubble, experts recommend: regularly auditing your sources, seeking out unfamiliar viewpoints, and using privacy tools to limit data profiling.

Case in point: filter bubbles and the 2024 election

During the 2024 global election cycle, researchers documented how news feed personalization shaped political narratives. Users were more likely to see stories aligning with their existing views, deepening polarization. Misinformation spread faster within like-minded bubbles, while trust in news sources diverged sharply.

| Trust in News Sources | Pre-Personalization | Post-Personalization | Change (percentage points) |
|---|---|---|---|
| High Trust (all sources) | 55% | 36% | -19 |
| High Trust (selected feeds) | 24% | 41% | +17 |
| Low/No Trust | 21% | 23% | +2 |

Table 4: Survey data on news source trust pre- and post-personalization. Source: Original analysis based on Reuters Institute, 2024.

Consequences: increased polarization, more pronounced echo chambers, and a spike in misinformation complaints. Breaking the bubble requires user agency and robust platform safeguards.

The ethics of personalization: who decides what you see?

Algorithmic transparency: can you trust the code?

With algorithms shaping reality, calls for transparency are growing louder. Users want to know why certain stories are promoted and others buried. Open-source initiatives are emerging that aim to demystify the “black box” of news curation. In the EU, the Digital Services Act already requires online platforms to disclose the main parameters of their recommender systems, while US lawmakers have proposed algorithmic accountability rules that would mandate similar disclosures and user controls.

Trust in the code depends on visibility—without it, faith in news personalization will always be on shaky ground.

Privacy, consent, and the cost of free news

Every personalized feed is built on a mountain of user data. “Free” news platforms often pay the bills by monetizing that data through targeted advertising and data-sharing partnerships. The trade-off is rarely clear to users, and privacy breaches abound. According to privacy experts, emerging tools now let users anonymize their web footprint or opt out of certain types of tracking, but adoption remains low.

Red flags to watch out for on personalized news platforms:

- Opaque privacy policies: Hard-to-parse legalese that hides real data practices.

- Mandatory data sharing: Platforms that require location or social data for access.

- No opt-out: Lack of settings to disable tracking or customize consent.

- Excessive ad targeting: Personalized ads that eerily reflect private interests.

- Infrequent transparency reports: Platforms that don’t disclose algorithm updates or audit results.

Your right to privacy shouldn’t be the price of admission to a functioning news diet.

Who gets left behind? The risk of information inequality

Personalization can empower—but it can also exclude. Less tech-savvy users, marginalized communities, and those without strong digital literacy are at risk of being left out, or worse, manipulated by biased feeds. The digital divide is compounded by algorithmic opacity, making it harder for vulnerable groups to navigate or challenge the system.

"Personalization can empower—or exclude." — Leila, 2024

Bridging this gap requires not just better technology, but education and outreach.

Taking control: how to master your personalized news feed

Customizing your feed: practical tips for users

Don’t be a passive participant in your own digital reality. Take back control with deliberate choices and periodic self-audits.

Priority checklist for news feed personalization:

- Review your current sources: Identify echo chambers and diversify.

- Set clear preferences: Use platform tools to shape your interests.

- Limit data sharing: Only provide necessary information—opt out where possible.

- Audit regularly: Check your feed for creeping bias every month.

- Use privacy tools: Browser extensions and third-party apps can help mask your data.

- Report bad recommendations: Flag content that feels manipulative or off-base.

Periodic self-audits are essential—your news diet changes as the world (and the algorithm) evolves.

Tools and resources for a smarter news diet

There’s a growing arsenal of tech to help users fight back. Apps like newsnest.ai, browser extensions that flag bias or filter bubbles, and privacy tools to anonymize your browsing are all part of the new toolkit. Leverage platform settings, subscribe to newsletters from diverse sources, and experiment with feed diversity sliders when available.
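Diversity sliders, where platforms offer them, can be thought of as re-ranking a relevance-sorted feed. One well-known way to do that is maximal marginal relevance (MMR); the sketch below is a simplified version that treats two stories as redundant when they share a topic label. The candidate stories, scores, topics, and the idea of mapping a 0-to-1 slider onto the `diversity` parameter are all hypothetical assumptions, not how any particular app implements its slider.

```python
def rerank_with_diversity(
    candidates: list[str],
    relevance: dict[str, float],
    topic: dict[str, str],
    diversity: float,
    k: int,
) -> list[str]:
    """Greedy MMR-style re-ranking; diversity=0.0 is pure relevance."""
    selected: list[str] = []
    pool = list(candidates)
    while pool and len(selected) < k:
        def mmr_score(item: str) -> float:
            # Redundancy is 1.0 if an already-picked story shares this topic, else 0.0.
            redundancy = max(
                (1.0 if topic[item] == topic[s] else 0.0 for s in selected),
                default=0.0,
            )
            return (1 - diversity) * relevance[item] - diversity * redundancy

        best = max(pool, key=mmr_score)
        selected.append(best)
        pool.remove(best)
    return selected


# Hypothetical candidate stories, already scored for relevance.
relevance = {"a": 0.90, "b": 0.85, "c": 0.80, "d": 0.50}
topic = {"a": "politics", "b": "politics", "c": "politics", "d": "science"}
stories = list(relevance)

print(rerank_with_diversity(stories, relevance, topic, diversity=0.0, k=3))
print(rerank_with_diversity(stories, relevance, topic, diversity=0.5, k=3))
```

At `diversity=0.0` the feed is pure relevance (three politics stories in a row); at `diversity=0.5` the science story jumps to second place.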

Unconventional uses for news feed personalization:

- Skill training: Follow niche outlets to sharpen expertise in a new field.

- Global awareness: Add international news sources to break local bias.

- Fact-checking: Use feeds to cross-reference competing narratives in real time.

- Social listening: Monitor what’s trending outside your usual circles.

- Activism: Curate feeds with advocacy or watchdog sources for community engagement.

Diversity isn’t just an ethical choice—it’s a practical one for critical thinking and growth.

Common mistakes—and how to avoid them

News feed personalization isn’t a set-and-forget process. Users often fall into traps: oversharing data, failing to audit, or blindly trusting algorithmic recommendations.

Step-by-step troubleshooting for a broken news feed:

- Recognize the problem: Are you seeing repetitive, biased, or irrelevant stories?

- Check your preferences: Reset and clarify your interests.

- Purge your history: Clear cache, cookies, and app data.

- Diversify your sources: Add new perspectives, even if unfamiliar.

- Monitor algorithm changes: Stay alert to platform updates and new privacy settings.

Mistakes are inevitable, but a little vigilance goes a long way.

The future of news feed personalization: what’s next?

Next-gen personalization: beyond algorithms

Personalization isn’t just about better code—it’s about explainable, adaptive, and user-driven news curation. Trends like explainable AI, where algorithms “show their work,” and real-time adaptive feeds that adjust as your context shifts, are reshaping what’s possible. The most resilient systems blend human judgment with machine speed, letting users peek behind the curtain and even override the algorithm when needed.
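For simple scoring models, “showing the work” can be as direct as listing each feature’s contribution to a story’s score. The sketch below assumes a hypothetical linear ranking model with made-up feature names and weights; real feeds use far more complex models, which need dedicated attribution techniques to produce comparable explanations.

```python
# Hypothetical features and weights; not any real platform's ranking model.
WEIGHTS = {
    "topic_match": 2.0,             # how closely the story matches your interest profile
    "hours_since_published": -0.1,  # older stories lose points
    "source_followed": 1.0,         # 1.0 if you follow the outlet, else 0.0
    "predicted_engagement": 1.5,    # the model's estimate that you will click
}


def score_with_explanation(
    features: dict[str, float],
) -> tuple[float, list[tuple[str, float]]]:
    """Return the story's score plus each feature's contribution, largest first."""
    contributions = {name: w * features.get(name, 0.0) for name, w in WEIGHTS.items()}
    total = sum(contributions.values())
    reasons = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return total, reasons


score, reasons = score_with_explanation(
    {"topic_match": 0.9, "hours_since_published": 5, "predicted_engagement": 0.8}
)
print(f"score = {score:.2f}")
for name, contribution in reasons:
    print(f"  {name}: {contribution:+.2f}")
```

A “why am I seeing this?” panel built on output like this would tell the reader that topic match did most of the work, which is also the lever a user override would need to reach.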

The future is less about being fed news—and more about co-creating your own information landscape.

Hyper-personalization: when the feed knows you too well

There’s a point where personalization can go too far. Hyper-targeted feeds know your interests, fears, and habits with uncanny precision. The result? Psychological fatigue, information overload, and the slow death of serendipity—the joy of stumbling upon unexpected, transformative news.

| Pros of Hyper-Personalized News | Cons of Hyper-Personalized News |
|---|---|
| Razor-sharp relevance | Loss of surprise and diversity |
| Higher engagement | Information fatigue |
| Increased efficiency | Deepened echo chambers |
| Tailored learning curves | Reduced critical thinking |

Table 5: Pros and cons of hyper-personalized news feeds. Source: Original analysis based on multiple industry reports and Arcreactions, 2024.

The narrative is clear: more isn’t always better, and a little unpredictability can be a healthy antidote.

How regulation and public backlash are shaping the next era

Recent years have seen a sea change in regulation and user activism around news personalization. The EU’s Digital Services Act and the US’s proposed Algorithmic Accountability Act aim to force platforms to disclose how feeds are curated and offer users more control. Public pressure is mounting for algorithmic audits, redress for harm, and meaningful consent options.

Key regulatory terms:

- Algorithmic transparency: Platforms must disclose how their algorithms work.

- User opt-out: Users should be able to disable or limit personalization.

- Auditability: Third parties can review and test algorithmic systems for bias.

- Right to explanation: Users can demand to know why certain content was shown.

The next era will be shaped as much by civic action as by code.

Beyond news: personalization in other industries

Lessons from music, retail, and social media

News isn’t alone in the personalization arms race. Spotify’s Discover Weekly, Amazon’s “Recommended For You,” and Instagram’s Explore tab all use similar, sometimes more advanced, algorithms to predict your every desire.

Personalization has succeeded in music by surfacing forgotten gems; in retail by driving impulse buys; and in social media by multiplying engagement. But the pitfalls—bias, manipulation, and consumer fatigue—are universal.

Industry-specific personalization strategies:

- Music: Collaborative filtering based on listening habits.

- Retail: Dynamic pricing and product suggestions rooted in browsing history.

- Social media: Trending topics driven by real-time user activity and network effects.

- Streaming video: Genre blending and mood-based recommendations.

Each industry reveals both best practices and cautionary tales.

Cross-industry risks and opportunities

Bias, privacy concerns, and resistance to change are shared across sectors. But so are the tools for progress.

How to adapt successful personalization from other industries to news:

- Map user journeys: Identify key touchpoints for feedback and improvement.

- Incorporate explainable AI: Let users see and edit their preference profiles.

- Enable opt-outs: Always offer a “reset” or “randomize” option.

- Blend human and machine curation: Use editorial oversight to check algorithmic excesses.

- Regular audit cycles: Test for bias and diversity systematically.

Cross-pollination can spark new ideas—and expose hidden flaws.

What can news organizations learn from outside the media bubble? The courage to experiment, the humility to admit mistakes, and the commitment to building trust, not just clicks.

What news can teach the rest of the digital world

If there’s one lesson from the news feed wars, it’s that curation has become the new code. How we assemble, filter, and present information shapes everything from commerce to culture. As Priya, a digital strategist, quips:

"Curation is the new code—across every screen." — Priya, 2024

The ripple effects of news personalization will be felt everywhere algorithms shape our attention.

Conclusion: curating your reality in an age of AI news

Synthesis: what we learned—and what you can do next

News feed personalization isn’t just a feature—it’s a force quietly redefining your relationship with truth, culture, and self. The transition from human editors to AI-powered algorithms has amplified voices, accelerated access, and cut through digital static. But it has also narrowed horizons, deepened divides, and made your digital diet less your own than you might think.

User agency, transparency, and critical engagement are the only real antidotes. Control your feed, or risk letting it control you. As we’ve seen, the risks and rewards of algorithmic curation are inseparable—and the responsibility for balance now sits firmly in your hands.

Are you shaping your feed, or is it shaping you?

Quick reference: glossary of essential terms

- News feed personalization: The process of tailoring news content to individual user preferences using algorithms and user data.

- Filter bubble: An information environment where users only see content that reinforces their existing beliefs.

- Algorithmic curation: Automated selection and ranking of news stories based on engagement, relevance, and predicted interest.

- Collaborative filtering: A technique that recommends content based on the preferences of similar users.

- NLP (Natural Language Processing): AI methods that analyze and interpret human language in news content.

- Echo chamber: A closed information space amplifying similar viewpoints.

- Context collapse: Blurring of boundaries between social, professional, and private communication online.

- Auditability: The ability to review and verify algorithmic processes for fairness and accuracy.

Further reading and resources

Ready to dig deeper or take action? Consider these next steps:

- Explore innovative news personalization with newsnest.ai.

- Stay updated on algorithmic transparency via Reuters Institute Digital News Report.

- Try news readers with customizable feeds, such as Microsoft Edge’s news feed.

- Boost your digital literacy with Cyber Journalist’s guides.

- Try browser extensions like NewsGuard or Ground News for bias detection.

The future of news is yours to shape. Will you be the architect—or the product?

Sources

References cited in this article

- Cyber Journalist (cyberjournalist.net)

- Microsoft Edge (microsoft.com)

- Contentful (contentful.com)

- Quintype (blog.quintype.com)

- Arcreactions (arcreactions.com)

- Reuters Institute (reutersinstitute.politics.ox.ac.uk)

- Peukert et al., 2023 (research.cbs.dk)

- GMRU (gmru.co.uk)

- Reuters Institute Trends 2023/2024 (reutersinstitute.politics.ox.ac.uk)

- InfoTrust (infotrust.com)

- Proofpoint (proofpoint.com)

- Sift (sift.com)

- Search Engine Land (searchengineland.com)

- Pew Research (pewresearch.org)

- Forbes (forbes.com)

- Exploding Topics (explodingtopics.com)

- Medium (medium.com)

- Arena (arena.im)

- Politico (politico.com)

- Kansas Reflector (kansasreflector.com)

- NewsCatcher (newscatcherapi.com)

- LSE (lse.ac.uk)

- NPR (npr.org)

- IMF (imf.org)

- Tandfonline (tandfonline.com)

- ResearchGate (researchgate.net)

- AI WarmLeads (blog.aiwarmleads.app)

- Better News (betternews.org)

- Local Media Association (localmedia.org)

- Newswhip (newswhip.com)

- Bangor Daily News (bangordailynews.com)

- YouGov (today.yougov.com)

- Nature study summary (scitechdaily.com)

- Sage Journals (journals.sagepub.com)

- The Conversation (theconversation.com)
