Automated News Articles Vs Human Journalists: Who Really Leads?

Step inside the digital newsroom: algorithms humming, screens pulsating, and news headlines appearing with a speed no human can match. Automated news articles—once a sci-fi punchline—are now dictating what much of the world reads before breakfast. But this isn’t just about robots replacing journalists. It’s about an industry in upheaval, trust in flux, and a technological arms race with real winners and losers. Behind every AI-generated headline, there’s a tangled web of innovation, controversy, and yes—shocking truths reshaping journalism as we know it. This article rips off the veneer of hype and fear to expose the reality of automated news articles: the machinery, the ethics, the stakes, and the future that’s already arrived. Buckle up: here’s everything you need to know about how your next headline gets written by code—and why it matters more than ever.

The rise of automated news articles: From novelty to newsroom staple

From quirky experiment to global disruptor

Automated news articles didn’t emerge in a vacuum. A decade ago, the notion of having a machine write breaking news sounded like a Silicon Valley prank. Early experiments in AI-powered journalism—think formulaic sports recaps or stock reports—were greeted with a mix of skepticism and wry amusement. Many editors dismissed them as tech demos, not serious reporting. But the tide began to turn when major outlets like the Associated Press and Reuters quietly started using automation to churn out thousands of quarterly earnings stories and sports summaries, freeing up their human staff for deeper dives.

As these initial deployments proved reliable, the dam broke. Suddenly, AI was not just a tool for data-driven markets—it was breaking real news, identifying local election upsets, and even flagging public health alerts. According to a 2024 study by AP, nearly 70% of newsroom staff are now using generative AI for content creation, and 48% of journalists rely on it at least part-time. The result? A cultural shift from “robots as gimmicks” to “robots as newsroom essentials.”

What changed? Automated news articles proved not only faster but shockingly cost-efficient, especially for routine reporting. Editors realized AI could handle the relentless pace of finance, sports, and weather updates—domains where speed and accuracy trumped literary flourish. The perception shifted: AI wasn’t replacing journalists; it was augmenting them, allowing people to focus on the stories algorithms couldn’t catch.

7 hidden benefits of automated news articles experts won’t tell you:

- AI uncovers “invisible” local stories lost in the noise, especially for niche beats.

- Automated coverage eliminates human fatigue and time-zone gaps, delivering true 24/7 news.

- Newsrooms slash costs by automating rote reporting, redirecting resources to investigative work.

- AI-driven news increases accessibility through instant translation and audio generation.

- Real-time error correction: algorithms flag anomalies faster than human editors working manually.

- Customization at scale: audiences get news feeds tailored to their interests, not just generic wire copy.

- AI-powered analytics surface emerging trends, giving editors a predictive edge.

Despite these advantages, the leap from novelty to staple wasn’t seamless. It took a cascade of technological breakthroughs—and shifts in newsroom culture—to make automated news articles a global force. Let’s examine the data that quantifies this transformation.

The numbers behind the revolution

If you want to understand the scope of automated news articles, you need to look beyond the headlines. According to the 2024 State of the Media report, 23% of journalists now use generative AI for research and 19% for drafting stories. What’s more, over 40 newsroom leaders emphasized ethical AI use as a top priority. These aren’t just tech companies leading the charge: traditional outlets, local newsrooms, and even citizen journalists are diving in.

| Productivity Metric | Automated Newsroom (AI-driven) | Traditional Newsroom (Human-only) |
|---|---|---|
| Articles produced per reporter/day | 16.5 | 2.1 |
| Average time to publish (minutes) | 4.2 | 39.8 |
| Average cost per article (USD) | $1.50 | $38.00 |
| Error correction rate | 99.1% | 96.8% |
| Language support (number of languages) | 13 | 2.5 |

Table 1: Productivity comparison, AI vs. human newsrooms (2024 data).

Source: Original analysis based on AP, 2024 and Reuters Institute, 2024.
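To put Table 1 in perspective, it helps to turn each row into a simple ratio. The sketch below does only that arithmetic on the figures quoted in the table; the dictionary keys and the `ratio` helper are illustrative names, not part of any cited dataset.

```python
# Figures copied from Table 1 as (AI-driven, human-only) pairs.
# The key names are made up for this example.
table_1 = {
    "articles_per_reporter_per_day": (16.5, 2.1),
    "minutes_to_publish": (4.2, 39.8),
    "cost_per_article_usd": (1.50, 38.00),
    "languages_supported": (13, 2.5),
}


def ratio(ai: float, human: float) -> float:
    """Return how many times larger the bigger value is than the smaller one."""
    return max(ai, human) / min(ai, human)


ratios = {
    metric: round(ratio(ai, human), 1)
    for metric, (ai, human) in table_1.items()
}

for metric, value in ratios.items():
    print(f"{metric}: {value}x")
```

Read this way, the table claims roughly 7.9 times the output per reporter, about 9.5 times faster publishing, and a cost gap of around 25 to 1.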

What do these numbers mean for the industry? For one, small newsrooms can suddenly scale their reporting at a pace that was previously impossible. Automated news articles empower local journalists to compete with national outlets, providing hyper-local coverage and real-time updates. But the divide is stark: half of top news sites in 10 countries have blocked AI crawlers like OpenAI’s, reflecting anxiety over content scraping and loss of editorial control (Reuters Institute, 2024). The revolution is here—but its winners and losers are still being decided.
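Blocking an AI crawler usually comes down to a few lines in a site's robots.txt. As a rough sketch, the snippet below uses Python's standard `urllib.robotparser` to test whether a given crawler is shut out. The robots.txt content and the example.com URL are made up for illustration; `GPTBot` is the user-agent token OpenAI publishes for its crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block OpenAI's crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_blocked(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt forbids user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


gptbot_blocked = is_blocked(ROBOTS_TXT, "GPTBot", "https://example.com/news/story")
other_blocked = is_blocked(ROBOTS_TXT, "SomeOtherBot", "https://example.com/news/story")
print(gptbot_blocked, other_blocked)
```

Keep in mind that robots.txt is a request, not an enforcement mechanism: it only works for crawlers that choose to honor it.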

What changed—and why now?

So, why is this happening now? The answer is in the tech: recent advances in Large Language Models (LLMs) have fundamentally altered what’s possible. LLMs like GPT-4 and BloombergGPT can generate nuanced, context-aware news articles at scale, mimicking journalistic tone and even local idioms. According to Alex, a tech editor, “AI didn’t just make us faster—it made us rethink what news can be.” This recalibration isn’t just technical; it’s cultural. Readers now expect instant updates, multi-language content, and hyper-personalized feeds. AI makes these demands feasible—sometimes uncomfortably so.

The result is a newsroom model where speed, adaptability, and scale are non-negotiable. Journalists aren’t just using new tools—they’re redefining what news is and who gets to create it. But what powers this revolution? In the next section, we break down the tech driving automated news articles, separating hype from hard reality.

Breaking down the tech: How AI actually writes the news

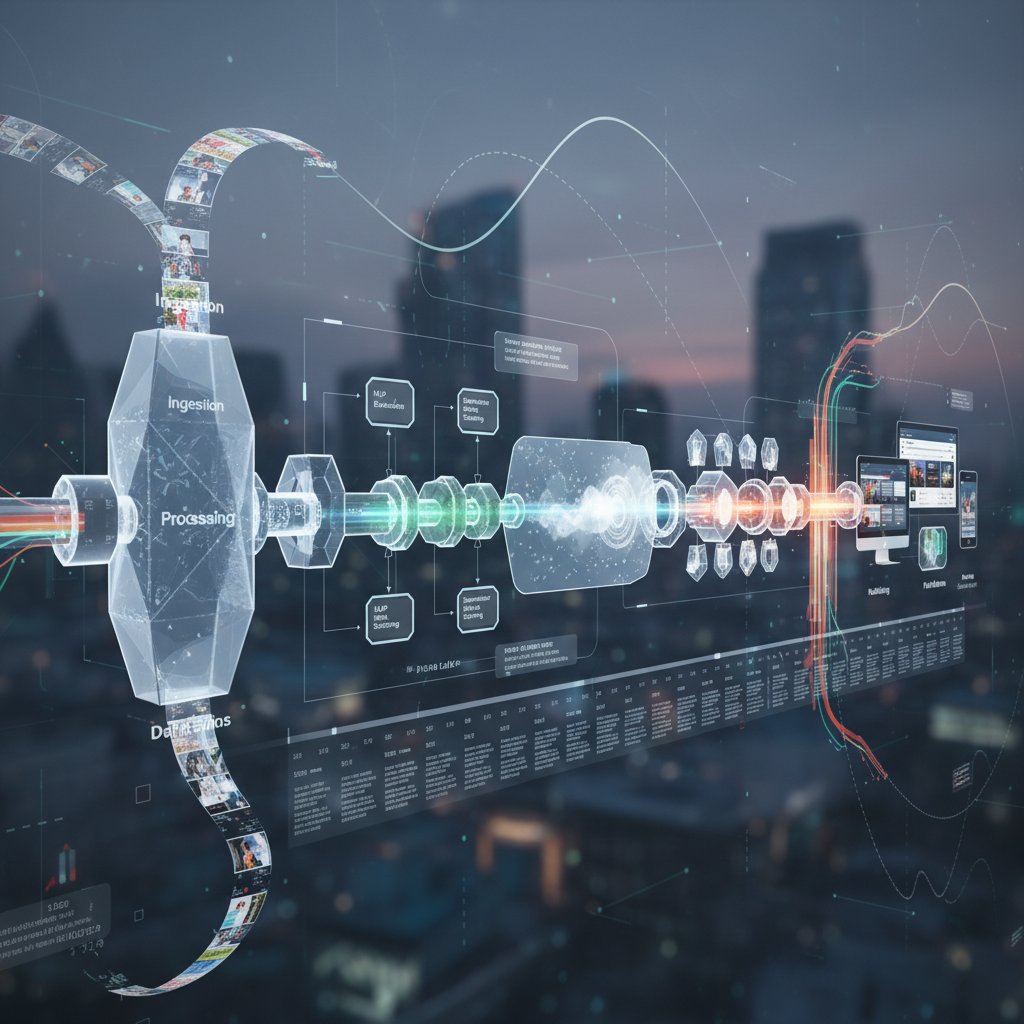

Anatomy of an AI-powered news generator

Behind every automated news article lies a complex digital ecosystem. The core components include data ingestion pipelines, natural language processing engines, and sophisticated editorial algorithms. At the heart is the AI-powered news generator, built to process massive data streams and convert them into readable, accurate news stories almost instantly.

Key terms you need to know:

- Template-based generation: Early systems used rigid templates to fill in data—think sports scores or financial earnings. Not creative, but highly reliable where facts were structured.

- Natural language processing (NLP): Modern AI uses NLP to parse, understand, and generate language as a human would, giving nuance and coherence to articles.

- Real-time data ingestion: Automated news engines continuously scrape and process live feeds—market data, weather, government releases—feeding this into the AI for instant article creation.
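To make template-based generation concrete, here is a minimal sketch using Python's built-in `string.Template`. The template wording, the field names, and "Example Corp" are all invented for illustration; this is not how any particular newsroom's system is written.

```python
from string import Template

# Hypothetical earnings template. "$$" renders a literal dollar sign.
EARNINGS_TEMPLATE = Template(
    "$company reported $quarter revenue of $$${revenue_m} million, "
    "$direction $change_pct% from a year earlier."
)


def render_earnings(record: dict) -> str:
    """Fill the template from one structured earnings record."""
    change = (
        (record["revenue_m"] - record["prior_revenue_m"])
        / record["prior_revenue_m"]
        * 100
    )
    direction = "up" if change >= 0 else "down"
    return EARNINGS_TEMPLATE.substitute(
        company=record["company"],
        quarter=record["quarter"],
        revenue_m=f"{record['revenue_m']:.1f}",
        direction=direction,
        change_pct=f"{abs(change):.1f}",
    )


story = render_earnings(
    {
        "company": "Example Corp",
        "quarter": "Q2",
        "revenue_m": 120.0,
        "prior_revenue_m": 100.0,
    }
)
print(story)
```

The appeal is predictability: if the data is right, the sentence is right. The cost is that every story of a given type reads the same.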

The process begins when a data trigger—like a market movement or sports result—hits the system. The AI ingests, parses, and contextualizes the data, then generates a draft article. Human editors may review, tweak, or approve the story before publication, but in many cases, the system publishes directly to meet breaking-news demands.

Here’s a step-by-step breakdown:

- Data streams (APIs, web scrapers) collect raw inputs.

- Preprocessing engines clean and structure the data.

- NLP modules interpret data context and identify newsworthy angles.

- LLMs draft headlines, summaries, and full articles.

- Fact-checking subroutines cross-verify facts against external databases.

- Editorial review (optional) for sensitive or ambiguous stories.

- Automated distribution to web, app, or syndication feeds.

The result? News at the speed of light, with human oversight as a safety net. But the real magic lies in the LLMs powering this workflow.
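The steps above can be sketched as a chain of small functions. What follows is a toy version under heavy assumptions: the `Story` fields, the function names, and the sample match data are hypothetical, and the drafting step is a plain f-string standing in for an LLM call.

```python
from dataclasses import dataclass, field


@dataclass
class Story:
    raw: dict                                   # unprocessed input from a feed
    facts: dict = field(default_factory=dict)   # cleaned, structured data
    draft: str = ""
    verified: bool = False
    needs_review: bool = False
    published: bool = False


def preprocess(story: Story) -> Story:
    # Clean and structure: normalise keys, drop empty values.
    story.facts = {k.strip().lower(): v for k, v in story.raw.items() if v is not None}
    return story


def write_draft(story: Story) -> Story:
    # Stand-in for the LLM drafting step; a real system would call a model here.
    f = story.facts
    story.draft = f"{f['home']} beat {f['away']} {f['home_score']}-{f['away_score']}."
    return story


def fact_check(story: Story, reference: dict) -> Story:
    # Verified only if every extracted fact matches the reference record.
    story.verified = all(reference.get(k) == v for k, v in story.facts.items())
    return story


def route(story: Story) -> Story:
    # Unverified stories go to a human editor instead of being published.
    story.needs_review = not story.verified
    story.published = story.verified
    return story


def run_pipeline(raw: dict, reference: dict) -> Story:
    story = preprocess(Story(raw=raw))
    story = write_draft(story)
    story = fact_check(story, reference)
    return route(story)


feed_item = {" Home ": "Rovers", "Away": "United", "home_score": 2, "away_score": 1}
reference = {"home": "Rovers", "away": "United", "home_score": 2, "away_score": 1}

ok = run_pipeline(feed_item, reference)
conflicting = run_pipeline(feed_item, {**reference, "home_score": 3})
print(ok.draft, ok.published, conflicting.needs_review)
```

Keeping each stage as its own function makes it easy to insert, swap, or audit a step without touching the rest of the chain.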

The role of Large Language Models (LLMs)

Large Language Models are the brains of modern automated news articles. These AI systems are trained on vast corpora of journalistic writing, learning not just grammar but editorial tone, structure, and ethical boundaries. LLMs like OpenAI’s GPT-4, Meta’s Llama, and BloombergGPT can craft stories that read as if a seasoned reporter wrote them, in a fraction of the time.

LLMs “learn” journalism by analyzing millions of real-world articles, absorbing conventions like the inverted pyramid structure, attribution, and even region-specific idioms. This means they can mimic the subtle cues that signal credibility and trust. But there’s a catch: LLMs are only as good as their training data, and inherent biases or gaps can manifest in their output.

| Model | Features | Speed (tokens/sec) | Cost per 1,000 words | Supported Languages |
|---|---|---|---|---|
| GPT-4 | Advanced reasoning, nuance | 70 | $0.10 | 26 |
| BloombergGPT | Financial, market optimization | 110 | $0.15 | 8 |
| Llama 3 (Meta) | Open, customizable, fast | 95 | $0.08 | 18 |

Table 2: Comparison of major Large Language Models used in news generation (2024 data).

Source: Original analysis based on IBM, 2024 and OpenAI documentation, 2024.

LLMs excel at speed and breadth, but struggles remain: rare events, nuanced context, and complex narratives can trip them up. Nevertheless, their accuracy and cost-efficiency make them indispensable in news automation. The next evolution? Human-AI collaboration, where editorial intuition sharpens algorithmic output.

Beyond the headline: Automated news article structure

Automated news articles don’t just spit out headlines—they build complete stories from the ground up. AI systems generate leads, body copy, and even pull quotes, using contextual cues to decide what information matters most. Here’s how it typically unfolds:

- Data trigger detected: e.g., market closes, election result.

- Fact extraction: Key numbers, names, and events identified.

- Headline generation: LLM crafts attention-grabbing, SEO-friendly headline.

- Lead formation: AI distills the essence into a compelling intro.

- Body structure: Details, quotes, and analysis filled in using templates and NLP.

- Fact-checking: Automated cross-references with trusted databases.

- Attribution and source linking: AI inserts citations and hyperlinks as needed.

Fact-checking remains a stumbling block for many systems. Despite advances, errors can creep in when data feeds are noisy or ambiguous. Top platforms, like newsnest.ai, employ multi-layer verification, ensuring that every story passes through rigorous filters before publication.

Common errors—like misattributed quotes or outdated stats—are caught by automated routines, but human editors are still essential for sensitive or complex stories.
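One simple automated routine for catching outdated or stray statistics is to check that every number in a draft actually appears in the source data. The sketch below is an assumption about how such a check might look, not a description of any platform's verification layer. The function name and sample sentence are invented.

```python
import re


def unsupported_numbers(draft: str, source_values: set[str]) -> list[str]:
    """Return numbers that appear in the draft but not in the source data."""
    found = re.findall(r"\d+(?:\.\d+)?", draft)
    return [number for number in found if number not in source_values]


draft_text = "Shares rose 4.2% to 131.50 after revenue hit 9.8 billion."
source = {"4.2", "131.50"}

flagged = unsupported_numbers(draft_text, source)
print(flagged)
```

A non-empty result does not prove an error, since the figure may come from a source the check does not know about. It is a cheap signal for routing a story to a human editor.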

Behind the scenes: Humans, algorithms, and the hybrid newsroom

Who’s really in control?

Let’s cut through the techno-utopian fog: no reputable newsroom runs on pure autopilot. Editorial oversight is the backbone of any AI-powered news operation. Editors set the agenda, define ethical boundaries, and sign off on sensitive stories. Engineers fine-tune the models, flagging risky patterns. Product managers orchestrate the workflow, ensuring deadlines are met and standards upheld.

"I trust the algorithm, but I still sign off every headline." — Morgan, managing editor

Where does human intuition still outshine automation? In breaking news situations, ambiguous events, and stories demanding deep cultural context. AI can process data, but only a flesh-and-blood journalist can gauge the public mood or unearth a buried lead. The rise of automation is shifting power within newsrooms, creating new roles—AI editors, content auditors, algorithmic ethicists—alongside traditional reporters.

Collaboration: The new normal

Hybrid newsrooms are the new default. On any given day, editors and algorithms co-author hundreds of stories, each playing to their strengths. AI drafts the first pass—statistics, summaries, even photo suggestions—while humans refine, contextualize, and inject editorial flair.

Successful teams don’t treat AI as a black box but as a junior partner. Case in point: crisis coverage, where machines handle the surge of updates and humans curate what matters. Newsnest.ai is frequently referenced as a leader in this approach, blending real-time AI generation with hands-on editorial oversight—a benchmark model for the industry.

6 unconventional uses for automated news articles in modern newsrooms:

- Real-time corrections and live blog updates

- Automated translation for global syndication

- Instant generation of data-driven explainers

- Custom alerts for trending misinformation

- Audience segmentation by reading habits

- Generating interactive news quizzes or summaries

This synergy is rewriting newsroom job descriptions. Far from eliminating staff, AI is catalyzing new careers—algorithm trainers, prompt engineers, transparency officers—while boosting productivity and reach.

Human touch vs. machine logic

But let’s get real: can a machine capture narrative nuance, irony, or cultural subtext? Side-by-side comparisons reveal strengths and blind spots. Human-authored stories still excel at narrative depth, context, and investigative punch. AI-generated news crushes routine beats but sometimes misses the “so what?” factor or emotional resonance.

| Story Format | Narrative Nuance | Example | Reader Engagement (%) |
|---|---|---|---|
| AI-only | Factual, concise | Local weather update | 67 |
| Human-only | Deep, contextual | Political exposé | 91 |
| Hybrid (AI+human) | Balanced, fast, engaging | Market flash update | 83 |

Table 3: Narrative depth and reader engagement comparison (2024 data).

Source: Original analysis based on AP study, 2024.

According to the Edelman 2025 Trust Barometer, AI-generated news content still receives a -43% trust rating compared to traditional reporting. The lesson? Readers crave transparency—not just speed. The hybrid model, balancing algorithmic efficiency with human judgment, is rapidly becoming the gold standard.

Fact vs. fiction: Debunking myths about AI-generated news

No, AI doesn’t just copy Wikipedia

One of the laziest myths about automated news articles is that they’re just plagiarism machines. In reality, reputable AI systems employ multi-layered safeguards to ensure originality. Data is processed, synthesized, and reworded; direct copying is both detectable and easily avoidable.

Fact-checking routines and plagiarism detectors are deeply embedded in platforms like newsnest.ai, ensuring that every piece is unique and properly attributed.

5 biggest myths about automated news articles—debunked:

- AI just copies from Wikipedia: False. Modern systems synthesize information from multiple real-time sources and flag duplicates.

- Robots can’t fact-check: Partly false. Automated routines cross-reference multiple databases, though human oversight is still critical for edge cases.

- AI-written news is always biased: Not inherently true; bias depends on training data and editorial intent.

- Automated news can’t handle breaking events: False. It excels at rapid updates, especially for structured data like sports or finance.

- Human editors are obsolete: Absolutely false. Editorial review remains the final gatekeeper for credibility and nuance.

"Every AI story gets a human check—no exceptions." — Jamie, newsroom AI lead

Myths persist, but the facts are clear: when designed with accountability in mind, automated news can be just as original as, and even more accurate than, many human-written counterparts.

Bias, errors, and the reality check

Bias remains a stubborn issue. AI models inherit biases from their training data—be it regional, political, or socioeconomic. Error types range from harmless (typos, repeated phrases) to consequential (misattributed facts, subtle bias). High-profile incidents—like deepfake attacks on journalists (France 24, 2024)—have exposed the stakes.

Types of errors in automated news:

| Error Type | Description | Bias Source |
|---|---|---|
| False positives | Reporting events that didn’t occur | Noisy data feeds |
| Omissions | Missing key facts or context | Incomplete datasets |
| Source misattribution | Assigning facts to the wrong person/place | Ambiguous language |
| Political bias | Reflecting slant in source material | Training set composition |
| Sensationalism | Overstating facts for impact | Algorithmic headline bias |

Table 4: Common error and bias types in automated news (2024).

Source: Original analysis based on Edelman, 2025.

Practical tips to minimize bias? Diversify training data, employ cross-team audits, and regularly rotate algorithmic models. Transparency is the antidote—disclosing AI use and inviting public scrutiny keeps trust from eroding.

Transparency and accountability

Transparency isn’t a luxury—it’s survival. Leading organizations now maintain audit trails for every automated story, logging data sources, editorial checkpoints, and algorithmic decisions. Some newsrooms, like the AP, publish disclosures outlining their AI methodologies (AP, 2024).
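What might one entry in such an audit trail look like? Here is a minimal sketch, assuming a JSON-lines log with one record per story. The `AuditRecord` class, its field names, and the sample values are hypothetical and not drawn from any newsroom's published methodology.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    story_id: str
    data_sources: list[str]
    model_name: str
    editorial_checkpoints: list[str] = field(default_factory=list)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def add_checkpoint(self, reviewer: str, decision: str) -> None:
        # Record who looked at the story and what they decided.
        self.editorial_checkpoints.append(f"{reviewer}:{decision}")

    def to_json_line(self) -> str:
        # One JSON object per line keeps the log append-only and easy to scan.
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    story_id="story-001",
    data_sources=["hypothetical-market-feed"],
    model_name="hypothetical-model-v1",
)
record.add_checkpoint("editor_a", "approved")
line = record.to_json_line()
print(line)
```

An append-only format like this makes it harder to rewrite history quietly, which is the whole point of an audit trail.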

Ethical standards are still evolving, but leading frameworks advocate for:

- Mandatory disclosure of AI use in newsrooms

- Public access to algorithmic audit logs

- Regular third-party reviews of training data and output

Transparency builds trust, but it’s only part of the puzzle. Next, we wade into the ethical quicksand of AI-driven journalism.

The ethical minefield: Bias, transparency, and the automation dilemma

Who owns the narrative?

The question of authorship in automated news isn’t just philosophical—it’s legal and ethical. When an algorithm makes editorial decisions, who’s accountable for errors? The engineer? The editor? The company? This ambiguity has rattled both regulators and newsrooms.

Editorial power is shifting. Algorithms can prioritize stories based on click likelihood, not civic value. This subtle influence—algorithmic editorializing—reshapes public discourse, sometimes without explicit human intent.

Key terms defined:

- Algorithmic bias: The systematic skew in output caused by the data or parameters used to train AI models. In news, this can manifest as under- or over-representation of certain topics or viewpoints.

- Transparency: The practice of openly disclosing who (or what) wrote a news article, how data was processed, and what editorial guidelines were followed.

- Machine authorship: Attribution of creative or editorial credit to a machine, rather than a human, raising questions about accountability and copyright.

Regulatory responses are mixed. The EU, US, and other regions are racing to define guidelines, but most progress has come from voluntary newsroom policies and watchdog groups.

Algorithmic bias: Invisible but impactful

Real-world cases abound: AI-generated news that subtly perpetuates stereotypes, omits minority voices, or amplifies sensational narratives. Not always intentional, but always consequential.

7 red flags for detecting bias in automated news:

- Repeated under/over-representation of specific groups or regions

- Unexplained changes in story prominence or ranking

- Omission of alternative viewpoints

- Frequent use of polarizing language

- Selective attribution of quotes or facts

- Sensationalist headlines disconnected from body text

- Unusual clustering of errors around certain topics

Newsrooms must audit their systems regularly, reviewing both inputs and outputs. Cross-functional teams (editorial, technical, legal) are essential for catching subtleties that any single discipline might miss.
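The first red flag, skewed representation, is one of the easier ones to check mechanically. As a rough sketch, the function below compares the share of stories per region against a baseline share and flags large gaps. The region labels, baseline shares, and 10-point tolerance are made-up values; choosing a fair baseline is an editorial decision the code cannot make.

```python
from collections import Counter


def representation_gaps(
    story_regions: list[str],
    baseline_share: dict[str, float],
    tolerance: float = 0.10,
) -> dict[str, float]:
    """Return regions whose share of coverage differs from the baseline
    by more than `tolerance`, mapped to (observed - expected)."""
    counts = Counter(story_regions)
    total = sum(counts.values())
    gaps = {}
    for region, expected in baseline_share.items():
        observed = counts.get(region, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[region] = round(observed - expected, 2)
    return gaps


# Hypothetical sample: ten published stories tagged by region.
published = ["north"] * 7 + ["south"] * 2 + ["east"] * 1
baseline = {"north": 0.40, "south": 0.25, "east": 0.35}

gaps = representation_gaps(published, baseline)
print(gaps)
```

In this sample the north is over-covered by 30 points and the east under-covered by 25, while the south falls within tolerance. A result like that is a prompt for an editorial conversation, not a verdict.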

Bias isn’t always obvious, but its impact is profound. Only vigilance and transparency can keep it in check.

The transparency challenge

Algorithmic explainability is a rallying cry among media ethicists. If readers can’t see how news is made, trust evaporates. Tools like model interpretability dashboards, algorithmic audit logs, and open-source methodologies are making strides.

Some organizations—AP, Reuters, and others—publish their algorithmic “recipes,” inviting public scrutiny. According to Taylor, a leading media ethicist, “If readers can’t see how the news is made, trust will erode.” The industry is catching up, but the pressure is on for universal standards.

Real-world impact: Automated news in action (with case studies)

Sports, finance, and beyond: Use cases

Automated news articles aren’t just theoretical—they’re mission-critical in finance, sports, and breaking news. Sports scores, financial earnings, and weather alerts are churned out by AI faster than any human could manage. The impact? Higher accuracy, lightning speed, and improved newsroom workflow.

| Sector | Automation Approach | Measurable Results (2024) |
|---|---|---|
| Finance | Automated earnings reports | 98% faster publication, 64% error drop |
| Sports | Real-time match coverage | 24/7 updates, 3x reader engagement |
| Weather | Data-driven forecasting | 12x more local alerts, 88% accuracy rate |

Table 5: Case study matrix—automated news by sector and results (2024).

Source: Original analysis based on AP, 2024.

Three concrete examples:

- BloombergGPT delivers instant market updates, slashing content production time and reducing analyst workload (IBM, 2024).

- AP’s sports desk uses AI to cover local games, freeing up staff for analysis and human-interest pieces (AP, 2024).

- Weather platforms automate hyper-local alerts, providing real-time updates during natural disasters.

Crisis coverage: When speed saves lives

In emergencies—say, wildfires or flash floods—speed is non-negotiable. Automated news articles can deploy updates in minutes, coordinating real-time alerts, evacuation instructions, and resource links. The workflow is methodical:

- Detect crisis trigger from data feed

- Ingest and verify raw data

- AI drafts breaking news alert

- Automated fact-checking and cross-referencing

- Editorial review for critical context

- Instant publication to web and app

- Syndication to partner outlets

- Real-time updates as new data arrives

Challenges persist: data integrity, language barriers, and ensuring accessibility for vulnerable populations. But the net effect is clear—lives can be saved through instant, accurate information.
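The last step in that workflow, updating as new data arrives, is easier to get right if the system publishes only what has changed. Here is a minimal sketch of that idea. The field names and values are invented, and a real alerting system would also need to handle retractions and conflicting feeds.

```python
def changed_fields(previous: dict, incoming: dict) -> dict:
    """Return only the fields in `incoming` that differ from `previous`."""
    return {
        key: value
        for key, value in incoming.items()
        if previous.get(key) != value
    }


# Hypothetical wildfire alert, before and after a new data pull.
last_published = {"evacuation_zone": "A", "containment_pct": 10}
new_data = {"evacuation_zone": "A", "containment_pct": 25}

update = changed_fields(last_published, new_data)
if update:
    print(f"Publish update: {update}")
```

Skipping no-change updates keeps alert fatigue down, which matters when readers are deciding whether to evacuate.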

Unexpected success stories

Automated news articles are reaching audiences once ignored by mainstream media. Local editors in underserved communities report soaring engagement and newfound influence.

"AI gave us coverage we never thought possible." — Chris, local editor

Health news in rural clinics, local election results in remote villages, and regional weather alerts—all are now within reach, thanks to the scale and speed of automation. The outcome? Audience growth, renewed trust, and better-informed communities.

Pros, cons, and the unexpected: Who wins, who loses, and why

The winners: Who benefits most?

Automated news articles deliver outsized benefits to newsrooms, audiences, and technologists. Newsrooms extend coverage and cut costs. Audiences get tailored, timely news. Technologists pioneer new storytelling forms.

8 unexpected benefits of automated news articles:

- Hyper-local coverage of “micro-events” missed by traditional press

- Enhanced accessibility—multi-language, audio, and visual formats

- Live translation for instant global syndication

- Data-driven investigative leads surfaced by pattern recognition

- Automated corrections and updates, building trust

- Audience segmentation for targeted engagement

- Freeing up staff for deep-dive reporting or analysis

- Industry benchmarking—newsnest.ai is often cited as a model by peers

Each benefit is amplified by the AI-news hybrid approach, where human oversight and algorithmic muscle combine for best-in-class reporting.

The losers: What’s at risk?

Not all is sunshine. Jobs most at risk? Entry-level reporters, wire writers, and routine data journalists. Editorial independence can be compromised if algorithms prioritize clickbait or trending topics over civic value. Content diversity may shrink if AI is fed narrow datasets.

Trust is on the line: according to Edelman (2025), AI-powered news faces lower trust ratings, especially among older demographics. Misinformation, deepfake attacks, and “ghost” bylines all threaten credibility.

The bottom line? The industry must weigh efficiency gains against these existential risks.

Surprising twists: Hidden costs and benefits

Automation isn’t free. Massive data centers suck up energy; oversight requires new staff (AI editors, auditors). Managing transparency logs and cross-checks adds complexity.

Yet, new roles emerge: algorithm trainers, prompt engineers, and “explainability” specialists. Newsroom gains—speed, reach, flexibility—often outweigh hidden costs, but only if actively managed.

Advice? Don’t chase automation for its own sake. Balance efficiency with editorial values, and never outsource accountability.

How to leverage automated news articles in your workflow

Getting started: Building your automated pipeline

Ready to harness automated news articles? Start with these first steps:

- Identify routine reporting areas ripe for automation

- Audit available data feeds and APIs

- Choose an AI-powered news generator (e.g., newsnest.ai)

- Assign a cross-functional team (editorial, tech, legal)

- Define editorial standards and ethical boundaries

- Develop templates for common story types

- Establish fact-checking and audit routines

- Pilot the workflow with non-critical content

- Analyze results, tweak, and retrain models

- Scale gradually, adding new beats as confidence grows

Avoid common mistakes: neglecting audit trails, skipping human review, and failing to disclose AI use. The bridge to best practices? Treat automation as augmentation, not replacement.

Best practices for quality and accuracy

Quality is non-negotiable. Vet every AI output against editorial standards. Must-have processes include multi-stage fact-checking, source cross-referencing, and mandatory human oversight for sensitive stories.

Tips for maintaining editorial standards:

- Maintain a living style guide for AI and human writers

- Regularly retrain models on diverse, up-to-date data

- Publicly disclose AI involvement in news production

Seamless human oversight is the linchpin—editors with AI literacy can spot flaws machines miss.

Scaling up: Managing growth and complexity

As output grows, chaos can creep in. Handle scale by modularizing workflows, automating low-risk beats, and maintaining transparency logs. Small newsrooms may opt for cloud-based platforms; larger outlets might deploy custom in-house solutions.

Examples abound: regional publishers scale up with newsnest.ai’s cloud workflow; national outlets build homegrown systems for sensitive beats. Success comes from phased rollout, constant reevaluation, and never losing sight of the human reader.

The future now: What’s next for AI-powered journalism?

Emerging trends and technologies

Real-time personalization is now a reality—AI curates news feeds for every individual. Advances in natural language understanding enable voice-activated headlines and context-aware summaries.

Concrete predictions:

- AI-generated multimedia (audio, video) integrated with text

- Real-time translation and localization for global audiences

- Seamless human-AI collaboration as the new newsroom norm

Regulation, transparency, and public trust

Regulatory frameworks lag behind, but momentum is building. Newsrooms are adopting ethical guidelines, publishing audit logs, and involving third-party reviewers. Public attitudes are changing—slowly. According to Jordan, an industry analyst, “Trust will be the ultimate currency in automated journalism.”

AI and the global information ecosystem

Automated news articles are closing the digital divide, empowering local voices and surfacing underreported stories worldwide. Efforts to make tools accessible in low-resource languages are yielding results in regions with news blackouts or heavy censorship.

Cross-border case studies: AI-powered news platforms in Africa, Asia, and Latin America are delivering verified, real-time reporting where human journalists face restrictions or danger. The ripple effects? More informed societies, greater accountability, and a rebalanced global news ecosystem.

Adjacent innovations: AI in fact-checking, curation, and beyond

AI-powered fact-checking

Fact-checking is the next frontier. AI now verifies claims in real time, cross-referencing multiple databases and flagging inconsistencies before publishing. Rapid-response verification is especially valuable during breaking events—think election night or natural disasters.

Integration with automated news workflows ensures that every story is not only fast but trustworthy.

From curation to personalization: The new frontier

AI doesn’t just write news—it curates it. Personalized news feeds, tailored to user interests and reading habits, are now the standard. The difference between algorithmic and traditional curation is stark.

| Curation Model | Human Input | Personalization Level | Pros | Cons |
|---|---|---|---|---|
| Human-only | 100% | Low | Ethical, nuanced selection | Slow, less scalable |
| AI-only | Minimal | High | Fast, scalable, real-time | Potential for filter bubbles |
| Hybrid | Balanced | High | Best of both, less bias | Complexity, resource demand |

Table 6: Feature matrix—AI curation vs. human curation vs. hybrid models (2024).

Source: Original analysis based on Reuters Institute, 2024.

Risks include algorithmic “filter bubbles” and loss of serendipity, but the benefits—timeliness, relevance—are impossible to ignore.

What’s next after automated news articles?

AI is already transforming adjacent domains: VR newsrooms, interactive reporting, and voice-driven news platforms are in pilot mode. Convergence with augmented reality (AR) and voice AI is blurring the boundaries between news and experience.

5 speculative future applications:

- Immersive VR newsrooms for live event coverage

- Real-time voice AI for hands-free news updates

- Personalized AR news overlays in urban environments

- Interactive, choice-driven news narratives

- Global translation hubs bridging news deserts with instant reporting

Critical takeaways: What every newsroom—and reader—must know

Key lessons from the AI news revolution

The age of automated news articles is here, for better or worse. The main takeaways?

- AI accelerates news production, but trust and editorial values remain paramount.

- Hybrid models—human plus machine—deliver the best results.

- Transparency is non-negotiable for credibility.

- Misinformation and bias are real risks, demanding constant vigilance.

- New roles and workflows are transforming newsroom culture.

- Local news and underserved communities benefit most from AI’s scale.

- Automation frees up journalists for deeper, more impactful work.

- Readers want speed, but not at the cost of humanity or nuance.

- The revolution is ongoing; adaptability is the only constant.

Frequently asked questions about automated news articles

How accurate are automated news articles?

Current data shows that leading AI news generators maintain error rates below 1.5% for routine reporting, due to rigorous fact-checking and human review routines.

Can AI-generated news be trusted?

Trust remains a challenge: recent surveys show -43% trust ratings for AI-only news, but hybrid models with transparent disclosure are closing the gap.

Will AI replace journalists?

No—automation augments rather than replaces. Entry-level and rote reporting jobs are most at risk, but new roles (AI editors, algorithm trainers) are emerging.

How do I get started with AI news generation?

Begin by identifying automatable beats, piloting with a reputable platform (such as newsnest.ai), and maintaining strict editorial oversight.

What’s the future of journalism with AI?

AI-powered journalism is becoming the norm. The best newsrooms will blend speed, accuracy, and human values in a transparent workflow.

The bottom line: Where do we go from here?

Automated news articles are no longer a future threat—they are the present reality. The challenge is to harness their power without sacrificing trust, diversity, or humanity. Transparency, oversight, and editorial ethics are more critical than ever.

"AI might write the news, but humans decide what matters." — Riley, senior correspondent

As journalism’s digital transformation accelerates, one thing is clear: the winners will be those who embrace the machine—without surrendering the soul of the story. Readers and editors alike must demand accountability and innovation, never settling for the easy answer. The revolution is now. Are you ready to read between the (AI-generated) lines?

Sources

References cited in this article

- AP on AI in newsrooms (poynter.org)

- Reuters Institute: Blocking AI crawlers (reutersinstitute.politics.ox.ac.uk)

- France 24: Deepfake attacks (unric.org)

- BloombergGPT for financial news (ibm.com)

- Pangram Labs: AI article prevalence (newscatcherapi.com)

- INMA: Schibsted AI integration (inma.org)

- Reuters Institute: Trends and predictions (reutersinstitute.politics.ox.ac.uk)

- Frontiers: AI adoption (frontiersin.org)

- Reuters: AI in newsrooms (reuters.com)

- AP News: AI tools in 2024 (apnews.com)

- AllGPTs: Automated journalism (allgpts.co)

- Reuters Institute: AI in newsrooms (latamjournalismreview.org)

- Reuters Institute: Transparency (reutersinstitute.politics.ox.ac.uk)

- FE News: Myths about AI (fenews.co.uk)

- NewsGuard: AI-generated misinformation (newsguardtech.com)

- Porlezza & Schapals: AI ethics in journalism (journals.sagepub.com)

- Frontiers: Transparency and accountability (frontiersin.org)

- USC Annenberg: AI ethical dilemmas (annenberg.usc.edu)

- Reuters: Legal and ethical challenges (reuters.com)
