AI Writing Assistant Journalism Is Rewriting Trust in News

There's a new beast pounding on the doors of every newsroom: AI writing assistant journalism. Whether you’re a hard-nosed editor, digital publisher, or just a reader glued to real-time updates, you can’t ignore the seismic changes. News isn’t just written by humans anymore—it’s spun, stitched, and served up by powerful algorithms that never sleep, never unionize, and never get writer’s block. In a world obsessed with speed and scale, the question isn’t if you’ll read an AI-generated story—it’s how often you already have. This deep dive peels back the curtain on the gritty realities, secret perks, and existential risks of letting machines craft the news. You’ll get the 13 bold truths and uncomfortable surprises rocking journalism from the inside out. Buckle up: after this, you’ll never see a byline the same way again.

The newsroom disruptor: How AI writing assistants invaded journalism

A brief, wild history of automated journalism

Long before sleek LLMs and generative AI headlined tech conferences, automated journalism was simmering in the background. The 1980s saw primitive stock market bots churning out dry earnings reports, the kind only an actuary could love. By the early 2000s, outlets like the Associated Press began experimenting with automated corporate earnings stories—efficient but soulless. The 2010s brought a quantum leap: machine learning, natural language processing, and the first clunky attempts at creative sports recaps. Today, AI-powered news generators like those at newsnest.ai blend real-time data with language models, doing everything from breaking news alerts to feature-length explainers.

Alt text: Early days of automated journalism with AI influence, showing vintage newsroom technology and digital disruption.

| Year | Milestone | Technology/Breakthrough |
|---|---|---|
| 1984 | First financial news bots | Rule-based automation |
| 2005 | AP automates earnings stories | Simple natural language templates |
| 2012 | Narrative Science launches Quill | Machine learning, NLP |
| 2015 | Sports and weather automation | Dynamic data-driven text generation |
| 2020 | LLMs enter the newsroom | Transformer-based AI (GPT-3, etc.) |
| 2023 | AI-powered breaking news | Real-time LLMs paired with data feeds |
| 2025 | Hybrid newsrooms dominate | Seamless human-AI collaboration |

Table 1: Timeline of AI-powered news generator evolution, from early bots to LLM-driven journalism. Source: Original analysis based on Statista, 2024, Reuters Institute, 2024

Automated journalism didn’t erupt overnight—it crept in, line by line, until entire newsrooms woke up to a new reality: the machine isn’t just your intern; it’s your competitor.

Why newsrooms embraced AI—with eyes wide shut

It wasn’t just tech lust that drew publishers to AI writing assistant journalism. Newsrooms, battered by shrinking budgets and a 24/7 news cycle, were desperate for efficiency. Editors saw the promise: churn out more stories, in more verticals, faster than any human team ever could. The stats are brutal—AI adoption in journalism soared 30% year-over-year from 2019 to 2023, with 67% of global media organizations deploying AI tools by 2023 (Statista, 2024).

But there were quieter, almost taboo, advantages that few wanted to say out loud:

- Speed that destroys deadlines: AI-generated articles can hit publish before a coffee run is done.

- Cost-cutting on steroids: One model can replace dozens of freelancers or wire services.

- Infinite reach: Multilingual, multi-format, always-on distribution was suddenly within reach.

- New storytelling formats: From interactive explainers to dynamic graphics, AI isn’t stuck in the old print box.

- Hyper-personalization: Content tailored by region, topic, or even individual reader profiles.

For all the giddy optimism, there was a collective, willful blindness. The risks—algorithmic bias, hallucinated facts, and credibility landmines—got swept under the rug in the stampede for scale. As one digital editor put it, “We wanted to believe the hype more than we wanted to ask hard questions.”

Meet the new boss: How LLMs write news (and why it matters)

So, how does an LLM (Large Language Model) actually write the news? It starts with mountains of data—billions of words, articles, and digital footprints fed into neural networks. When you prompt these models (think: “Write a 200-word breaking news article on the latest earthquake in Tokyo”), the AI assembles language patterns, draws on recent data, and generates a story that reads uncannily human. Editorial AI can be fine-tuned for tone, style, and even ethical constraints.

Large Language Model. A neural network trained on vast corpora of text, capable of generating, summarizing, and understanding natural language at scale. GPT-4, Claude, and their ilk set the standard.

Prompt engineering. The art (and science) of crafting effective queries or “prompts” to guide AI output. The better the prompt, the sharper the news copy.

Editorial AI. Systems designed to mimic editorial decision-making: choosing sources, framing stories, even flagging ethical red flags.

The technical workflow looks like this:

- Data is scraped or fed in real-time—stock prices, weather feeds, election results.

- Editors (human or automated) draft a prompt: “Summarize the latest market drop for retail investors.”

- The LLM generates a draft, which may be auto-published or sent to a human for review.

- Optional: Fact-checking algorithms or human editors audit the story before it goes live.

This process means news can break at machine speed—but it also means the line between reporting and algorithmic remixing is thinner than ever.
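The workflow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's implementation: `fake_llm` is a hypothetical stand-in for whatever model call a newsroom actually uses, and the field names (`audience`, `subject`, `change_pct`) are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    prompt: str
    text: str
    status: str = "needs_review"  # nothing is publishable until reviewed


def build_prompt(feed_item: dict) -> str:
    """Turn one structured data point into an editor-style prompt."""
    return (
        f"Summarize for {feed_item['audience']}: "
        f"{feed_item['subject']} moved {feed_item['change_pct']:+.1f}% today."
    )


def run_pipeline(feed_item: dict, generate_draft: Callable[[str], str]) -> Draft:
    """Data in, prompt built, draft generated, then held for review."""
    prompt = build_prompt(feed_item)
    return Draft(prompt=prompt, text=generate_draft(prompt))


def approve(draft: Draft, reviewer: str) -> Draft:
    """Human sign-off is the only path to an approved status in this sketch."""
    draft.status = f"approved_by:{reviewer}"
    return draft


# Hypothetical stand-in for a real model call (assumption, not a real API).
def fake_llm(prompt: str) -> str:
    return f"[DRAFT] {prompt}"


item = {"audience": "retail investors", "subject": "the index", "change_pct": -2.3}
draft = run_pipeline(item, fake_llm)
print(draft.status)
print(approve(draft, "desk_editor").status)
```

The design choice worth noticing is the default status: a draft starts life as `needs_review`, so auto-publishing has to be a deliberate decision rather than the path of least resistance.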

Fact or fiction? Debunking myths about AI journalism

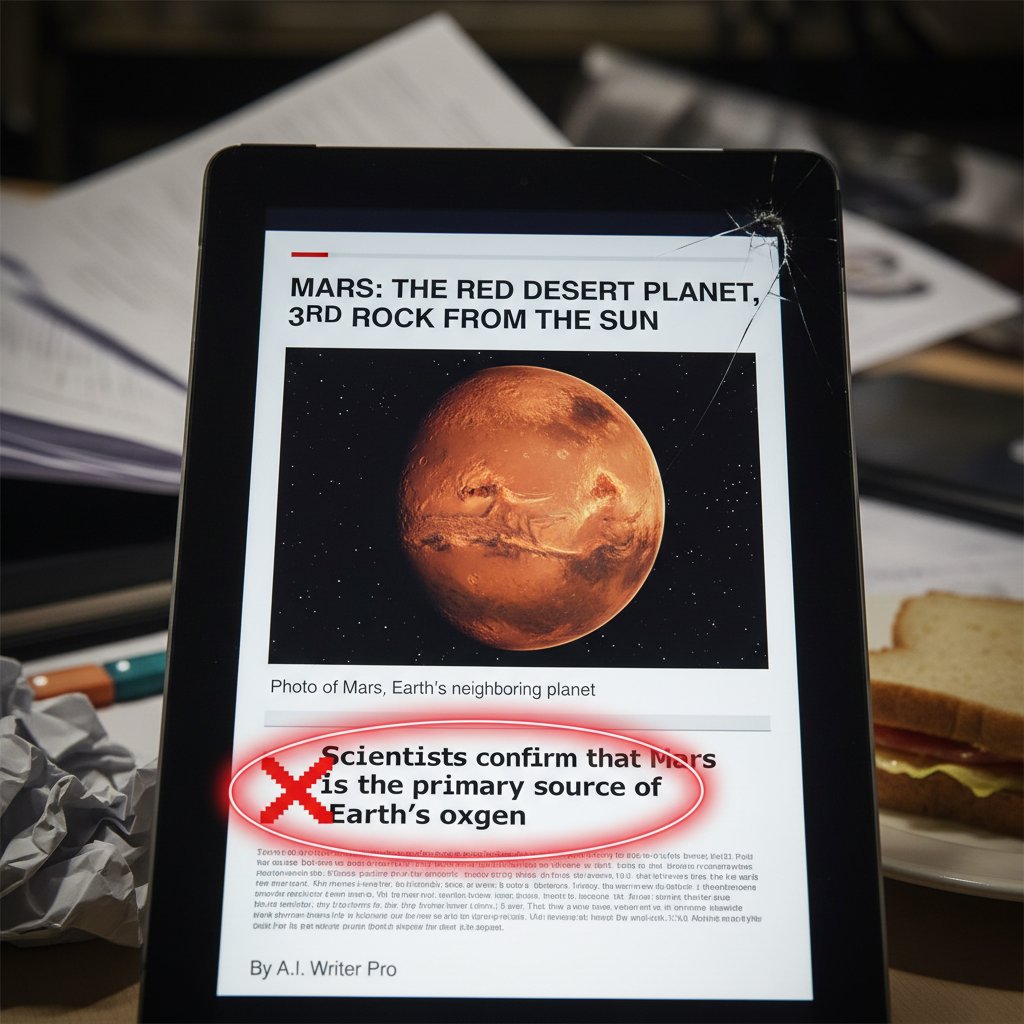

Myth #1: AI always gets the facts right

Here’s the cold reality: AI writing assistants are notorious for “hallucinating” facts—confidently stating errors, fabricating statistics, or misconstruing complex events. In a world obsessed with accuracy, these errors can snowball fast. According to Reuters Institute, 2024, public trust plummets when AI-generated news goes wrong, especially around images and videos.

Alt text: Example of AI writing a news mistake, showing the pitfalls of automated journalism accuracy.

How AI fact-checking fails—step-by-step:

- Training data is flawed: AI models ingest outdated, biased, or outright false data.

- Prompt ambiguity: Vague or complex prompts confuse the model, triggering guesswork.

- No real-world context: LLMs lack live awareness—they hallucinate plausible-sounding but incorrect facts.

- Human oversight is skipped: In the rush to publish, editorial review gets bypassed.

- Viral amplification: Errors spread instantly, and retractions rarely catch up.
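One partial guard against this failure chain is to cross-check every number in a draft against the structured data the story was built from. The sketch below is an assumption-laden illustration: the function name is made up, the regex only catches plain digits (not "four" or "1,200"), and it says nothing about whether a supported number is used in the right context.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(draft: str, source_values: list[float]) -> list[str]:
    """Return numbers that appear in the draft but not in the source data."""
    allowed = {float(v) for v in source_values}
    return [n for n in NUMBER.findall(draft) if float(n) not in allowed]


# Illustrative values only; not taken from any real incident.
source = [4.0, 120.0]
draft = "The spill affected 4 districts and 150 residents."
print(unsupported_numbers(draft, source))
```

A non-empty result is a reason to route the draft to a human, not proof of an error.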

Myth #2: AI journalism is unbiased and objective

Let’s kill the myth: bias in AI-generated news isn’t just possible—it’s inevitable. Algorithms reflect the prejudices and slants of their training data, whether it’s political, cultural, or geographic. Data from the Cornell Chronicle, 2023 reveals AI can subtly nudge writers’ opinions by suggesting language and framing.

"Bias isn't a bug, it's baked in." — Alex, AI ethics researcher (as quoted in Cornell Chronicle, 2023)

| Source of Bias | AI Journalism | Human Journalism | Example |
|---|---|---|---|
| Training data | Skewed datasets | Reporter’s personal bias | Over-representation of major cities |
| Algorithmic design | Optimization bias | Editorial slant | Favoring trending topics over niche issues |
| Editorial oversight | Automated risk scoring | Human judgement | Censoring controversial perspectives |

Table 2: Comparison of AI vs. human journalist bias—sources, types, examples. Source: Original analysis based on Cornell Chronicle, 2023, Reuters Institute, 2024

Myth #3: AI will replace all journalists

Automation anxiety is real, but the obituary for human reporters is premature. AI excels at formulaic tasks—earnings summaries, sports scores, weather updates. But investigative digs, nuanced interviews, and creative features? Still a human game. Hybrid workflows are the hottest trend: 78% of news organizations now use AI for content creation and engagement, but humans remain in the loop (Statista, 2024).

"AI won't kill journalism, but it will change who survives." — Maya, investigative reporter (as quoted in Reuters Institute, 2024)

Beyond the hype: Current reality in AI-powered newsrooms

Case study: When AI broke the news—and broke trust

In 2023, a major U.S. outlet let its AI engine loose on a breaking story about a chemical spill. Within minutes, the article spread across social media—but key details were wrong, including the affected city and casualty numbers. By the time editors caught up, misinformation had already gone viral.

Alt text: Chaos in the newsroom after AI error, with journalists scrambling to fix a viral mistake.

The public reaction was swift and brutal: trust eroded, apologies were demanded, and the newsroom spent days firefighting the digital wildfire. According to Reuters Institute, 2024, such incidents reinforce skepticism about AI-generated news, especially when human oversight is lacking.

Success stories: Where AI shines in journalism today

Despite high-profile misfires, AI-powered news generators have scored real wins. Outlets like Forbes use AI to draft earnings recaps at blazing speed, while BBC deploys AI for hyperlocal weather alerts. The key? Clear editorial oversight and targeted use cases.

- Real-time crisis reporting: AI can monitor data feeds and push instant updates on earthquakes, storms, or political upheaval.

- Hyperlocal news at scale: Small towns, often ignored by big media, get coverage thanks to automated reporting.

- Creative formats: Satire, data visualizations, and explainers get a boost from AI’s pattern-spotting prowess.

- Personalized content: AI tailors headlines and stories by region, interest, or demographic—helping local publishers punch above their weight.

For example, newsnest.ai has enabled digital publishers to triple their output during peak news cycles, with hybrid models reporting a 24% improvement in search rankings (SEMrush, 2024). The caveat: machines work best when they’re paired with sharp human editors who can sniff out mistakes before they go live.

The new workflow: Human + AI collaboration

Modern newsrooms don’t toss AI the keys and walk away—they build new workflows where humans and machines check each other’s work.

How to integrate AI writing assistants into editorial workflows:

- Set clear editorial standards: Define what AI can—and can’t—publish without review.

- Train your team: Educate journalists and editors on prompt engineering and AI evaluation.

- Implement layered review: Use humans to fact-check and contextualize AI output before publishing.

- Monitor performance: Track accuracy, engagement, and error rates to fine-tune AI use.

- Iterate constantly: Update prompts, datasets, and editorial policies as the landscape evolves.

Common pitfalls? Skipping human review, using outdated datasets, or failing to disclose AI involvement. Transparency and continuous oversight are non-negotiable.
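The "monitor performance" step is easier to act on when error rates are tracked per beat rather than as one newsroom-wide number. A minimal sketch, assuming reviewers log how many factual corrections each AI draft needed; the `ReviewRecord` fields are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    story_id: str
    beat: str
    corrections: int  # factual fixes made by the human reviewer


def error_rate_by_beat(records: list[ReviewRecord]) -> dict[str, float]:
    """Share of reviewed AI drafts per beat that needed at least one factual fix."""
    total = Counter(r.beat for r in records)
    flawed = Counter(r.beat for r in records if r.corrections > 0)
    return {beat: flawed[beat] / total[beat] for beat in total}


# Made-up review log for illustration.
log = [
    ReviewRecord("a1", "weather", 0),
    ReviewRecord("a2", "weather", 0),
    ReviewRecord("a3", "markets", 2),
    ReviewRecord("a4", "markets", 0),
]
rates = error_rate_by_beat(log)
print(rates)
```

A beat whose rate keeps climbing is a candidate for tighter prompts, better data, or pulling AI off that beat entirely.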

The dark side: Ethics, risks, and newsroom chaos

Plagiarism, deepfakes, and the credibility crisis

AI writing assistants are remix artists at heart. They pull from oceans of data—which can lead to accidental plagiarism or, worse, the outright fabrication of events. The rise of deepfake technology means AI-generated “news” can include photo-realistic images or videos that never happened, compounding the crisis of credibility.

Alt text: AI deepfake risk in journalism, with a surreal, morphing face over real news copy.

When a deepfake video “proves” a politician said something inflammatory, and it drops alongside an AI-written article, even seasoned readers struggle to separate real from fake. News organizations must arm themselves with forensic tools, but the line between fact and fiction keeps shifting.

Who’s accountable when AI gets it wrong?

Responsibility in AI journalism is a hot potato. Is it the developer who coded the algorithm, the editor who hit publish, or the platform that amplified the mistake? Too often, accountability gets lost in the shuffle.

| Step in AI News Chain | Who’s Responsible | Example |
|---|---|---|
| Data collection | Data provider, AI vendor | Outdated or biased data sources |
| Prompt generation | Human editor | Vague or misleading instructions |
| Draft creation | AI developer, tool vendor | Faulty or buggy algorithms |
| Editorial oversight | Newsroom editor | Failing to catch factual errors |
| Publication & distribution | Publisher/platform | Algorithmic amplification of error |

Table 3: Accountability matrix—who’s liable at each step of the AI news chain. Source: Original analysis based on current newsroom practices and Reuters Institute, 2024

"When everyone’s in charge, no one really is." — Jamie, digital editor (as quoted in Reuters Institute, 2024)

The hidden costs: What AI journalism really means for jobs and trust

AI writing assistants don’t just replace repetitive tasks; they reshape entire career paths. Since 2023, entry-level writing roles have dropped 27% and freelance gigs have shrunk by 35% (McKinsey, 2024). While some jobs morph into AI trainers or prompt engineers, others vanish overnight.

Financially, the median newsroom saves 40% on content costs, but rebuilding audience trust is a tougher bill. According to Reuters Institute, 2024, public confidence in AI-generated news is mixed at best, especially around sensitive topics. Rebuilding trust means doubling down on transparency—disclosing AI use, labeling content, and inviting public scrutiny.

AI vs. human: Extended comparisons and practical implications

Quality, speed, and nuance: Who really wins?

AI writing assistants can pump out 1,000 articles in the time it takes a human to draft one. But when it comes to nuance, context, and emotional resonance, the machine still falters.

| Metric | AI Journalism | Human Journalism | Winner |
|---|---|---|---|
| Speed | Instantaneous | Hours to days | AI |
| Cost | Minimal per article | High (salaries, overhead) | AI |
| Factual accuracy | 85-92% with review | 95%+ with diligence | Human (slight edge) |
| Depth/context | Limited | Rich/contextual | Human |
| Engagement | High (breaking news) | Higher (features) | Tie |
| Trust/credibility | Mixed | Higher | Human |
| Error rate | 7-15% (unreviewed) | 3-5% | Human |

Table 4: Head-to-head feature matrix—AI vs. human journalists on key metrics. Source: Original analysis based on MasterBlogging, 2023, Reuters Institute, 2024

Two examples make the split concrete: AI churns out real-time sports scores for a global audience, while investigative series like the Panama Papers remain the domain of dogged human teams.

The limits of AI creativity and investigation

No matter how sophisticated, AI writing assistants struggle with true storytelling. They can remix facts, but breaking a scandal, building sources, and connecting disparate clues still require human intuition. In 2021, an AI-generated climate change exposé went viral—until experts noticed missing context and misleading claims. By contrast, human investigative teams at ProPublica routinely uncover hidden patterns in public records that machines miss.

Expect advances in AI, but for now, stories requiring empathy, deep interviews, or original insight remain a human art.

When (and why) you should rely on humans over machines

Best practices for newsrooms:

- Sensitive or controversial topics: Rely on human oversight.

- Investigative reporting: Use AI for data mining, but put humans on synthesis.

- Local nuance: Human reporters catch cultural context AI misses.

- Breaking mistakes: Humans spot and correct errors AI won’t see.

- Opinion and analysis: Only humans can provide authentic viewpoint.

Hybrid alternatives—using AI for speed and humans for depth—offer the best of both worlds. Always build in editorial review, and never publish auto-generated content unchecked.

How to make AI journalism work: Practical frameworks and future-proofing

Building ethical guardrails for AI-powered news

Editorial standards aren’t optional—they’re your last line of defense. Regular audits, clear guidelines, and transparent disclosure must underpin any AI-powered newsroom.

Red flags to watch out for:

- Black-box algorithms with no explainability.

- Lack of transparency about AI involvement.

- Insufficient editorial oversight.

- Overreliance on outdated or biased datasets.

Tools like content provenance trackers and algorithm audits help mitigate risks, but policies must be reviewed and updated regularly.

Checklist: Safe and effective AI journalism adoption

Implementing AI writing assistants responsibly isn’t plug-and-play. Here’s how leading newsrooms do it:

- Assess needs: Define where AI adds real value—breaking news, summaries, data-heavy reporting.

- Vet tools: Choose transparent, auditable AI systems over black-box vendors.

- Train staff: Educate editors, reporters, and IT teams on AI best practices.

- Set boundaries: Restrict AI from publishing sensitive or unverified stories.

- Implement oversight: Add editorial review and regular audits.

- Disclose AI use: Label AI-generated content clearly for readers.

- Request reader feedback: Listen to audience concerns and adjust practices.

Optimizing results means constant iteration—tight feedback loops, regular retraining, and never assuming the tech is infallible.
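Two items on the checklist, setting boundaries and disclosing AI use, translate naturally into code. The sketch below is one possible shape, not a standard: the topic list, the route names, and the disclosure wording are all assumptions that a newsroom would replace with its own policy.

```python
# Assumed list for illustration; a real policy would define its own topics.
SENSITIVE_TOPICS = {"elections", "crime", "health"}

# Assumed wording; adapt to the outlet's disclosure policy.
DISCLOSURE = "This article was drafted with AI assistance and reviewed by an editor."


def review_route(topic: str, verified: bool) -> str:
    """Pick a review path; sensitive or unverified drafts get the stricter one."""
    if topic in SENSITIVE_TOPICS or not verified:
        return "hold_for_senior_editor"
    return "standard_review"


def with_disclosure(body: str) -> str:
    """Append a reader-facing AI label to the story body."""
    return f"{body}\n\n{DISCLOSURE}"


print(review_route("weather", verified=True))
print(review_route("elections", verified=True))
print(with_disclosure("Rain is expected across the region on Friday."))
```

Note that neither route skips review. The gate only decides how much scrutiny a draft gets, which matches the rule of never publishing auto-generated content unchecked.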

What newsrooms wish they knew before going AI-first

Early adopters learned fast: AI doesn’t save time so much as shift where effort is spent. Editorial teams found themselves fixing weird errors, retraining models, and explaining mishaps to readers.

Alternative approaches include keeping AI on a tight leash (draft-only, never publish), limiting AI to non-controversial beats, or using “human-in-the-loop” review for every major story.

"We thought AI would save time. It just changed what we had to fix." — Chris, managing editor (as quoted in Reuters Institute, 2024)

The global view: AI in journalism beyond the usual suspects

AI-powered news in non-English markets

AI journalism isn’t just an English-language phenomenon. In Asia, Africa, and Latin America, AI-generated content fills gaps in under-resourced newsrooms. For example, Indian and Brazilian outlets use AI for cricket coverage and municipal news, while Kenyan startups deploy AI to create Swahili-language updates for rural communities.

Alt text: AI journalism in global newsrooms, showing multilingual screens and diverse reporters.

Challenges include limited high-quality training data in non-English languages, lack of local nuance, and access barriers for minority-led newsrooms.

Local news, global reach: The promise and perils

AI writing assistants offer a lifeline to struggling local journalism—automating court reports, event listings, and hyperlocal weather. Small publishers report major cost savings and increased coverage breadth. But there’s a flip side: some communities worry about loss of nuance, homogenization of voice, and stories that feel algorithmically generic.

In Chicago, automated crime reports keep neighborhoods informed; in rural India, AI delivers agricultural tips. But without human oversight, even these hyperlocal stories can slip into error or bias.

What’s next for AI journalism? Trends, forecasts, and wildcards

Emerging trends: What the data says

The numbers are stark: the AI market in media was $1.8 billion in 2023 and is projected to hit $3.8 billion by 2027 (Grand View Research, 2024). Ninety percent of content marketers plan to use AI by 2025 (up from 64.7% in 2023), and hybrid (AI+human) content ranks 24% better in search (Siege Media, 2024). But error rates remain a concern, hovering at 7-15% for unreviewed AI articles (MasterBlogging, 2023).
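To put the market projection in annual terms, the two figures cited above ($1.8 billion in 2023, $3.8 billion by 2027) can be converted into a compound annual growth rate. This is plain arithmetic on the quoted numbers, assuming steady compounding over the four years; it works out to roughly 20% a year.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


# Figures as quoted in the text: $1.8B (2023) to $3.8B (2027).
growth = cagr(1.8, 3.8, 2027 - 2023)
print(f"{growth:.1%}")
```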

| Metric | 2022 | 2023 | 2024 | 2025 (projected) |
|---|---|---|---|---|
| AI adoption (% news orgs) | 55% | 67% | 78% | 82% |
| AI media market ($B) | $1.2 | $1.8 | $2.5 | $3.8 |
| Content marketers using AI | 49% | 64.7% | 90% | 95% |
| Audience trust (% positive) | 60% | 48% | 44% | 42% |
| Error rate (AI, unreviewed) | 8% | 10% | 13% | 15% |

Table 5: Statistical summary of AI journalism growth (adoption rates, error rates, audience trust metrics), 2022-2025. Source: Original analysis based on Statista, 2024, Grand View Research, 2024, Siege Media, 2024, Reuters Institute, 2024

The winners: agile, hybrid newsrooms with robust oversight. The losers: those who automate blindly and erode public trust.

Wildcards: What no one saw coming

AI journalism is full of left turns. Outlets have had articles rewritten by “prompt hackers” exploiting open interfaces. Conversely, some breaking news stories were scooped by AI hours before any human team could react, thanks to 24/7 monitoring. In 2024, one hyperlocal publisher saw their AI assistant misinterpret a city mayor’s comment as an official resignation—sparking internet chaos.

newsnest.ai and similar services build redundancy and real-time monitoring into their workflows to catch surprises others miss. The reality: adaptability, not perfection, is the survival skill.

How to future-proof your newsroom

The adoption arc so far, and where resilient newsrooms are heading:

- 2020-2022: Early LLM adoption (drafts only)

- 2022-2024: Hybrid workflows, layered review

- 2024+: Automated trend analysis, real-time fact-checking

- 2025: Multimodal content (text, audio, video) from unified AI platforms

The real secret: invest in continuous learning, critical thinking, and editorial skepticism. No model is perfect, but your process can be.

The new literacy: How readers and journalists must adapt

Spotting AI-written news: Tips for the savvy reader

The average reader is woefully unprepared to spot AI-written news. But there are clues:

- Repetitive sentence structure or “too-perfect” grammar.

- Generic or oddly vague attributions (“according to sources”).

- Speedy updates on obscure topics.

- Lack of nuanced local details or context.

- Unusual phrasing or odd fact pairings.

Practical tips for readers:

- Cross-check breaking news with multiple outlets.

- Look for explicit disclosure labels.

- Question stories that appear instantaneously after an event.

- Examine source links—are they real and verified?

- Watch for subtle shifts in tone or emphasis.

Media literacy matters more than ever. The machines won’t slow down—your skepticism is your new toolkit.
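The vague-attribution clue is the easiest one on the list to check mechanically. The sketch below is a toy heuristic, not a detector: only "according to sources" comes from the list above, the other phrases are illustrative assumptions, and human reporters use these phrases too, so a match is a prompt to look closer and nothing more.

```python
import re

# "according to sources" comes from the list above; the other phrases are
# illustrative assumptions, not a validated detector.
VAGUE_PATTERNS = [
    r"according to sources",
    r"experts say",
    r"reports suggest",
]


def vague_attributions(text: str) -> list[str]:
    """Return each vague attribution phrase found in the text, in pattern order."""
    lowered = text.lower()
    return [p for p in VAGUE_PATTERNS if re.search(p, lowered)]


sample = "According to sources, the mayor resigned. Experts say more changes follow."
print(vague_attributions(sample))
```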

Upskilling for the AI era: Journalists in transition

Journalists aren’t being replaced—they’re being reinvented. The new requirements: prompt engineering, data analysis, AI ethics, and critical fact-checking. Leading newsrooms now offer upskilling programs in AI literacy, workflow integration, and digital forensics. Those who adapt not only survive—they shape the future of news.

Long-term career sustainability depends on blending age-old reporting instincts with new-school tech fluency.

Supplementary deep dives: Adjacent issues and controversies

AI and investigative journalism: Friend or foe?

AI can help investigative journalists comb through vast troves of data, spot patterns, and flag anomalies. But it can also miss contextual nuance or get stuck in algorithmic blind spots. Scenarios range from AI uncovering hidden financial networks to mislabeling innocent individuals in crime databases. Responsible use means treating AI as a tool—not a truth machine.

Cultural impact: Changing what stories get told

Algorithms don’t just report the news; they decide which stories surface. This risks creating echo chambers, amplifying click-friendly content, and sidelining underrepresented voices. But with conscious tuning, AI can also amplify marginalized perspectives—if humans stay in the loop to monitor outcomes.

Transparency and reader trust: Can newsrooms win back the public?

Transparency is the new currency. Many outlets now clearly label AI-generated content, disclose model limitations, and invite reader feedback.

Transparency. Disclosure of when and how AI is used in news creation.

Explainability. The ability to detail how and why an AI made a given decision, which is critical for editorial trust.

Accountability. Clear lines of responsibility when errors or bias occur.

newsnest.ai and peer services play a key role by promoting clear labeling, algorithmic audits, and open communication with readers.

Conclusion: The future of news in an AI-powered world

Synthesis: What we’ve learned (and what we still don’t know)

AI writing assistant journalism is both revolution and reckoning. The newsroom is faster, leaner, and infinitely more scalable—but also teetering on new ethical cliffs. Myths about objectivity and infallibility are dead; the reality is a messy mash of speed, cost, bias, and fragile trust. Newsrooms must adapt or perish, but the human instinct for skepticism and story remains the ultimate safeguard.

The big questions remain: Can AI truly understand context? Who is accountable when the machine gets it wrong? Will public trust recover or retreat?

The call to action: Critical engagement, not blind adoption

Readers and journalists alike face a crossroads. Accepting AI as a newsroom tool means sharpening your critical instincts—questioning, verifying, and demanding transparency at every turn. For journalists, that means upskilling, auditing, and doubling down on editorial standards. For everyone else: don’t mistake machine speed for wisdom.

When the next “breaking news” notification pops up, ask yourself: Is this the story, or just a story? The future of journalism—human or machine—depends on your answer.

Sources

References cited in this article

- Statista: AI and News (statista.com)

- Reuters Institute: Public Attitudes (reutersinstitute.politics.ox.ac.uk)

- Cornell: AI and Opinion Shifts (news.cornell.edu)

- Siege Media: AI Writing Statistics (siegemedia.com)

- Frontiers in Communication: Digital Transformation (frontiersin.org)

- Brookings: AI Equity Lab (brookings.edu)

- Wikipedia: Automated Journalism (en.wikipedia.org)

- Columbia Journalism Review: Guide to Automated Journalism (cjr.org)

- Forbes: Debunking AI Myths (forbes.com)

- YouAccel: Myths about AI Journalism (youaccel.com)

- MDPI Systematic Review (mdpi.com)

- ScienceDirect: Perception of AI in Journalism (sciencedirect.com)

- Harvard: AI Bias in Journalism (scholar.harvard.edu)

- Frontiers: Ethics in AI Journalism (frontiersin.org)

- Pew Research: Attitudes on AI (pewresearch.org)

- Statista: AI in Newsrooms (statista.com)

- Reuters Institute (ringpublishing.com)

- Bloomberg: Hoodline AI Fake Bylines (sciencedirect.com)

- University of Kansas: Reader Trust Study (news.ku.edu)

- Poynter: AI Ethics Starter Kit (poynter.org)

- Reuters: Deepfakes and Elections (reuters.com)

- NYT: Deepfake Laws (nytimes.com)

- Security.org: Deepfake Stats (security.org)

- Nature: Human vs. AI News Quality (nature.com)

- Council of Europe Guidelines (coe.int)

- JournalismAI Starter Pack (journalismai.info)

- Paris Charter on AI in Journalism (rsf.org)

- Reuters Institute: AI and Journalism (reutersinstitute.politics.ox.ac.uk)

- Columbia Journalism Review: AI in the News (cjr.org)
